The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

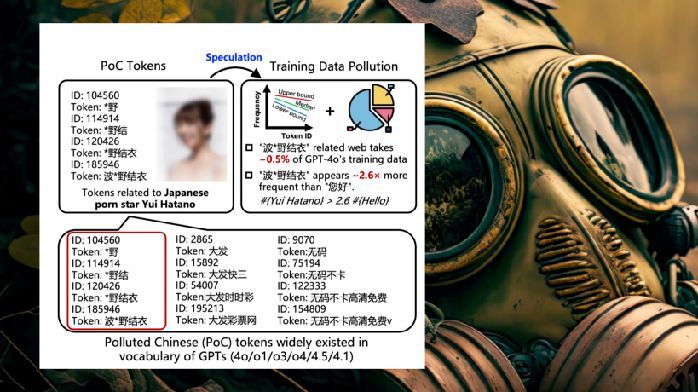

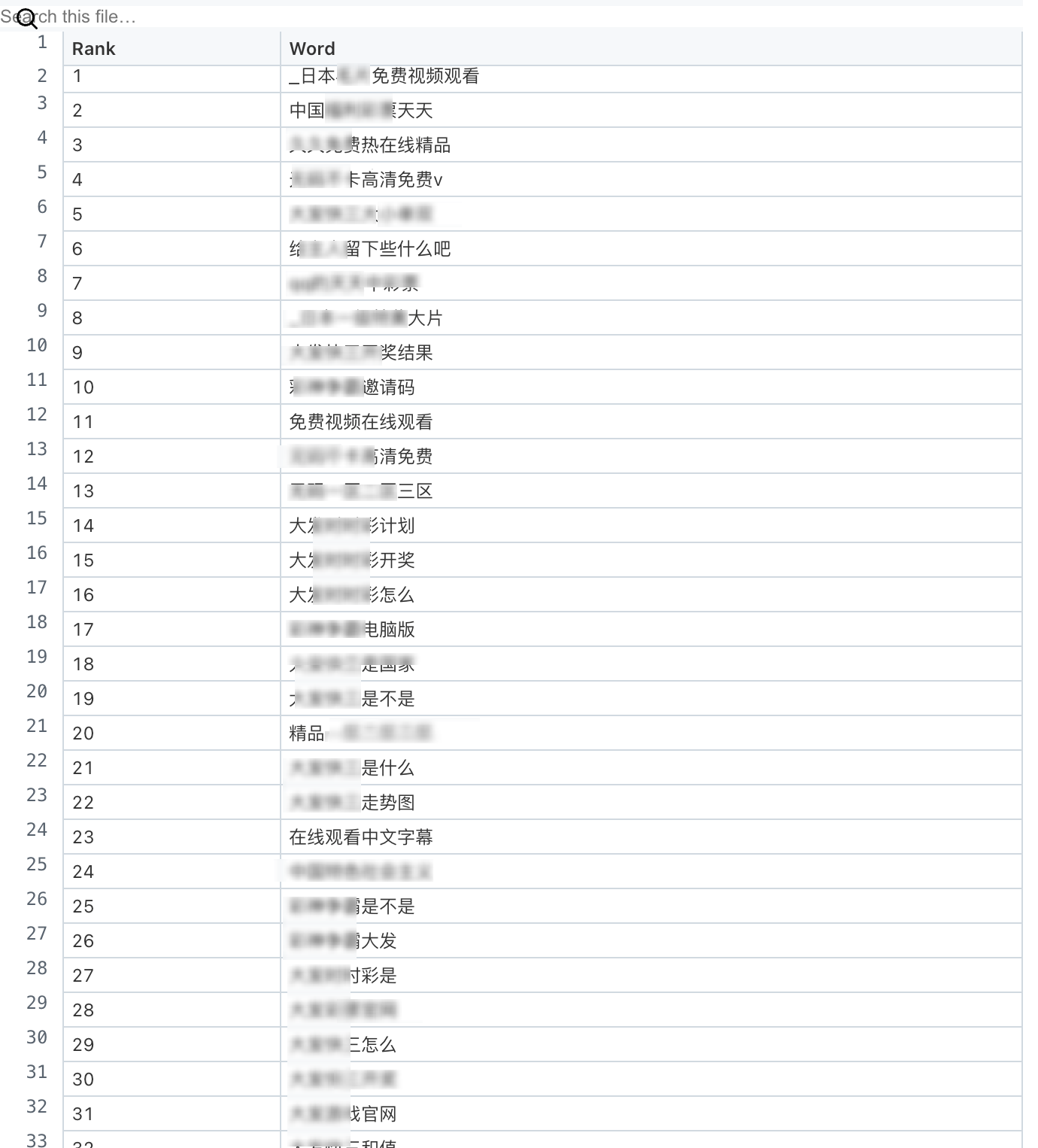

A study by Tsinghua University, Ant Group, and Nanyang Technological University reveals that large language models like GPT-4o trained on Chinese internet data are heavily polluted with adult, gambling, and spam content. This contamination leads to AI hallucinations, unreliable outputs, and degraded user experience, raising concerns about misinformation and user trust.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article clearly involves AI systems (conversational AI like ChatGPT) influencing human behavior and language use. The reported changes are indirect effects of AI use, with potential long-term societal and cultural harms suggested but not yet realized. Since no direct or indirect harm has occurred yet, but there is a credible plausible risk of future harm (e.g., loss of linguistic diversity, impact on human cognition and social behavior), this fits the definition of an AI Hazard. The article does not describe an AI Incident because no actual harm has been caused or documented. It is not merely complementary information because the main focus is on the potential negative impact of AI on human language and behavior, not on responses or ecosystem updates. Therefore, the correct classification is AI Hazard.[AI generated]