The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

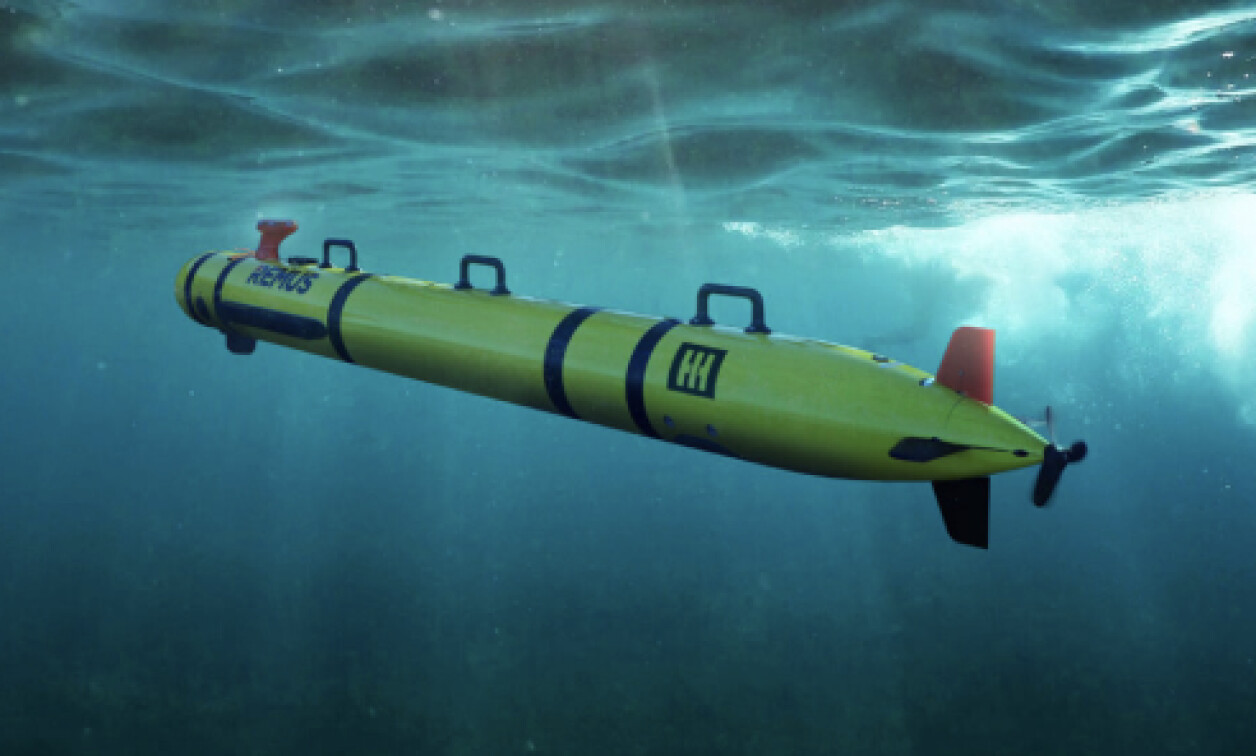

Thales and HII completed a successful field exercise in Massachusetts that integrated the AI-enabled SAMDIS 600 sonar with the REMUS 620 autonomous underwater vehicle. The system demonstrated advanced autonomous mine detection and classification capabilities, and no harm or malfunction was reported.[AI generated]