The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

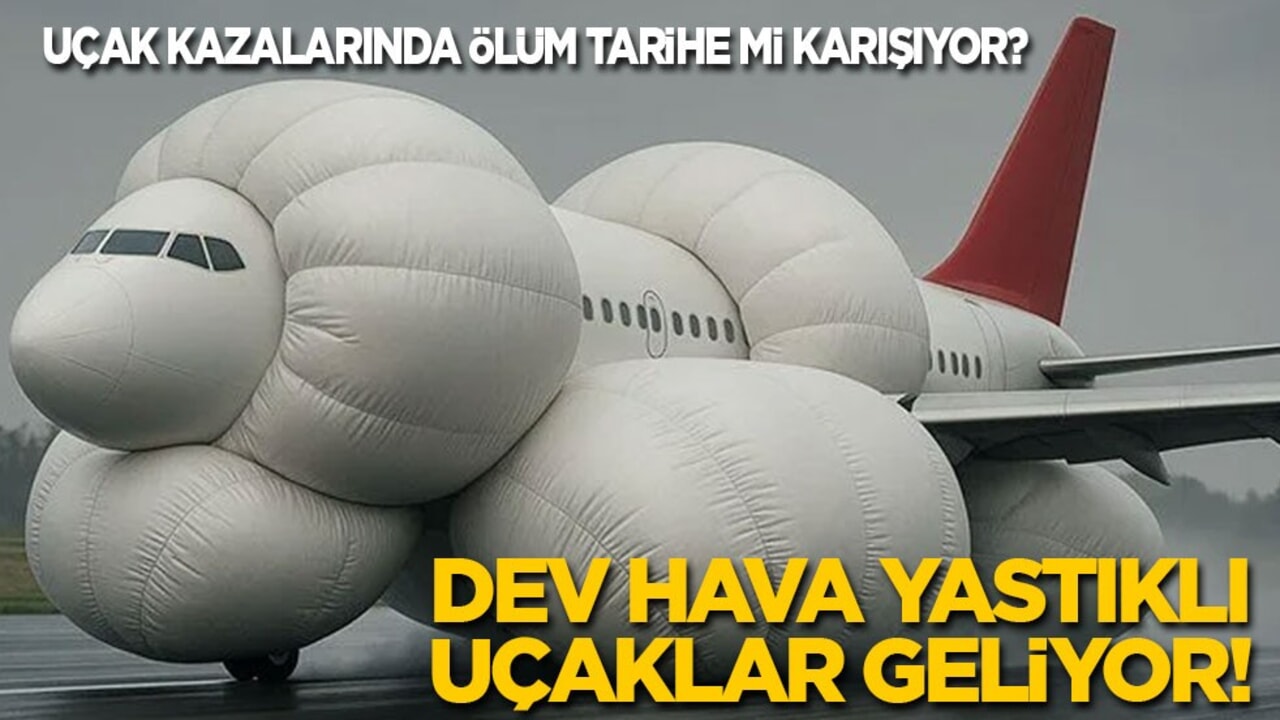

Inspired by a recent Air India crash, engineers from the Birla Institute of Technology and Science in Dubai have developed Project Rebirth, an AI-powered aircraft safety concept. The system is designed to use AI to detect an imminent crash and then deploy massive airbags and other mechanisms, with the aim of turning fatal impacts into survivable landings. The project remains in the prototype stage. [AI generated]