The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

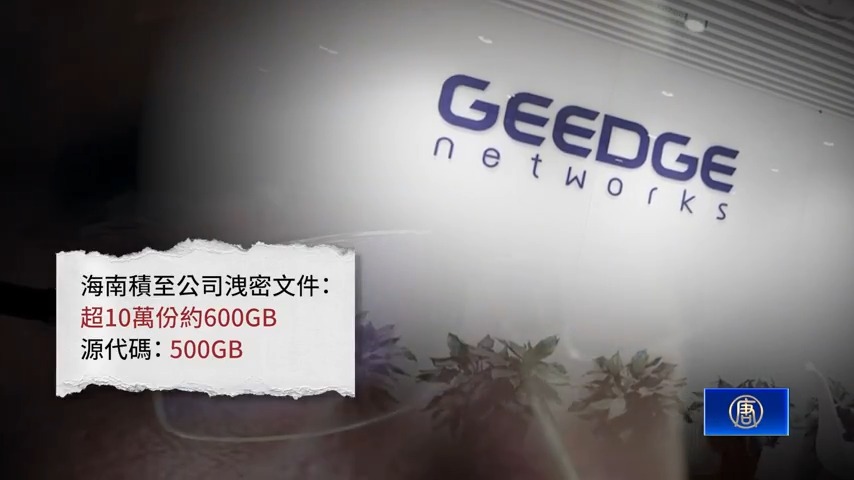

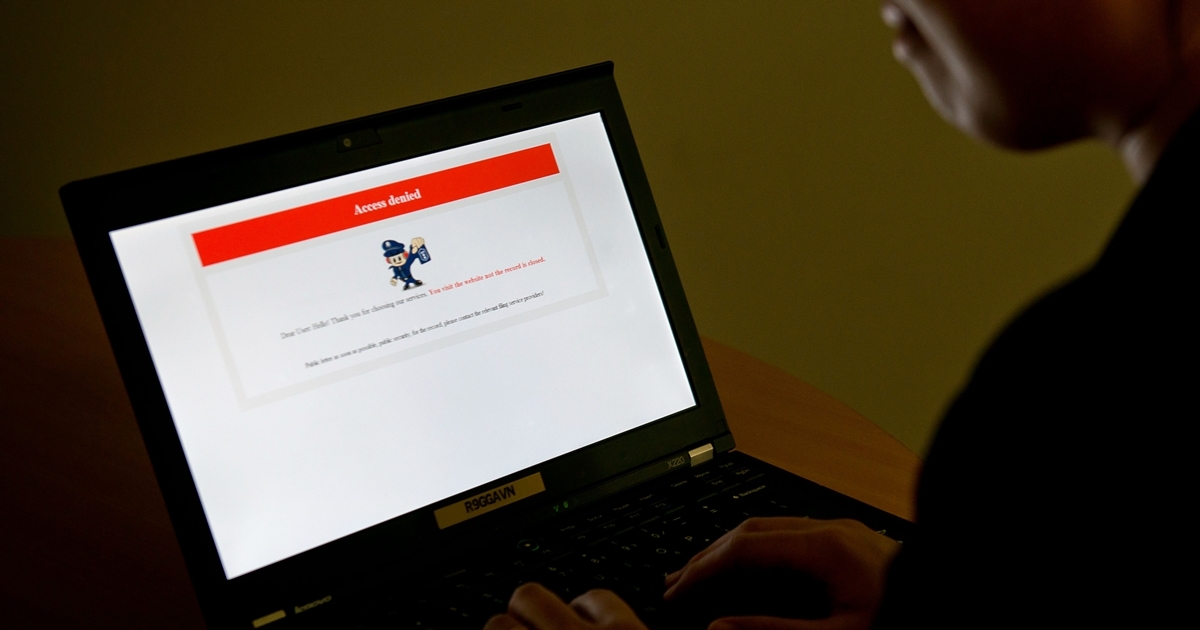

More than 500 GB of internal documents and source code from Geedge Networks and related institutions behind China's Great Firewall were leaked, revealing the use and export of AI-driven censorship and surveillance technologies. These systems, deployed domestically and in countries such as Myanmar and Kazakhstan, have enabled large-scale violations of privacy and freedom of expression. [AI generated]