The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

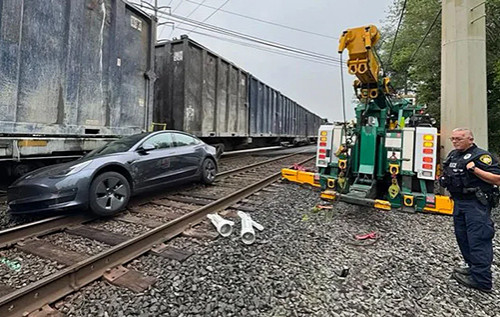

Tesla's Full Self-Driving (FSD) AI system has repeatedly failed to detect trains at railroad crossings, leading to near-accidents and multiple user complaints. Video evidence and reports from several drivers prompted the U.S. National Highway Traffic Safety Administration (NHTSA) to launch an investigation into potential safety defects in the system. [AI generated]