The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

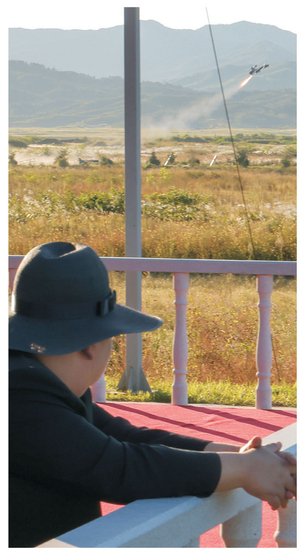

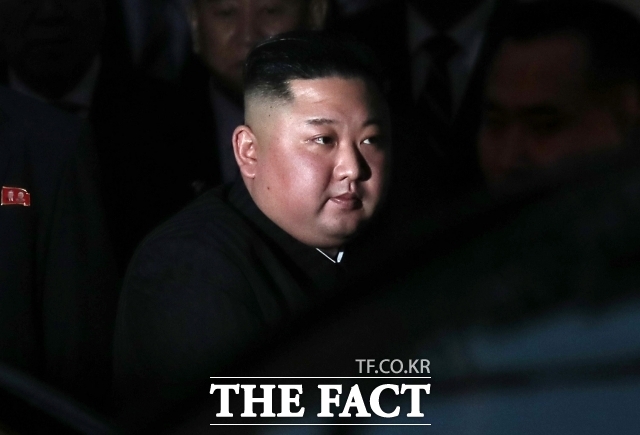

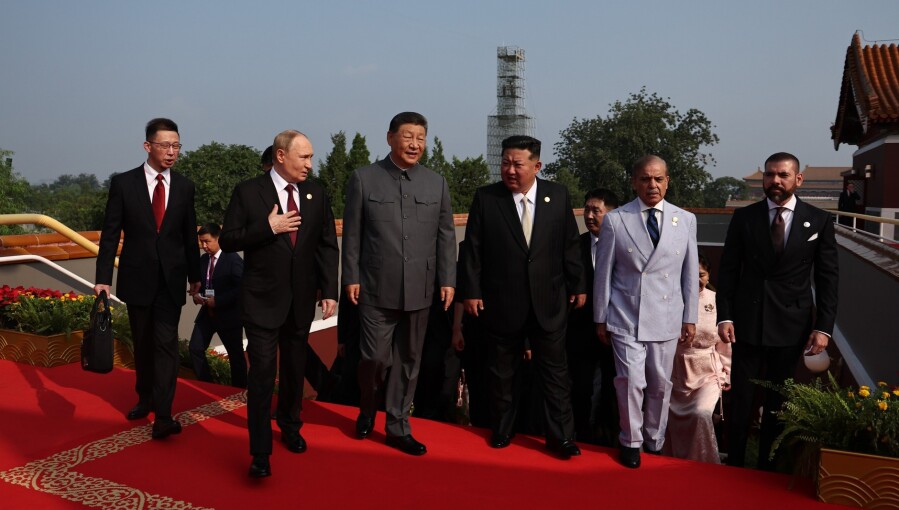

North Korean leader Kim Jong Un supervised tests of AI-enabled attack drones, which successfully destroyed their targets, and called for rapid advancement of artificial intelligence technologies in military applications. The event highlights North Korea's prioritization of AI-driven unmanned weapon systems and raises concerns about future risks associated with autonomous military technologies. [AI generated]
