The information displayed in the AIM (AI Incidents Monitor) should not be reported as representing the official views of the OECD or of its member countries.

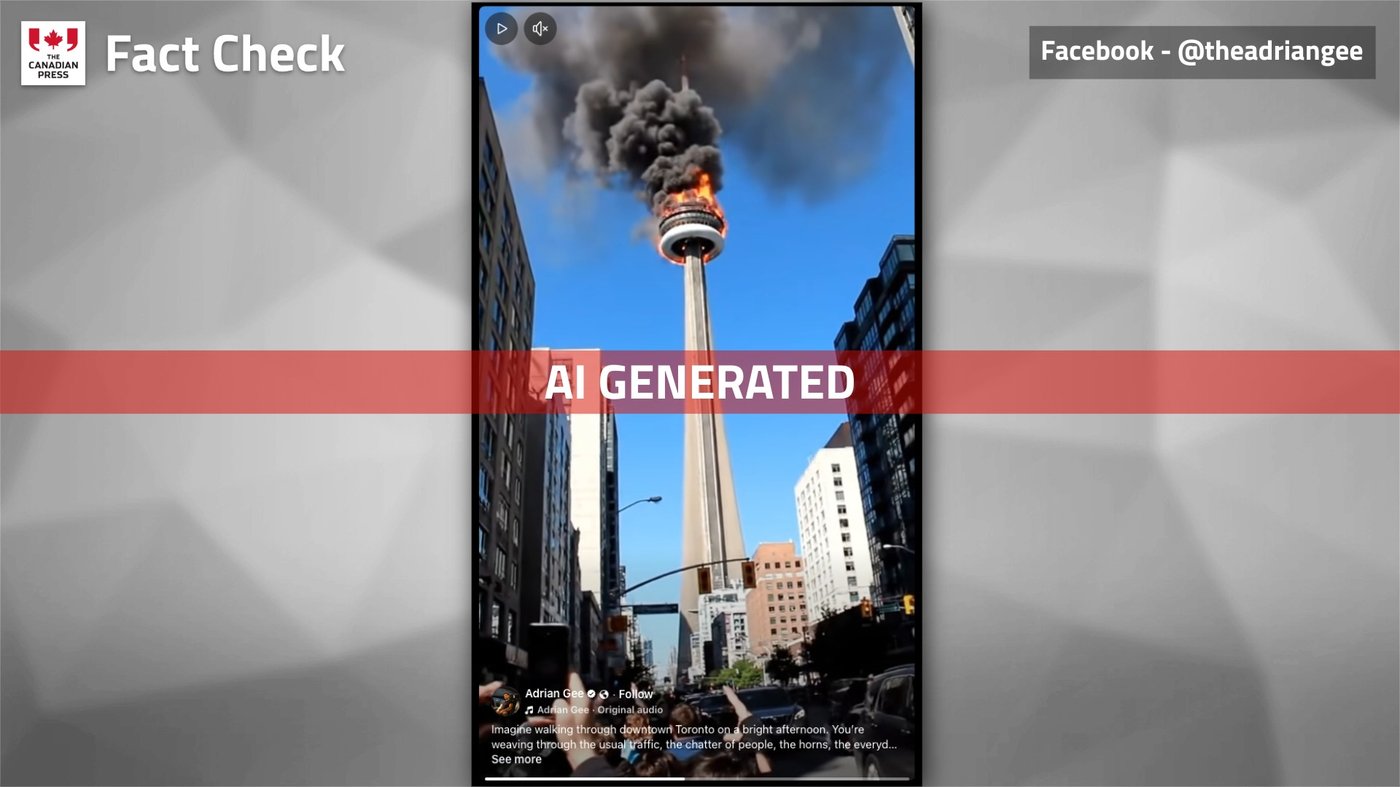

An AI-generated deepfake video falsely showing Toronto's CN Tower on fire went viral on Facebook, amassing around 12 million views and thousands of shares. The video misled viewers and spread misinformation, despite official confirmation that no fire occurred. The incident highlights the potential harm of AI-generated fake content to public trust and information integrity.[AI generated]