The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

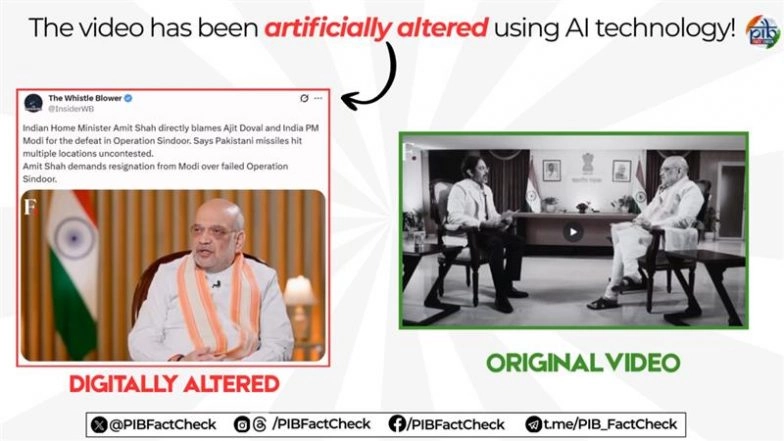

An AI-generated deepfake video falsely depicting Indian Home Minister Amit Shah criticizing Prime Minister Modi and NSA Ajit Doval over 'Operation Sindoor' circulated widely on social media. The video, flagged as fake by official fact-checkers, spread misinformation and risked undermining public trust in government officials. [AI generated]