The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

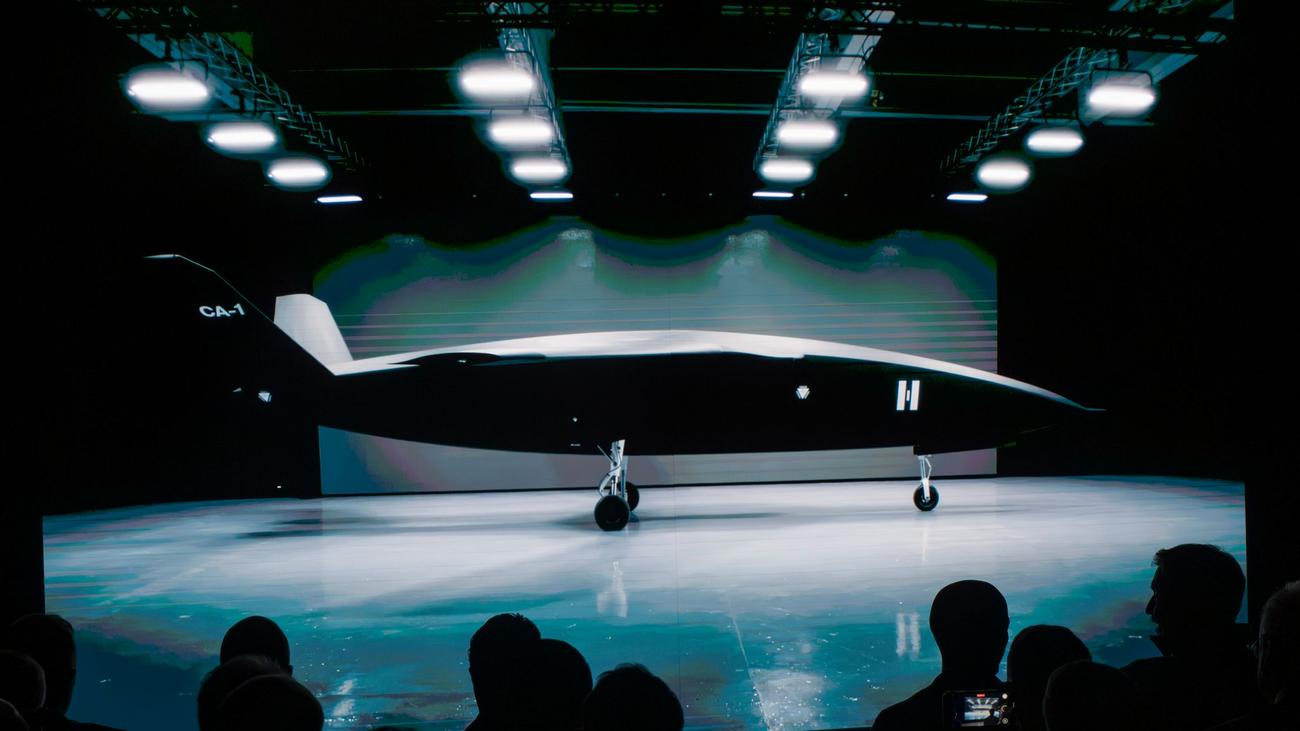

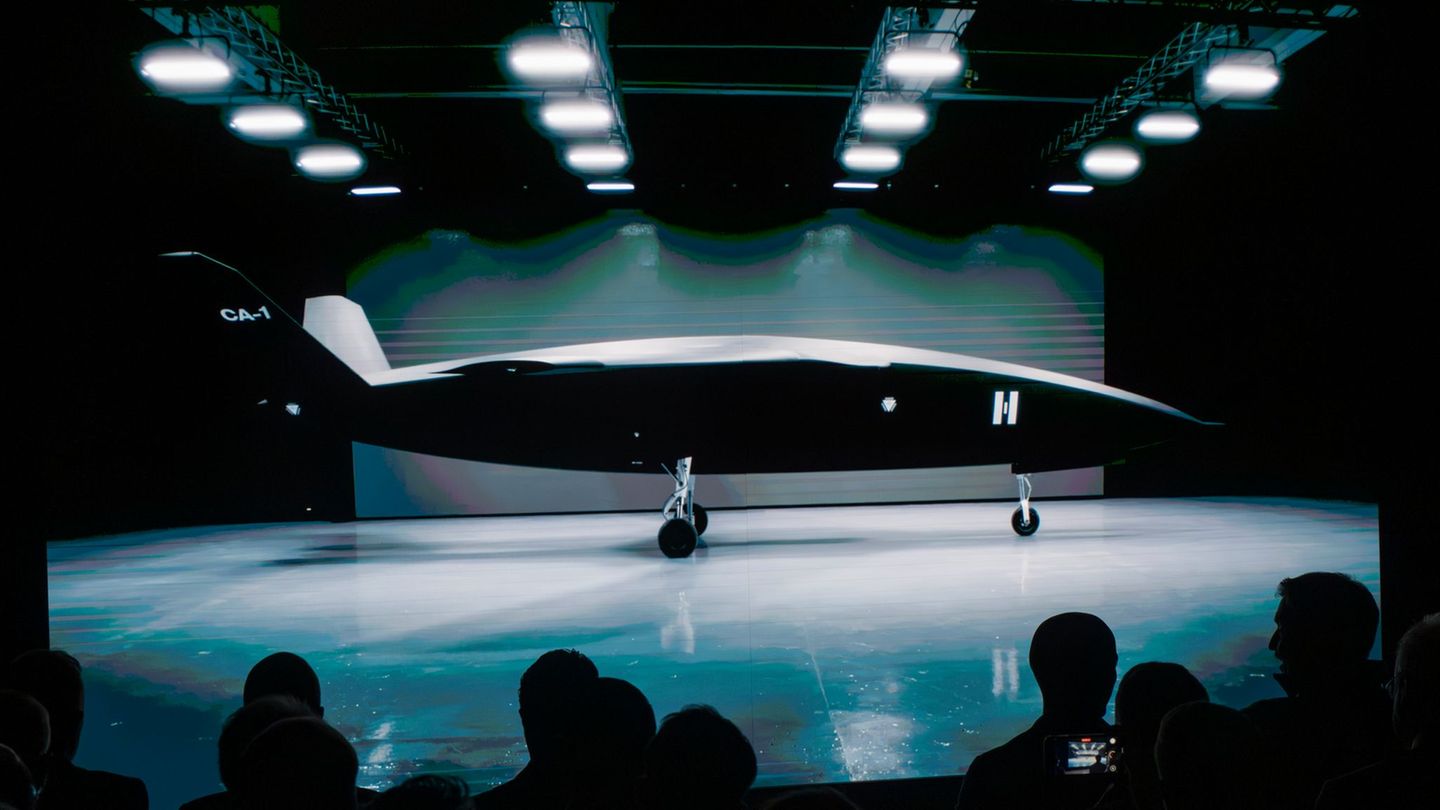

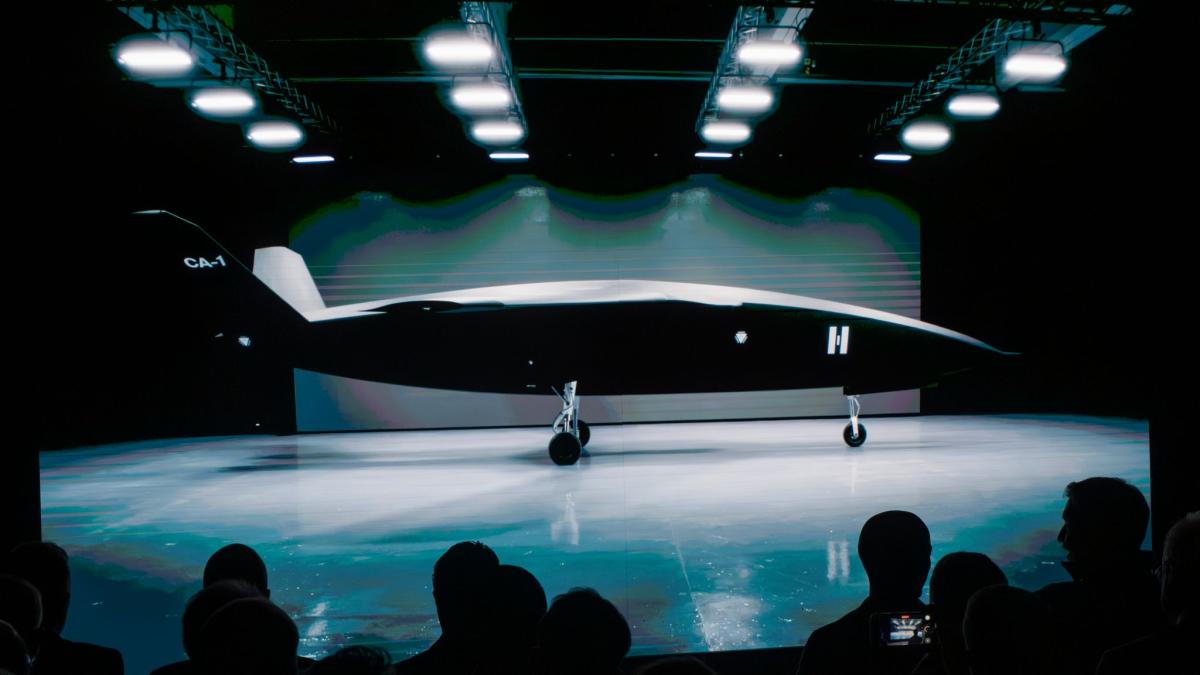

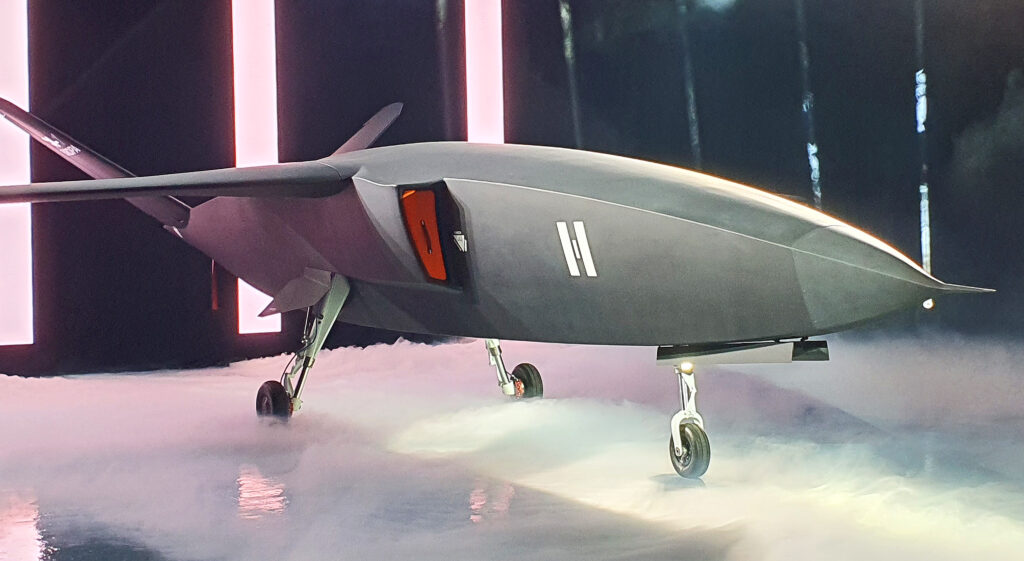

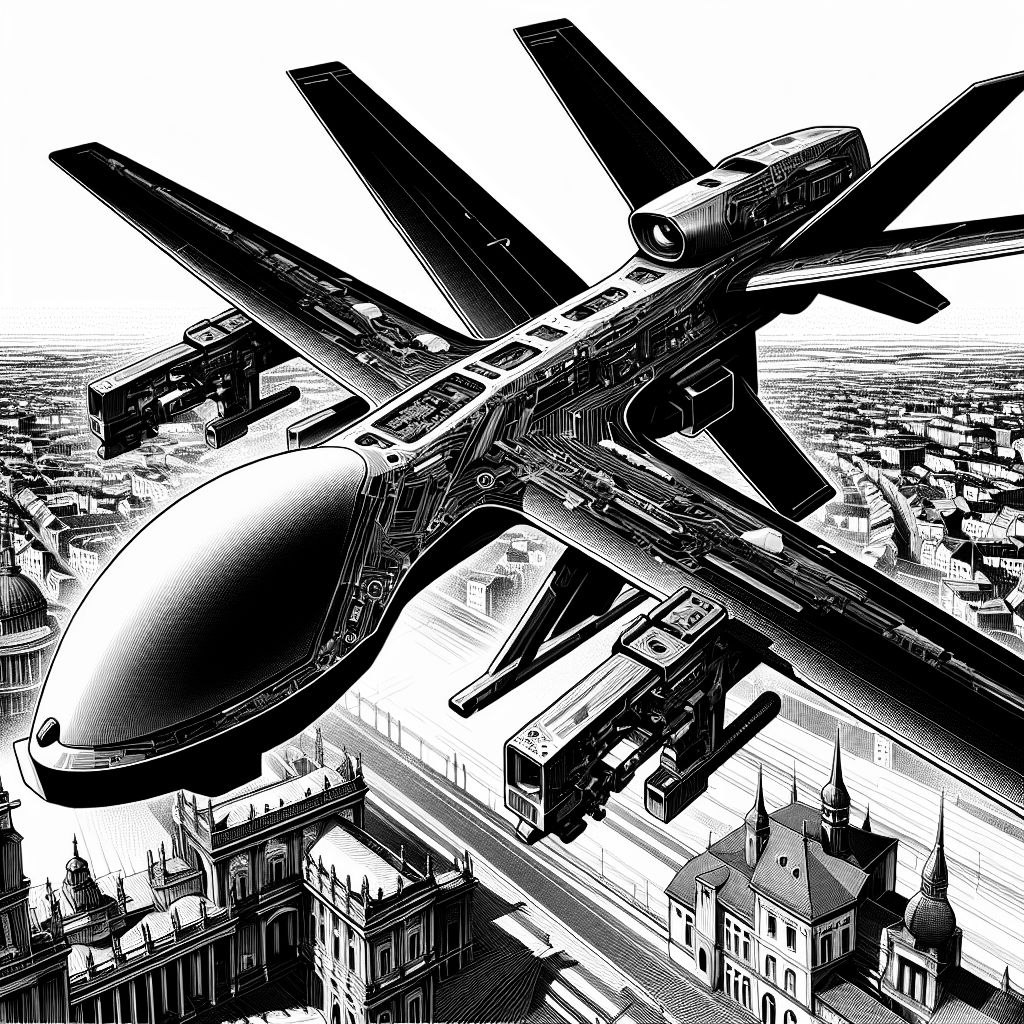

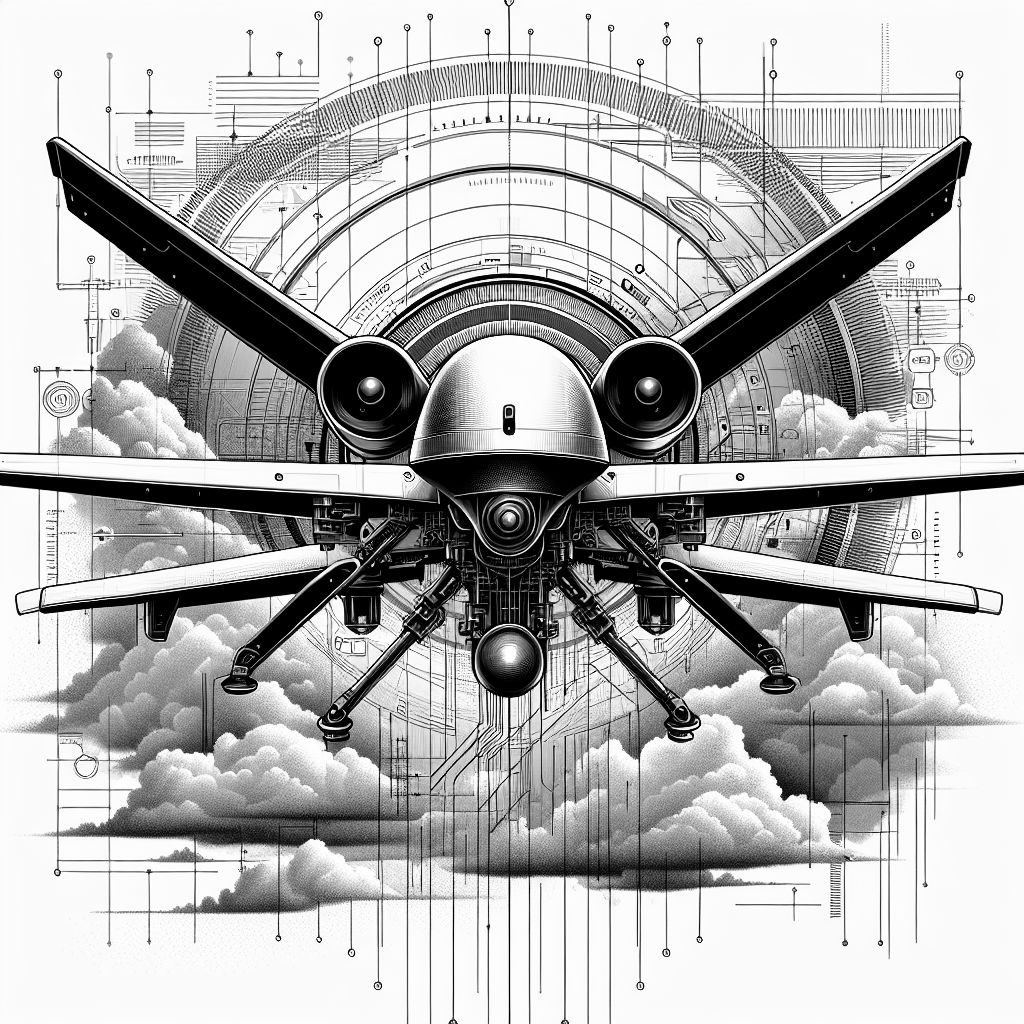

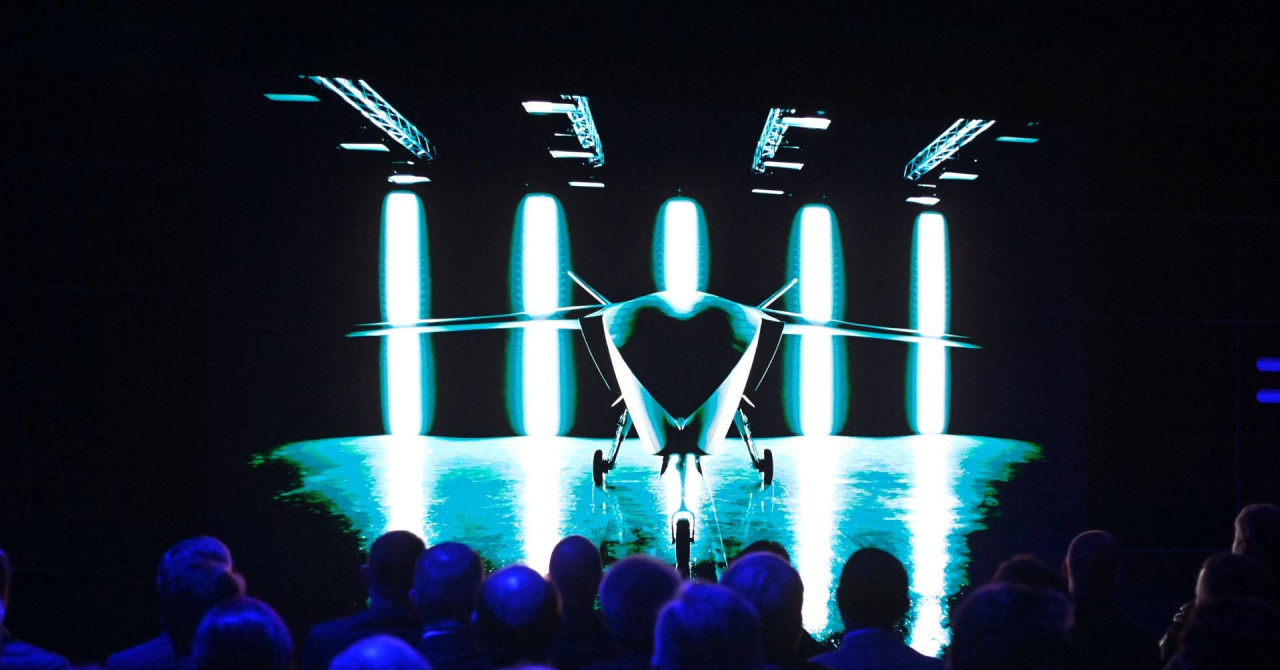

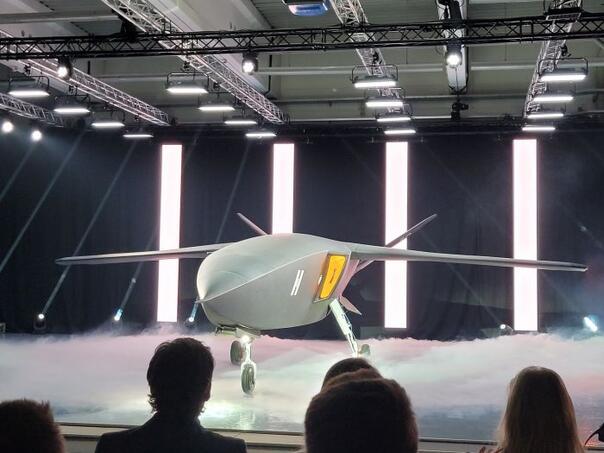

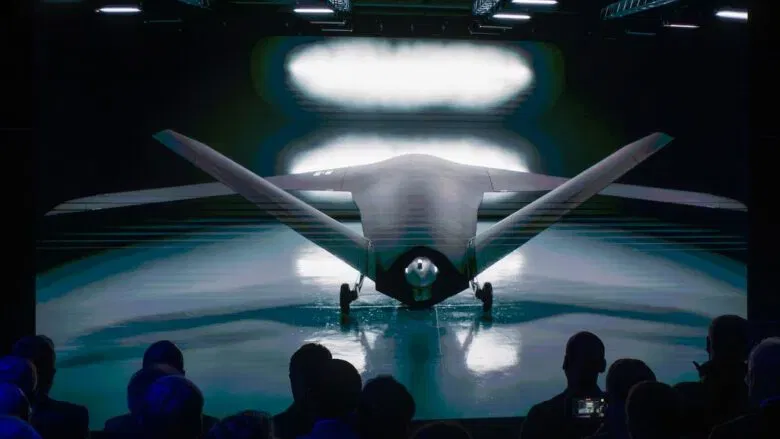

German defense startup Helsing, with Grob Aircraft, has unveiled the design for CA-1 Europa, an AI-enabled autonomous combat drone intended for military use. Controlled by Helsing's "Centaur" AI, the drone is designed for autonomous missions, including lethal operations, raising concerns about future risks of AI-driven warfare. Production is planned within four years. [AI generated]