The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

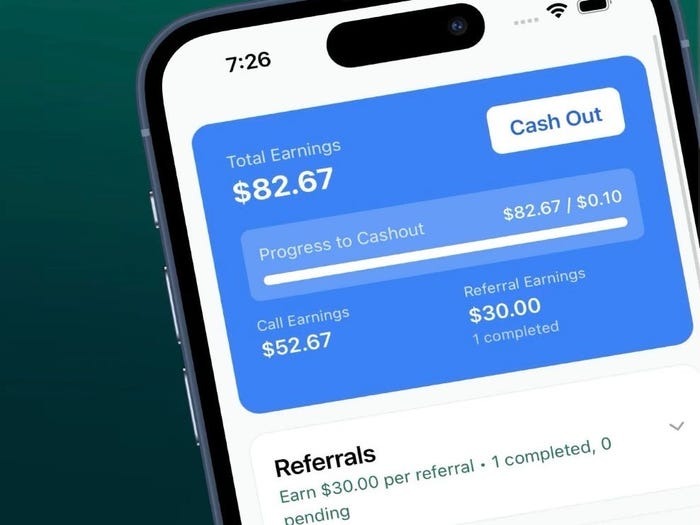

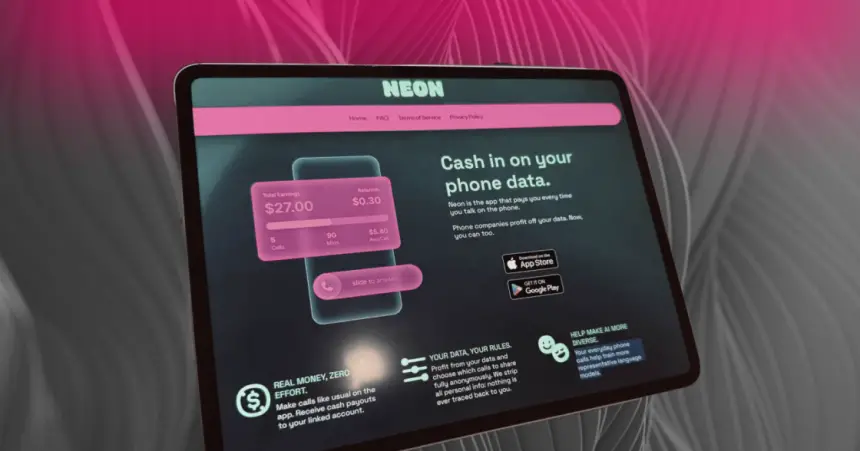

The Neon app, rapidly rising in popularity in the US, pays users to record their phone calls, then sells these recordings to AI companies for model training. This practice has sparked major privacy, legal, and security concerns, including risks of identity theft and voice fraud due to AI misuse of personal voice data. [AI generated]

Why's our monitor labelling this an incident or hazard?

The Neon app sells recorded voice data to AI companies for training models, which ties it directly to AI development and use. The event reports harms including privacy violations and legal concerns about consent, along with risks of identity theft and fraud stemming from AI misuse of voice data. These harms fall under violations of human rights and harm to communities. The involvement of AI in the development and use of the recordings is central to the incident. Hence, this is classified as an AI Incident. [AI generated]
