The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

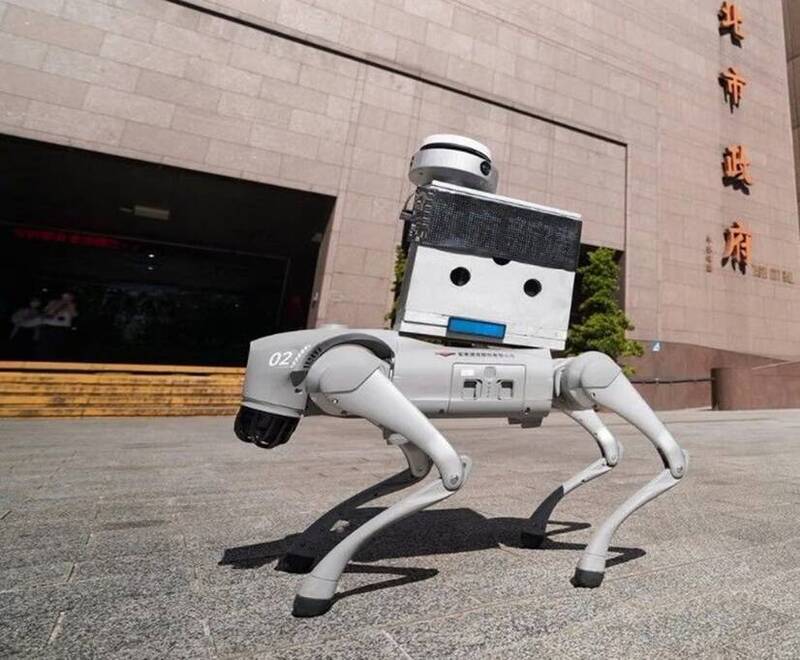

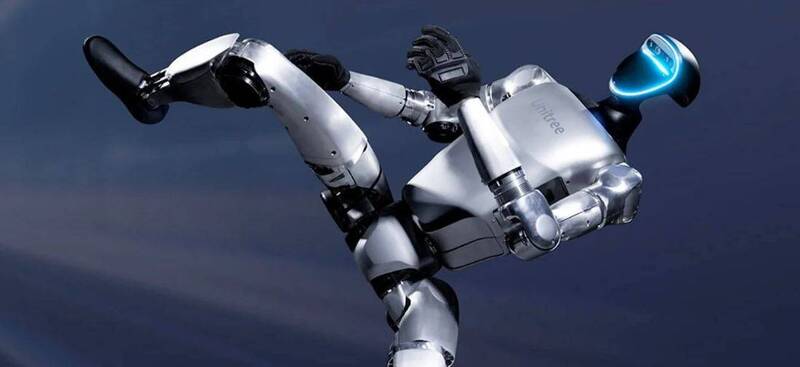

Security researchers disclosed a critical Bluetooth vulnerability in Unitree's AI-powered robots that allows attackers to remotely control and infect large numbers of devices. Because the flaw enables self-propagating attacks, it creates a risk of data theft and unauthorized robot control, and it could affect public safety, particularly where these robots are deployed in public services. [AI generated]