The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

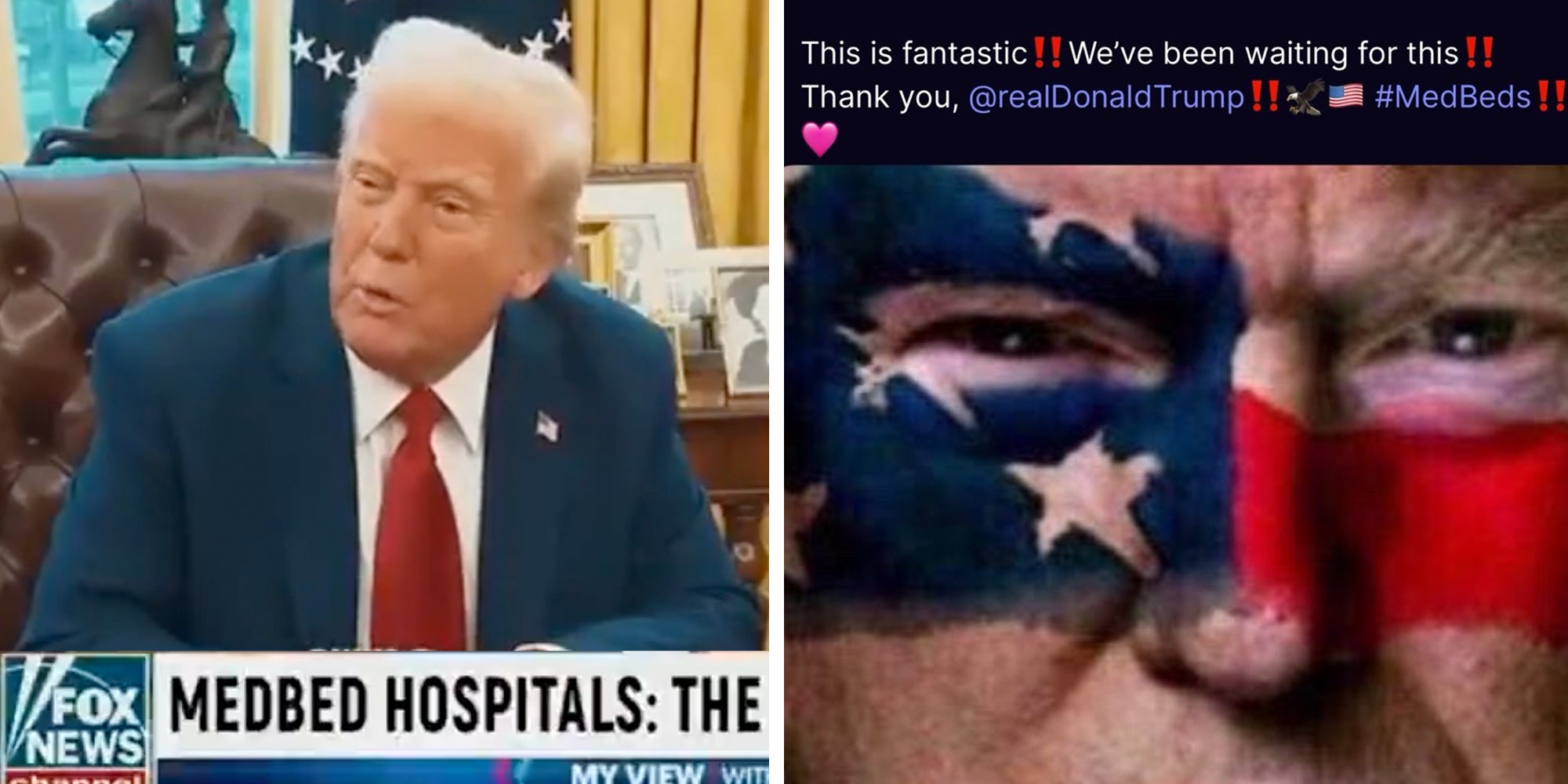

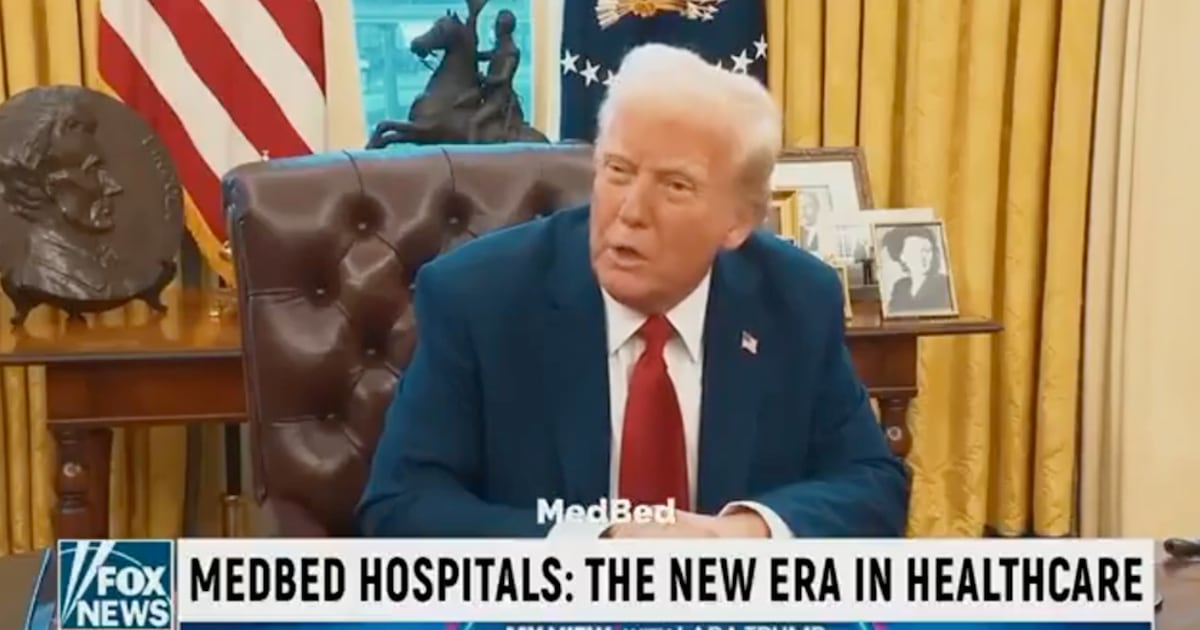

An AI-generated deepfake video featuring Donald Trump and Lara Trump promoted the false 'medbed' conspiracy theory, which claims that miraculous healthcare technology exists. The video, shared on Truth Social, misled viewers with fabricated endorsements from public figures, contributing to misinformation and potentially undermining trust in legitimate healthcare in the United States.[AI generated]