The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

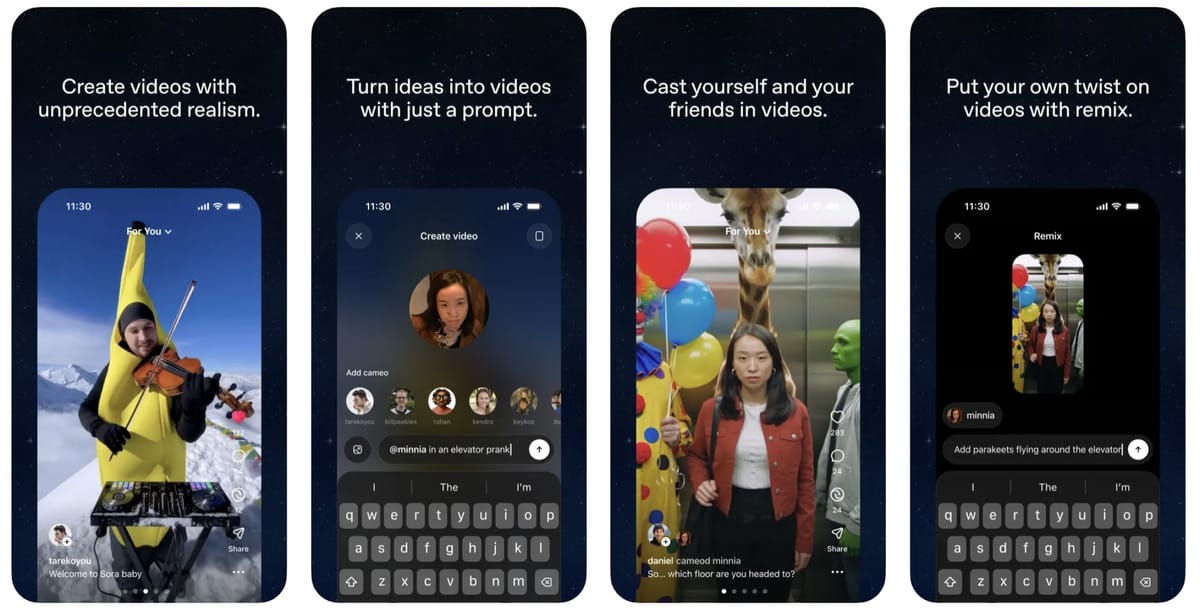

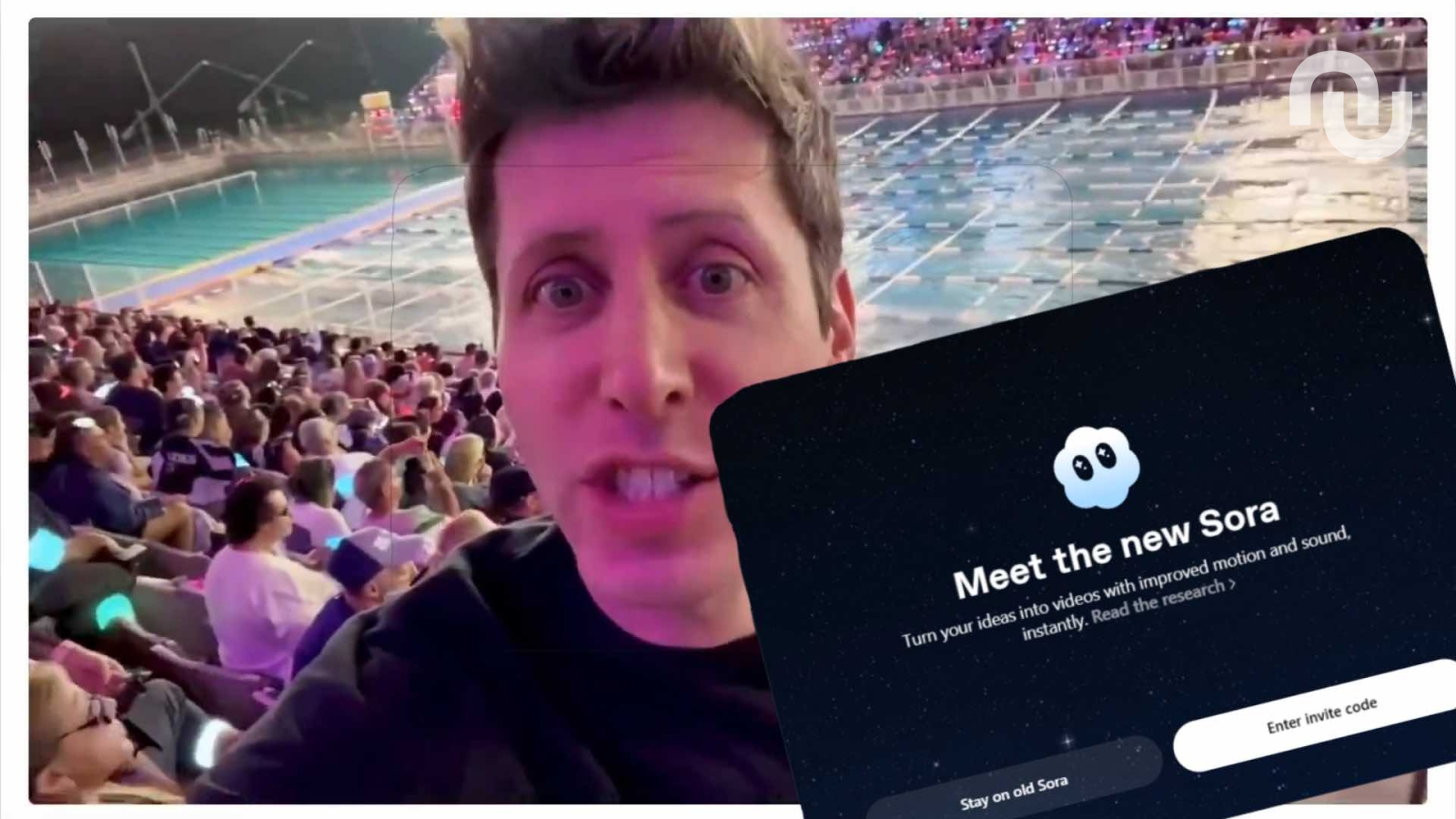

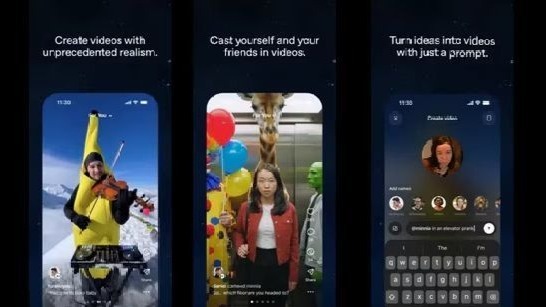

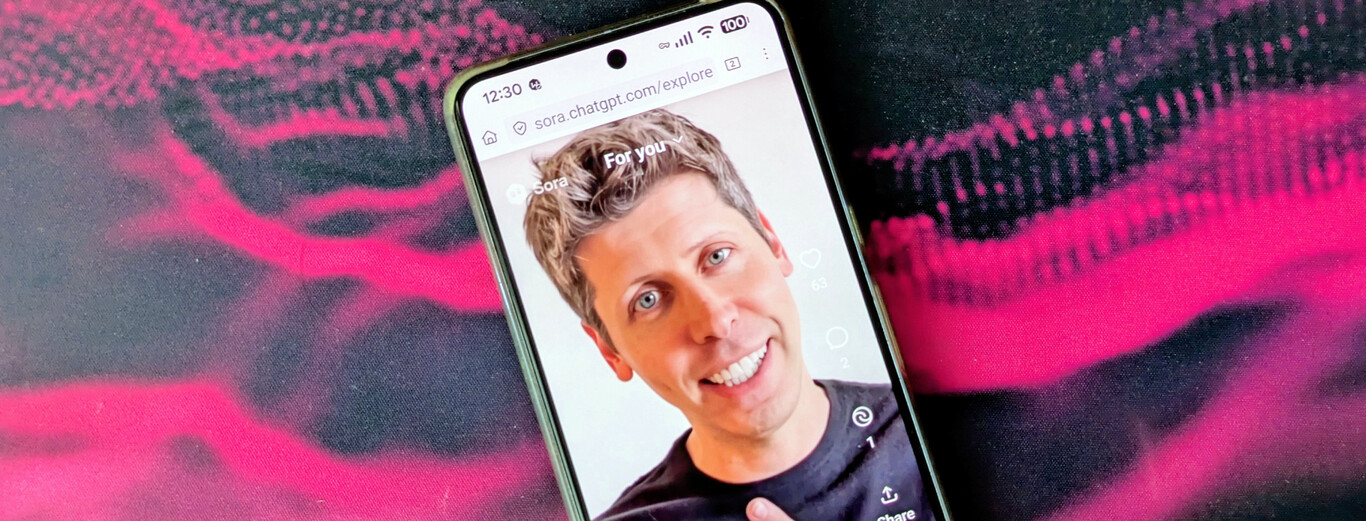

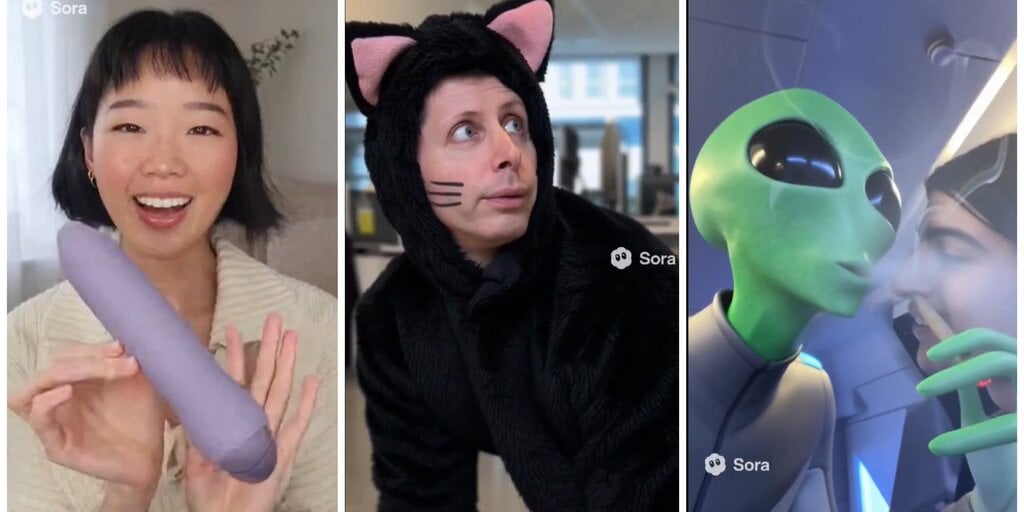

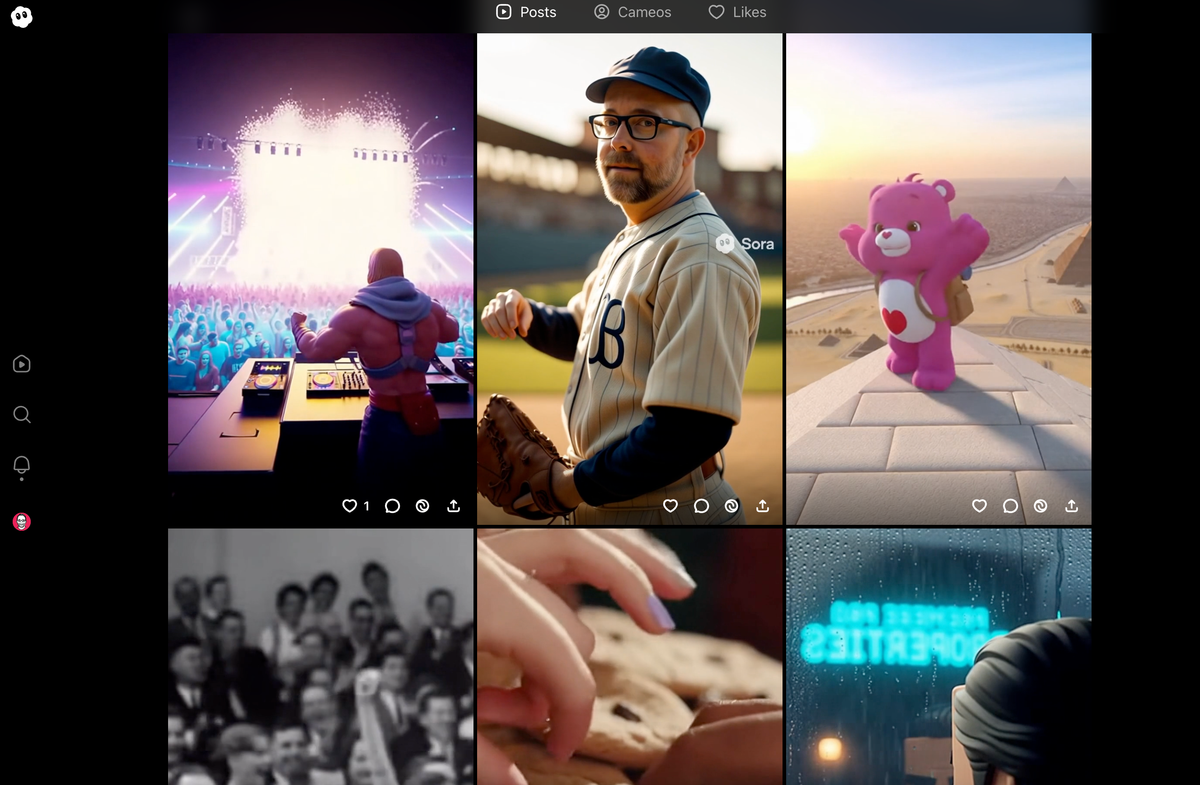

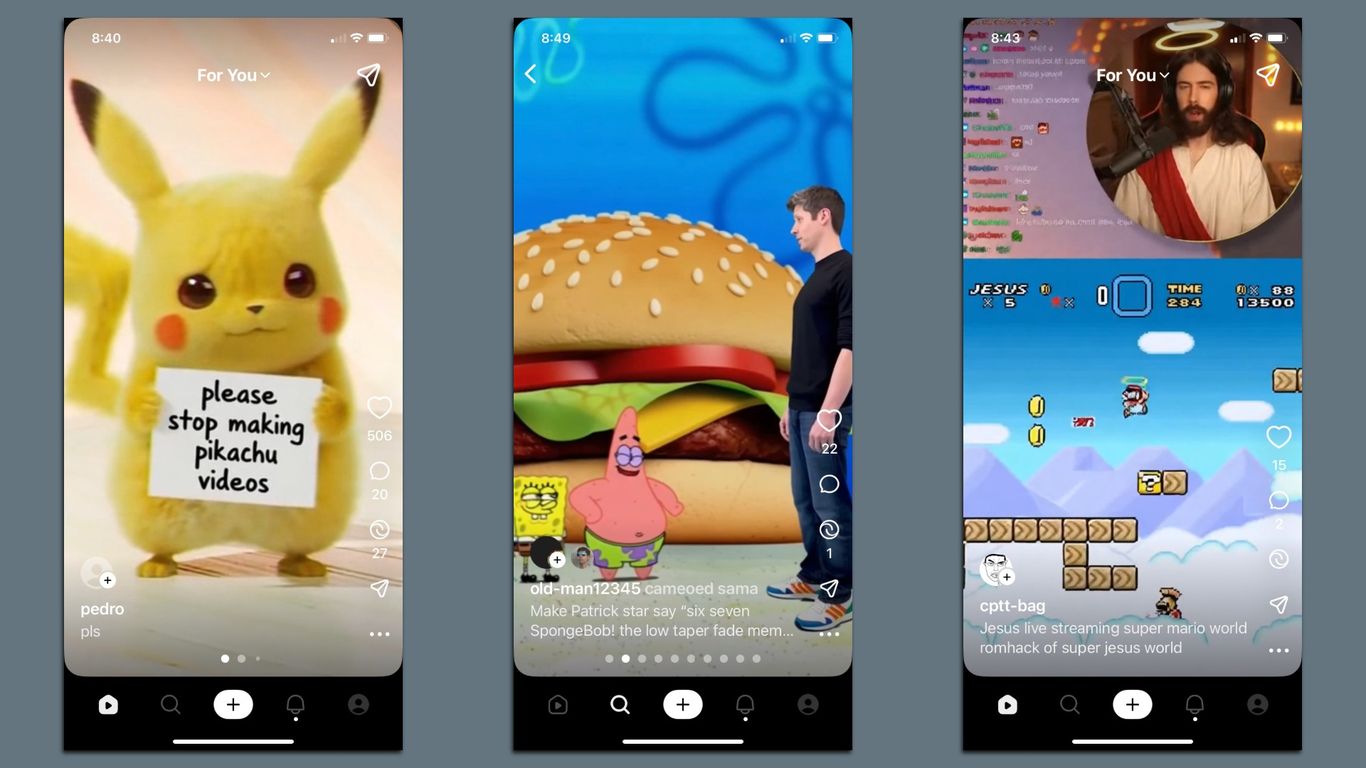

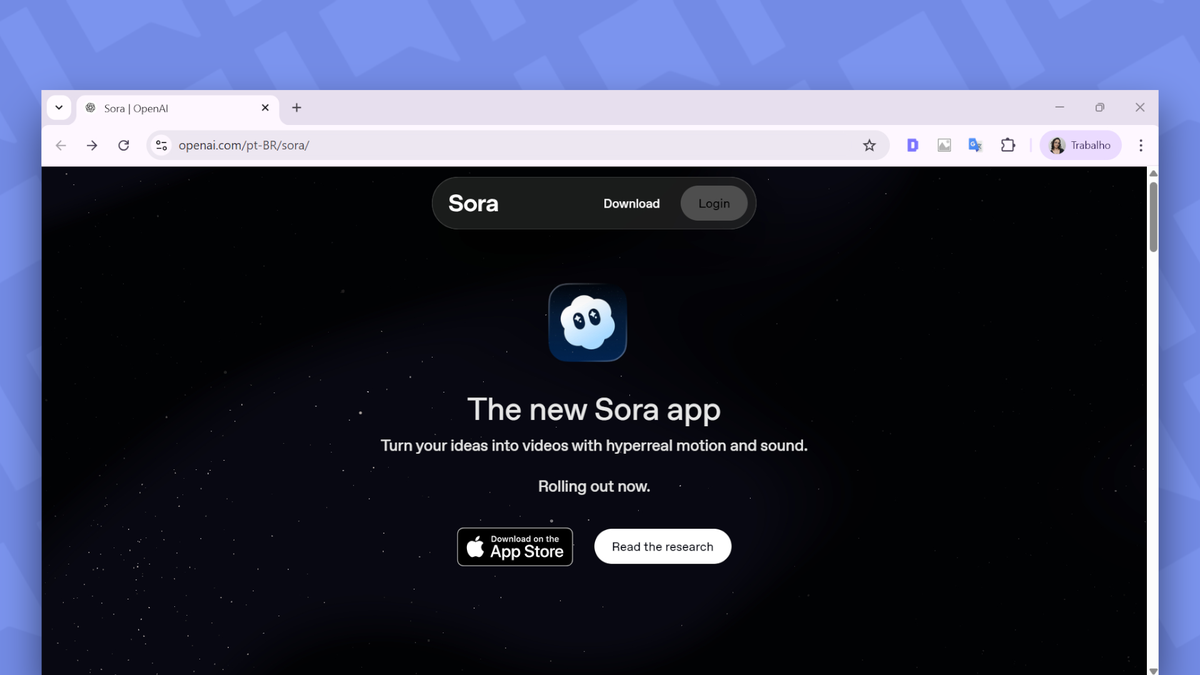

OpenAI is preparing to launch Sora 2, a TikTok-style app featuring only AI-generated videos. The app includes identity verification and allows the use of personal likenesses, raising concerns about potential misuse, privacy violations, and copyright infringement, since rights holders must opt out to prevent their content from being used. [AI generated]
