The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

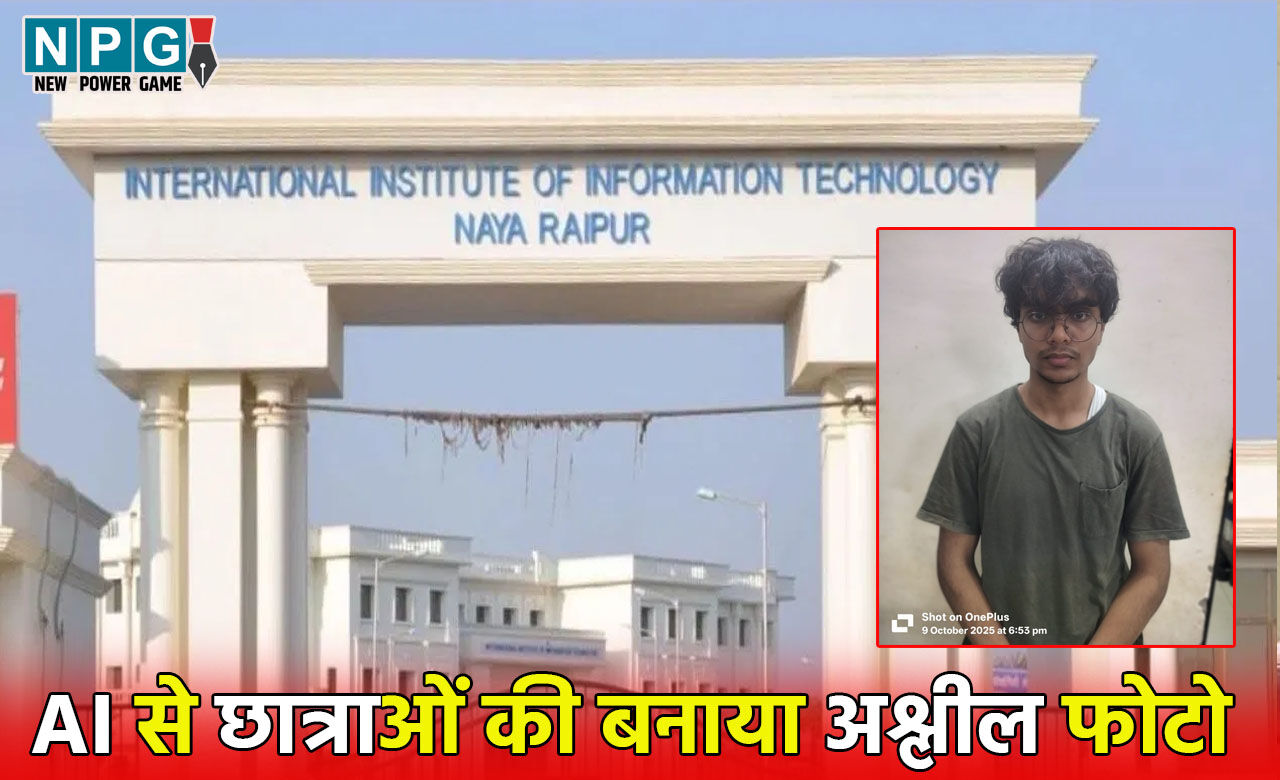

A third-year engineering student at IIIT Raipur, India, used AI tools to generate fake explicit images and videos of 36 female classmates by morphing their social media photos. The incident led to the student's suspension, police involvement, and an internal investigation, causing significant distress among the victims.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves the use of AI systems (AI-based image creation and editing apps) to produce harmful, obscene fake images of students, which directly violates the rights and dignity of the affected individuals. This constitutes a breach of fundamental rights and personal privacy, fulfilling the criteria for an AI Incident. The harm has already occurred, with police action and institutional suspension following the misuse of AI.[AI generated]
