The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

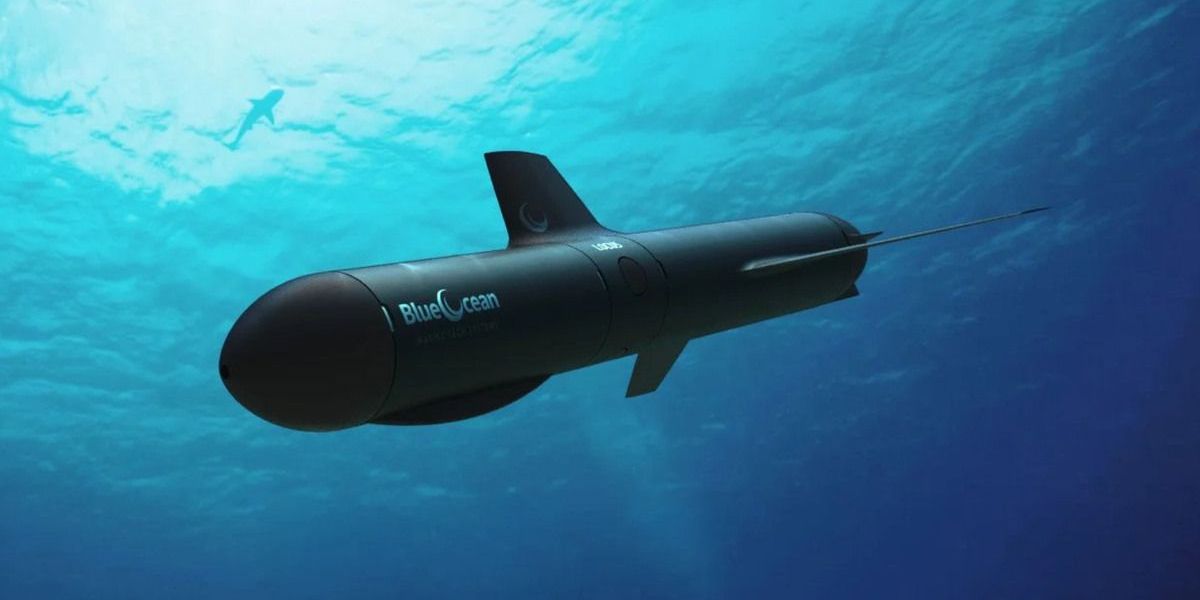

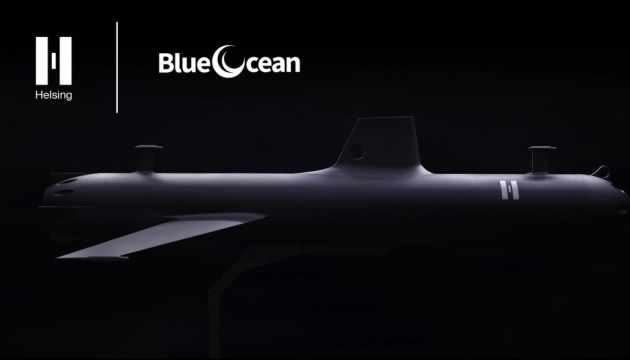

German defense startup Helsing has acquired Australian marine technology firm Blue Ocean to integrate Helsing's AI with Blue Ocean's autonomous underwater vehicles. The move aims to accelerate the development and mass production of AI-enabled underwater defense platforms, which raises potential future risks associated with autonomous military technologies. [AI generated]