The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

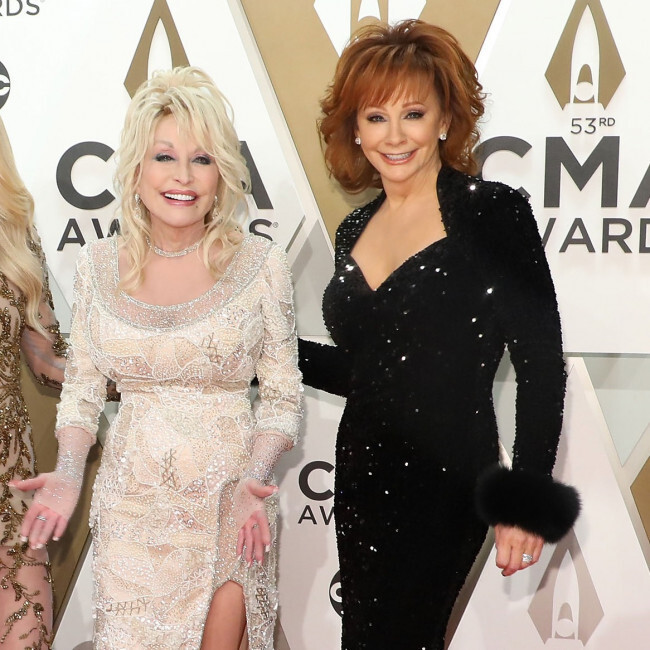

AI-generated images falsely depicting Dolly Parton on her deathbed with Reba McEntire circulated online, causing public concern and emotional distress. Both celebrities publicly refuted the hoax, highlighting the harm caused by AI-driven misinformation and the need for vigilance against such fabricated content.[AI generated]
