The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

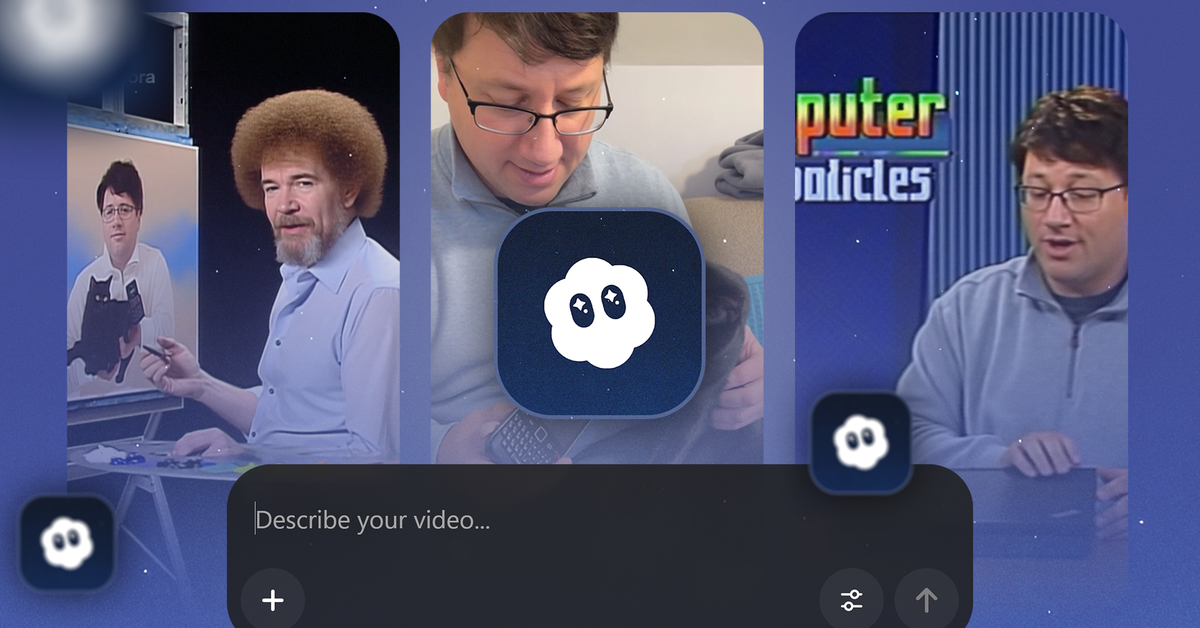

OpenAI's Sora app has enabled the mass creation and distribution of hyper-realistic AI-generated videos, including deepfakes of public figures and deceased celebrities. This has led to widespread misinformation, identity misuse, emotional distress for families, and erosion of public trust, with safety measures proving insufficient to prevent harm. [AI generated]