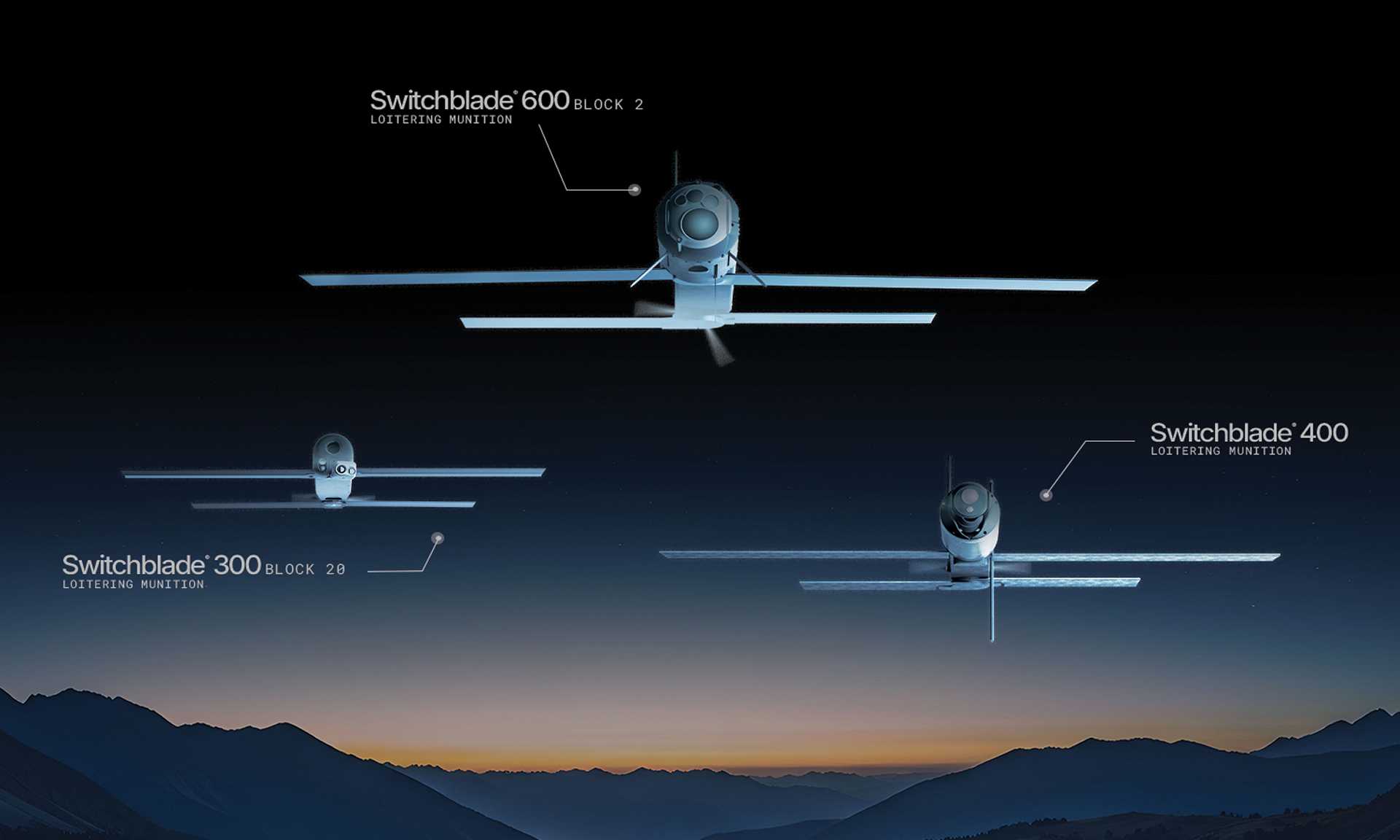

The Switchblade loitering munitions incorporate AI features such as automated target recognition and autonomous control, which qualifies them as AI systems. The article focuses on expanding production capacity to thousands of units per year, indicating a significant increase in availability and potential use. The article reports no actual harm, so the event is not an AI Incident. However, deploying AI-enabled autonomous weapons inherently carries risks of injury, death, and broader harm to communities, and the development and mass production of these systems could plausibly lead to AI Incidents. The event is also not merely Complementary Information, because its main focus is the production scale and capabilities that imply future risk, not responses or ecosystem context. Therefore, the classification is AI Hazard.