The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

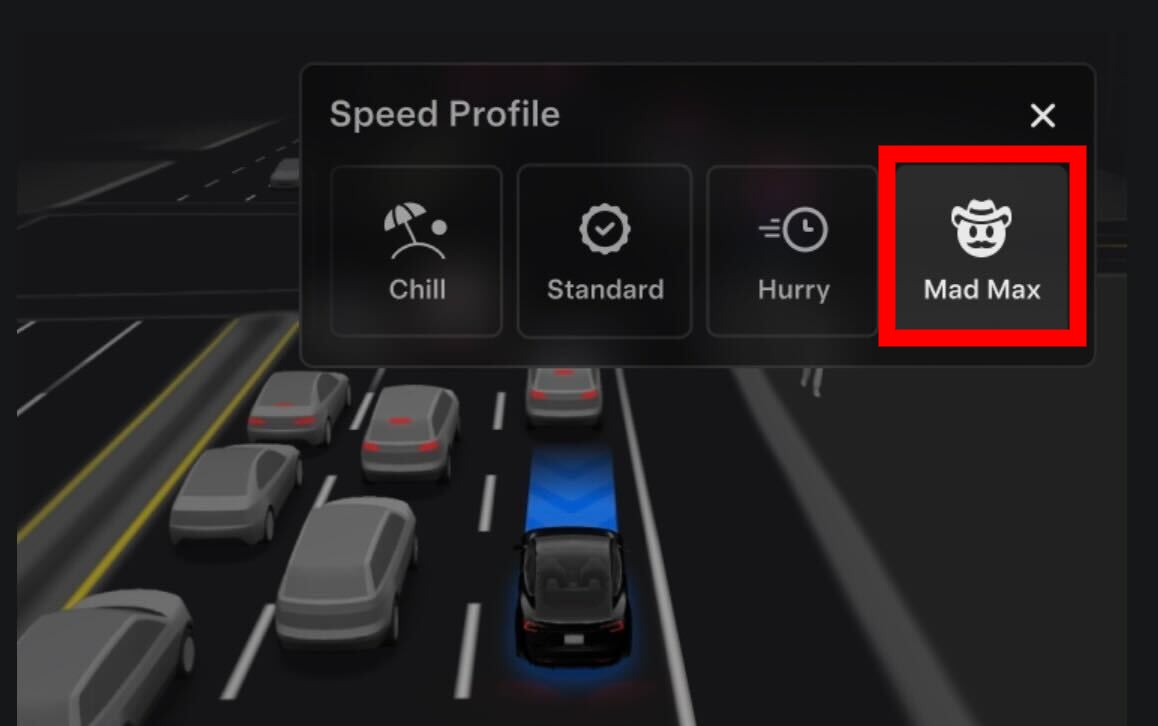

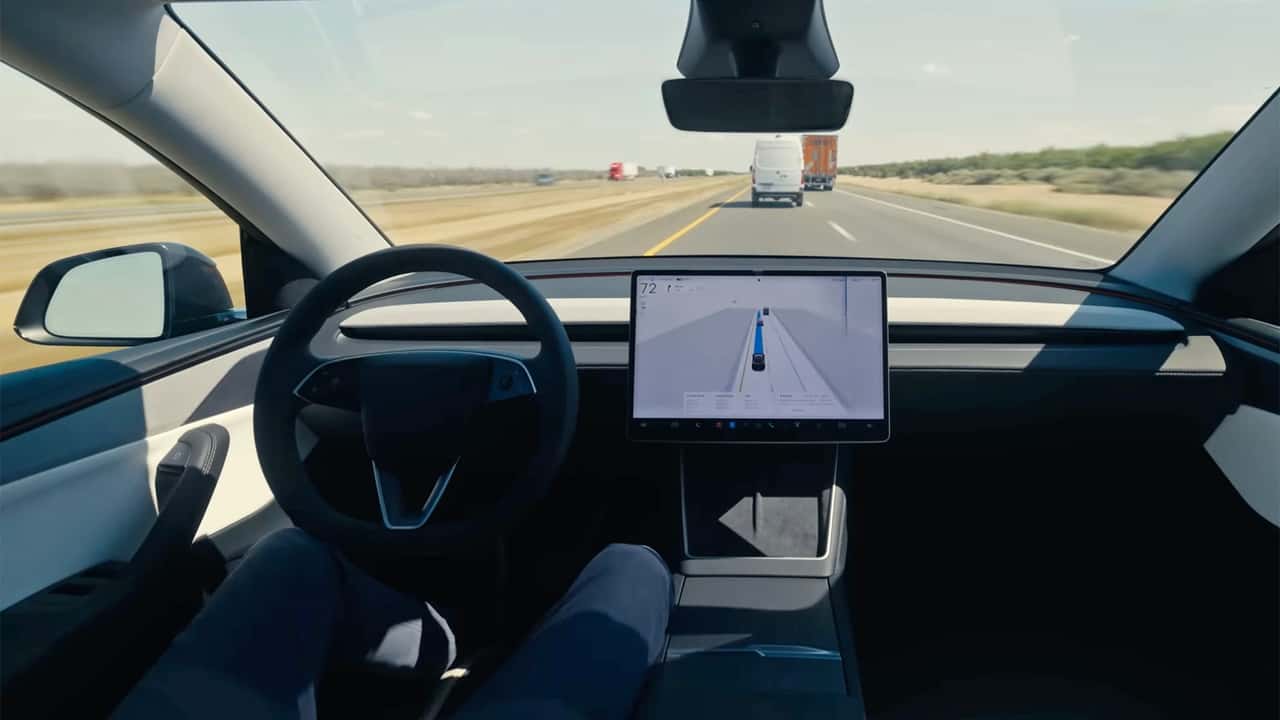

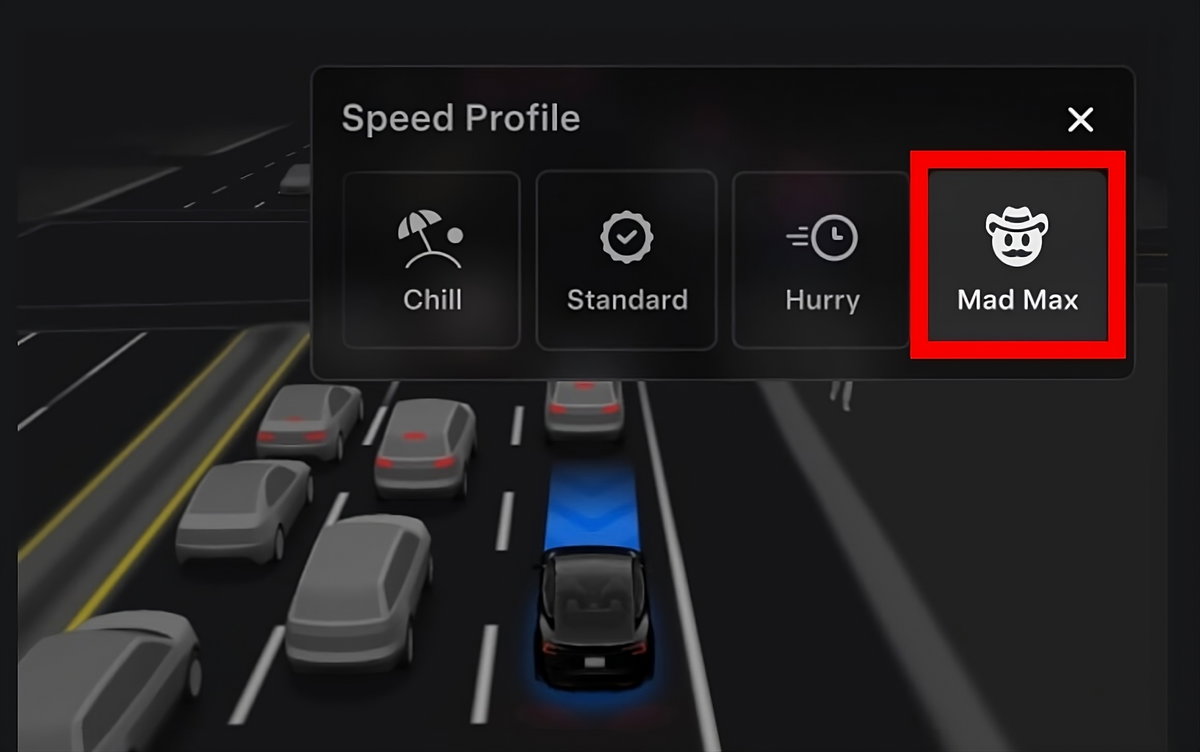

Tesla released a new 'Mad Max' mode for its Full Self-Driving (FSD) AI system, enabling vehicles to drive aggressively, frequently exceed speed limits, and perform risky maneuvers. This has led to regulatory investigations and at least one wrongful death lawsuit, raising significant safety and legal concerns in the United States. [AI generated]