The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

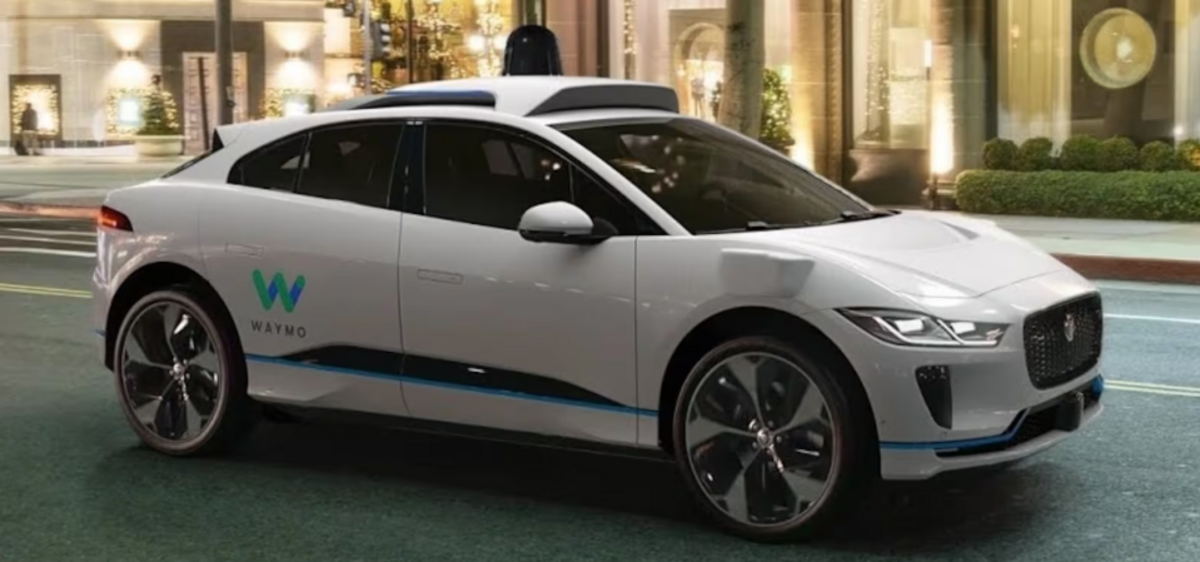

Waymo's autonomous vehicles have caused community disruption in San Francisco due to noise and erratic driving, prompting resident protests. Separately, in Atlanta, a Waymo robotaxi illegally passed a stopped school bus that was unloading children, triggering a federal safety investigation and raising concerns about the AI system's reliability and about public safety. [AI generated]