The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

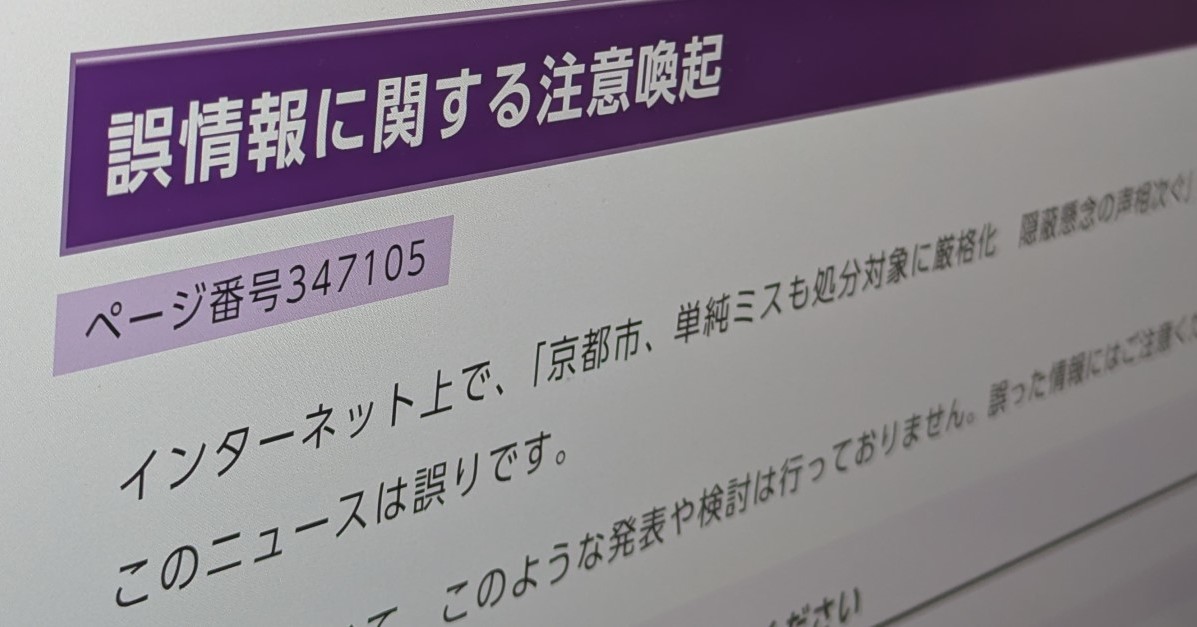

A generative AI system on X (formerly Twitter) created and distributed a false news summary that misattributed a policy of Nagahama City to Kyoto City. Kyoto City requested the removal of the misinformation; X promptly deleted it and apologized. The incident caused public confusion and reputational harm in Japan.[AI generated]