The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

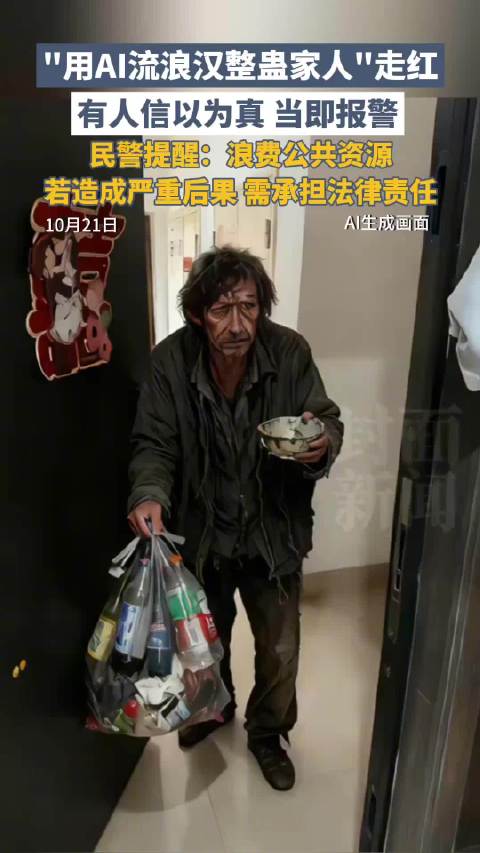

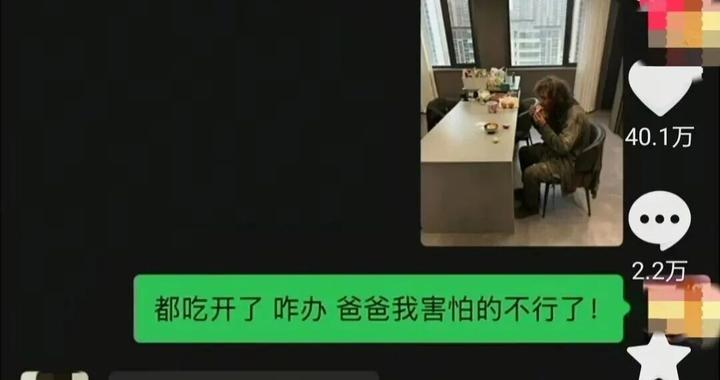

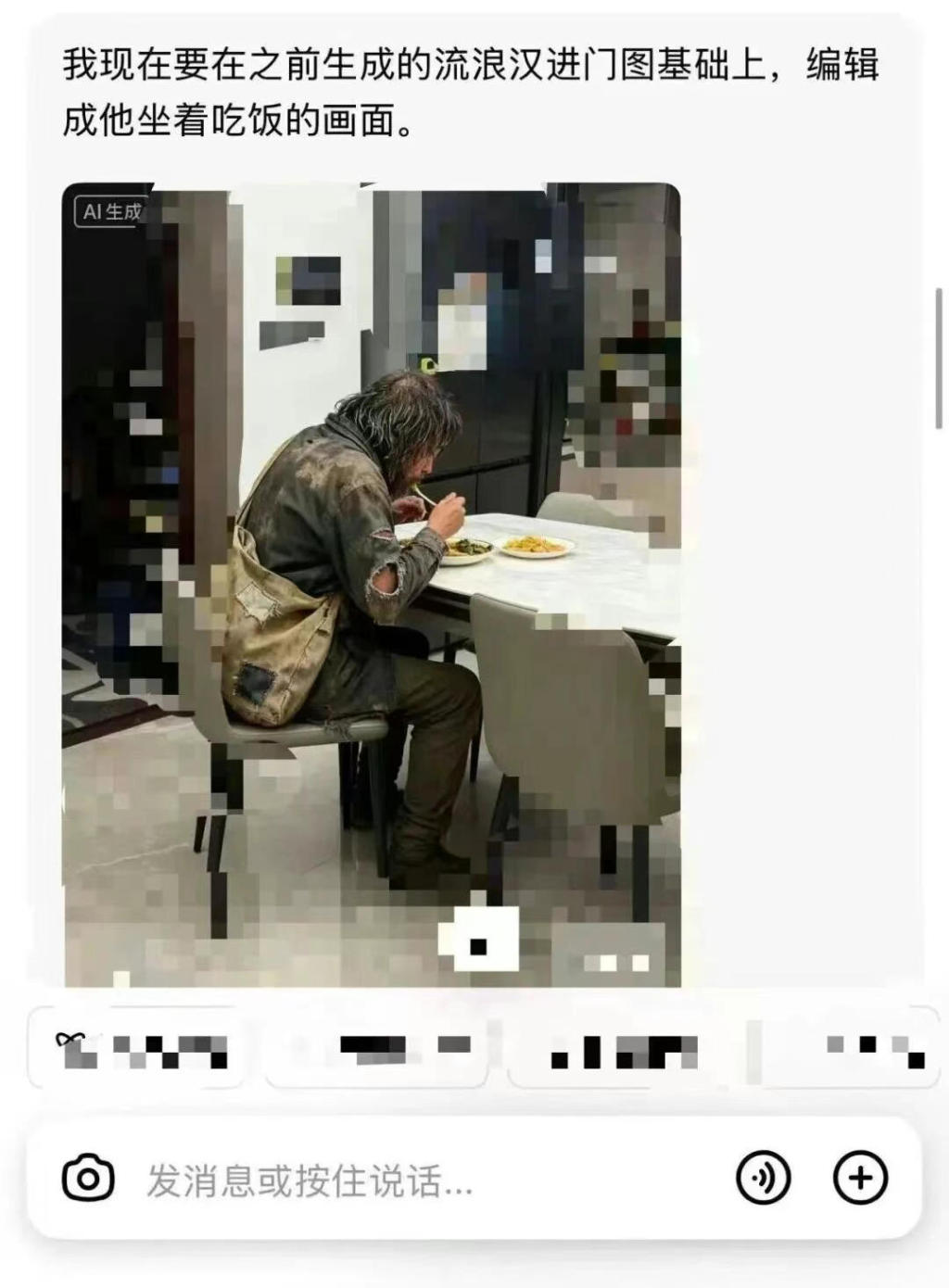

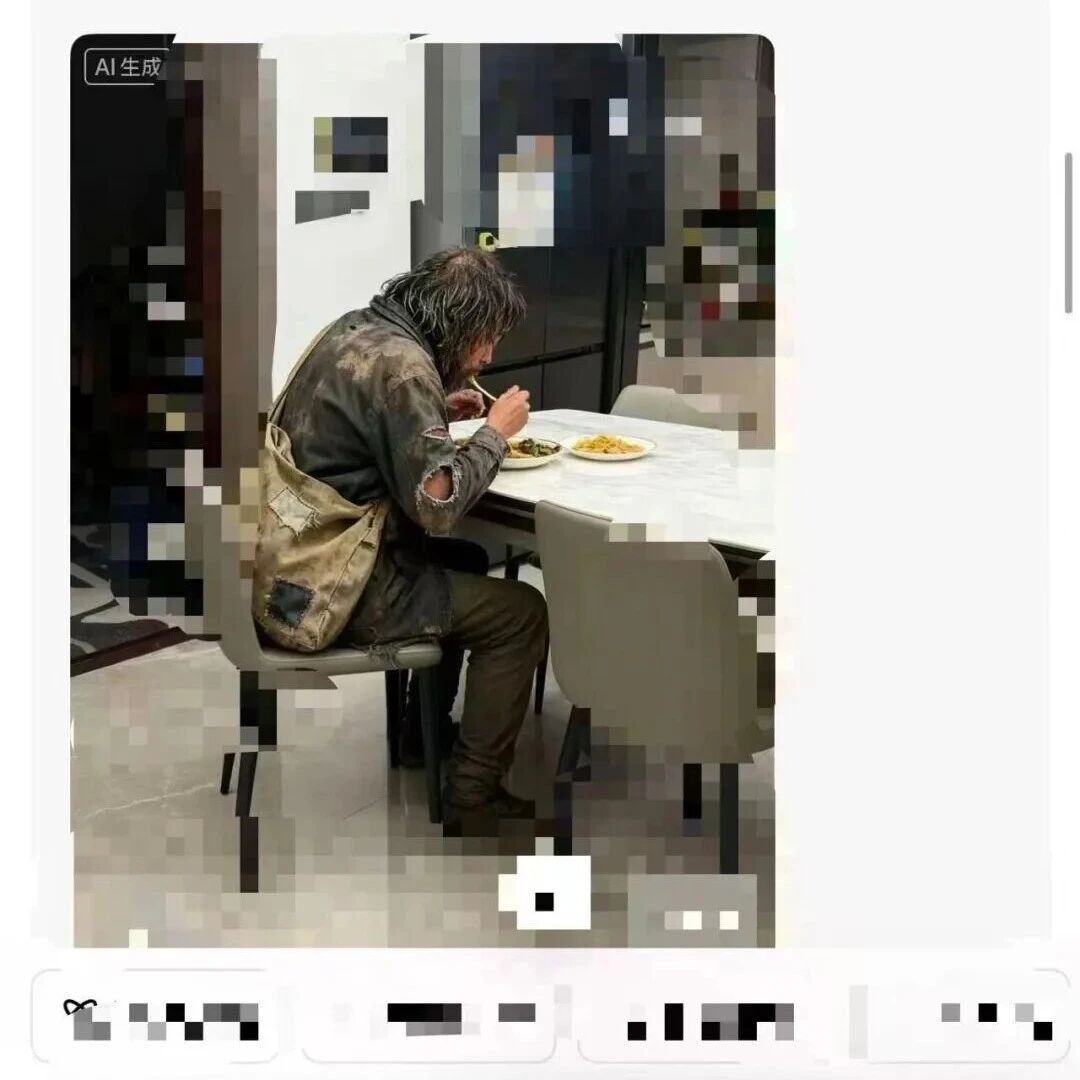

In China, individuals used AI to generate realistic images of a homeless person entering a home as a prank, causing false alarms, police mobilization, and wasted public resources. The misuse of AI for such pranks has prompted legal warnings, public concern, and calls for stricter regulation to prevent further harm. [AI generated]