The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

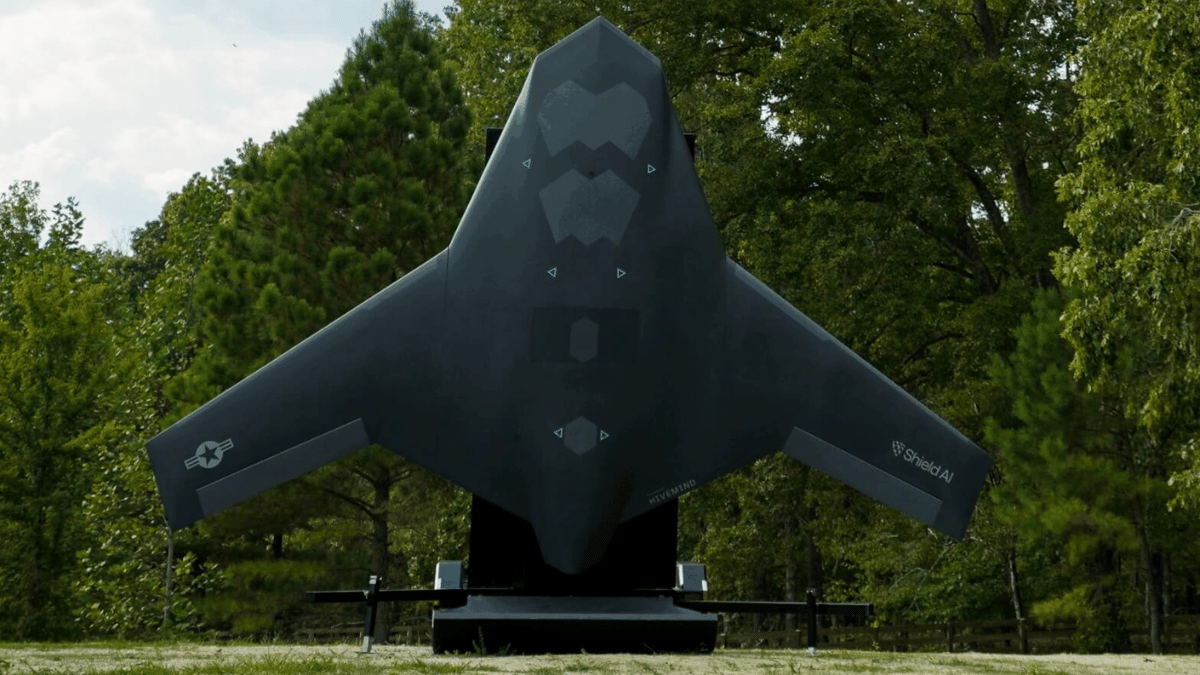

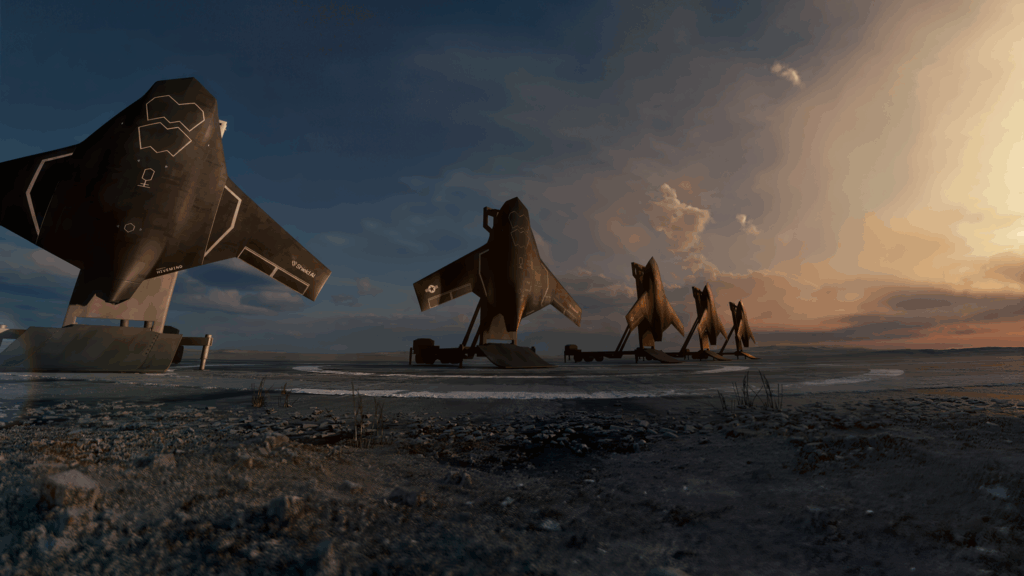

Shield AI has unveiled the X-BAT, an AI-piloted vertical takeoff and landing (VTOL) combat drone designed for autonomous military operations. Powered by the Hivemind autonomy software, the X-BAT can execute missions independently or alongside manned aircraft. Its autonomous capabilities raise the risk of future harm in warfare. [AI generated]