The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

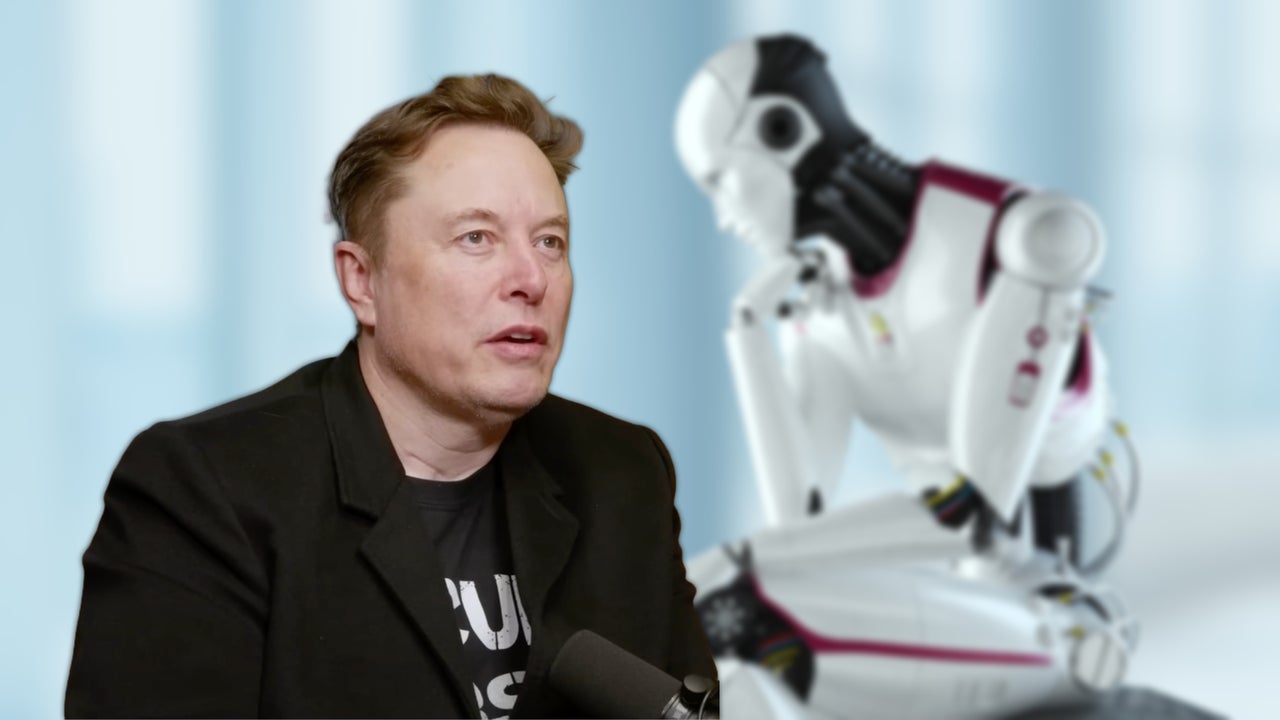

Tesla's autonomous Robotaxi service in Austin, Texas, has had at least four reported crashes since its launch, even though safety monitors were present in the vehicles. The incidents, reported to the NHTSA, highlight ongoing safety concerns as Tesla expands its driverless service and plans to remove the safety monitors in the future. [AI generated]
