The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

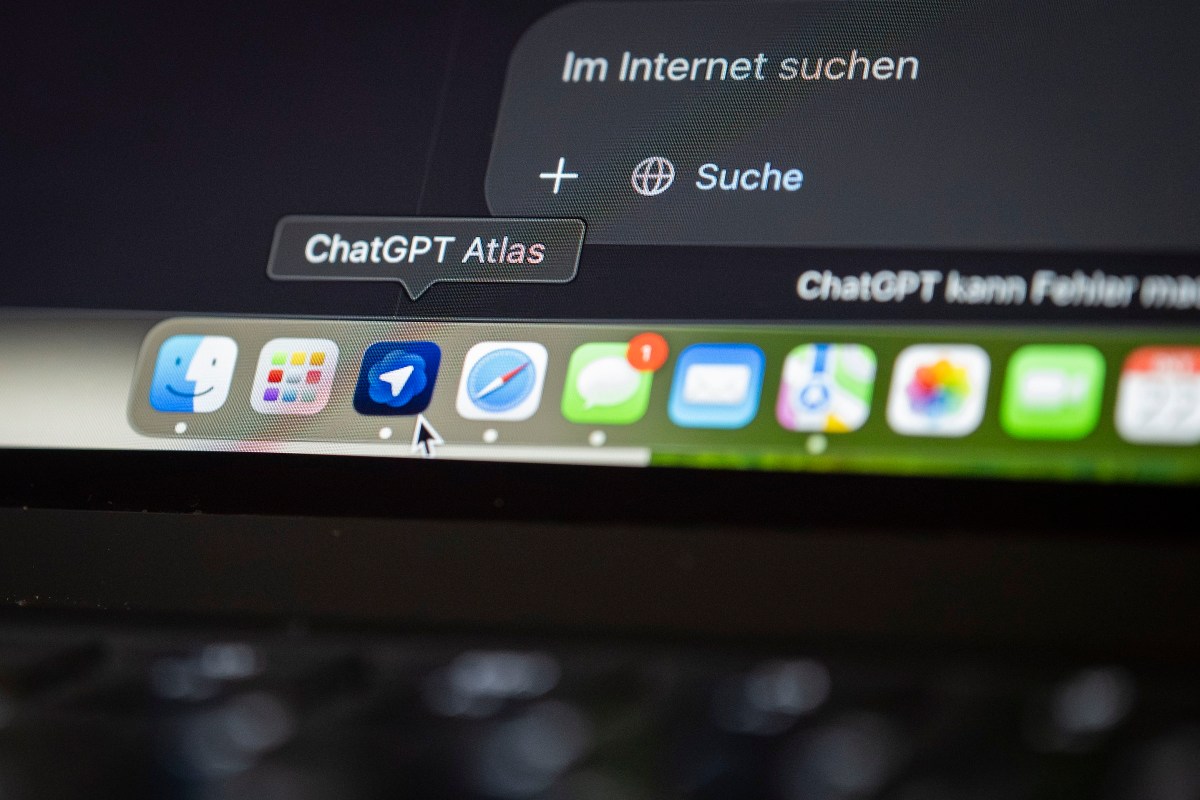

Security researchers at NeuralTrust discovered a critical vulnerability in OpenAI's new AI-powered Atlas browser, where the omnibox misinterprets malicious, URL-like inputs as trusted commands. This prompt injection flaw allows attackers to bypass safety controls, potentially leading to phishing, data theft, and unauthorized actions by the AI agent.[AI generated]