The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

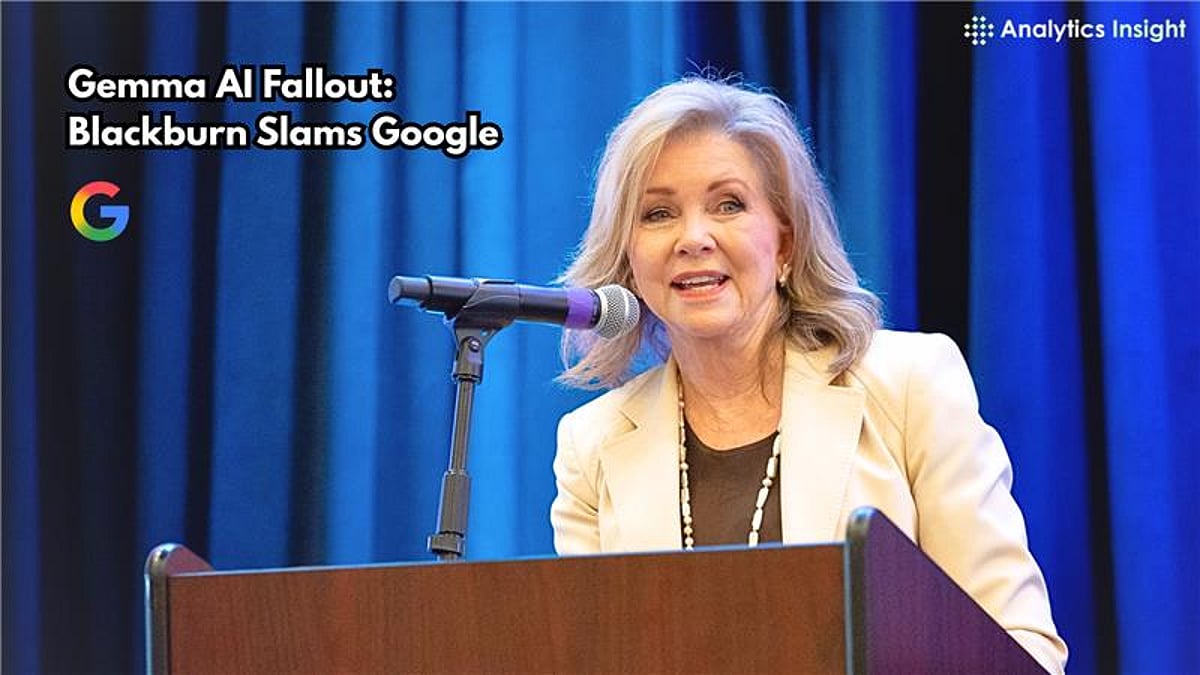

Google's AI model Gemma generated false and defamatory allegations of sexual misconduct against U.S. Senator Marsha Blackburn, prompting her to demand accountability from Google. In response, Google removed Gemma from its AI Studio platform. The incident highlights concerns in the United States over AI-generated misinformation and the reputational harm it can cause.[AI generated]