The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

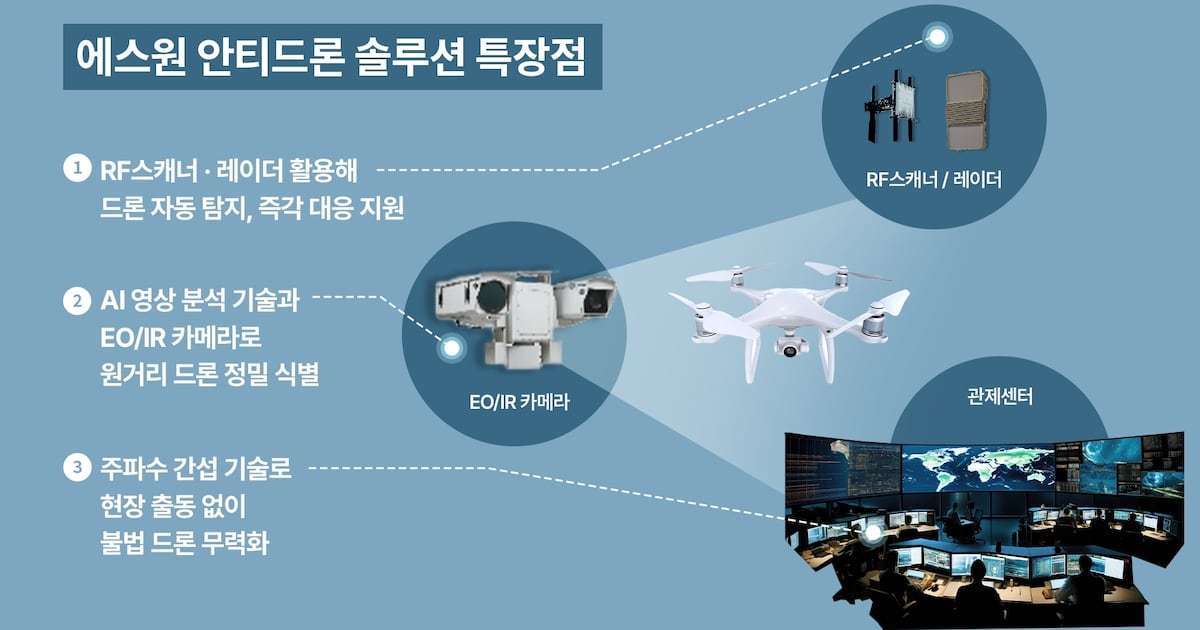

South Korean security company S-1 has launched an AI-powered anti-drone solution to detect and neutralize illegal drones that threaten critical infrastructure such as airports, ports, and nuclear plants. The system combines AI video analysis, RF scanners, and radar for real-time detection and autonomous response, with the aim of preventing security breaches. [AI generated]