The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

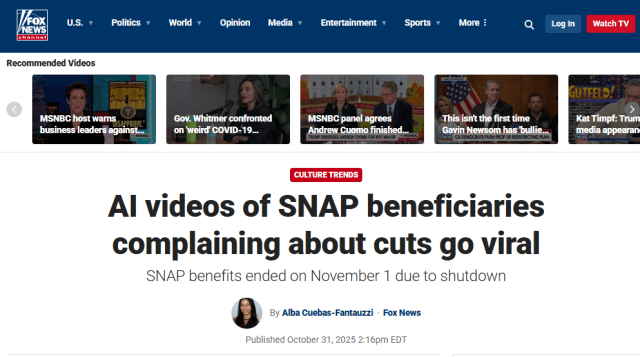

AI-generated deepfake videos depicting Black women misusing SNAP benefits circulated widely on social media, spreading racist stereotypes and misinformation. Fox News mistakenly reported these fabricated videos as real, further amplifying the false narratives before issuing a correction. The incident highlights the harm caused by AI-generated misinformation and media failures in the United States.[AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves an AI system (the video-generation app Sora) that generated harmful content spreading racist misinformation and stereotypes. This content is actively causing harm by shaping public perception negatively, reinforcing racial biases, and contributing to social stigma against SNAP recipients, which constitutes harm to communities and a violation of rights. It therefore qualifies as an AI Incident, because the use of the AI system has directly led to significant harm as defined in the framework.[AI generated]