The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

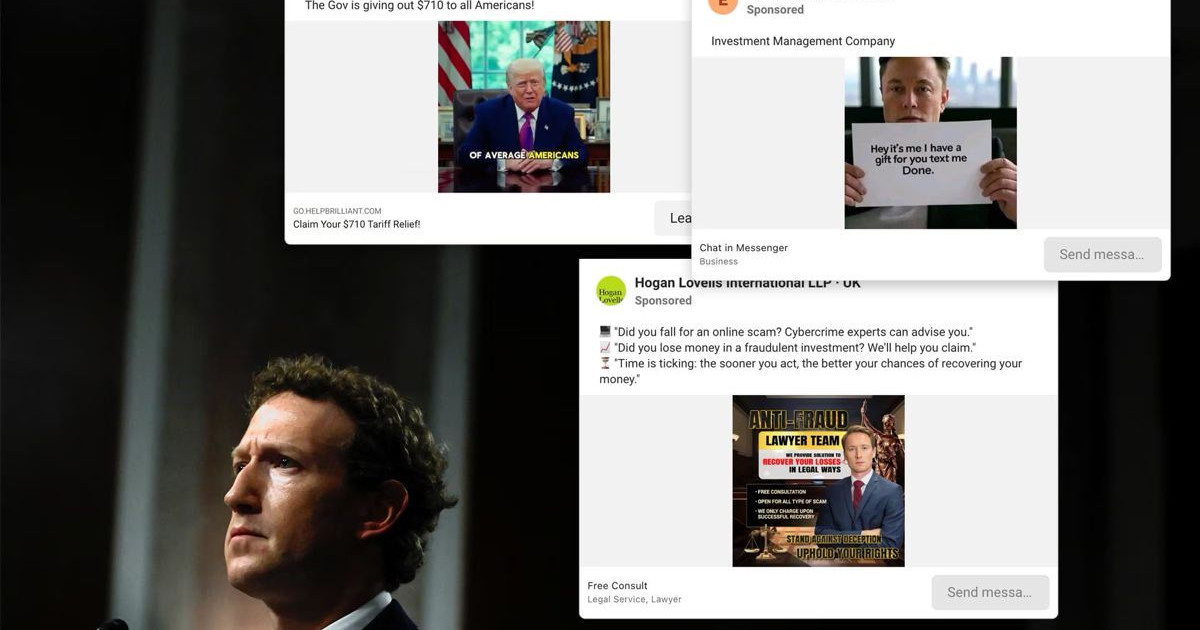

Internal documents reveal that Meta's AI-driven ad systems failed to block billions of scam and illegal ads on Facebook, Instagram, and WhatsApp, exposing users to fraud and prohibited products. The systems block an ad only when they are at least 95% certain it is fraudulent, so many harmful ads get through. Such ads reportedly generate up to 10% of Meta's annual revenue. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves Meta's automated advertising systems, which are AI systems that predict and classify the risk posed by ads. The harm includes exposing users to scams, illegal products, and fraudulent content, which harms communities and violates user rights. The harm is realized, not merely potential, as evidenced by regulatory investigations and user losses. Meta's internal documents show the AI systems' role in enabling these harms through insufficient blocking thresholds and business decisions. Hence, this qualifies as an AI Incident because the use and malfunction (or underperformance) of the AI systems caused direct and indirect harm. [AI generated]