The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

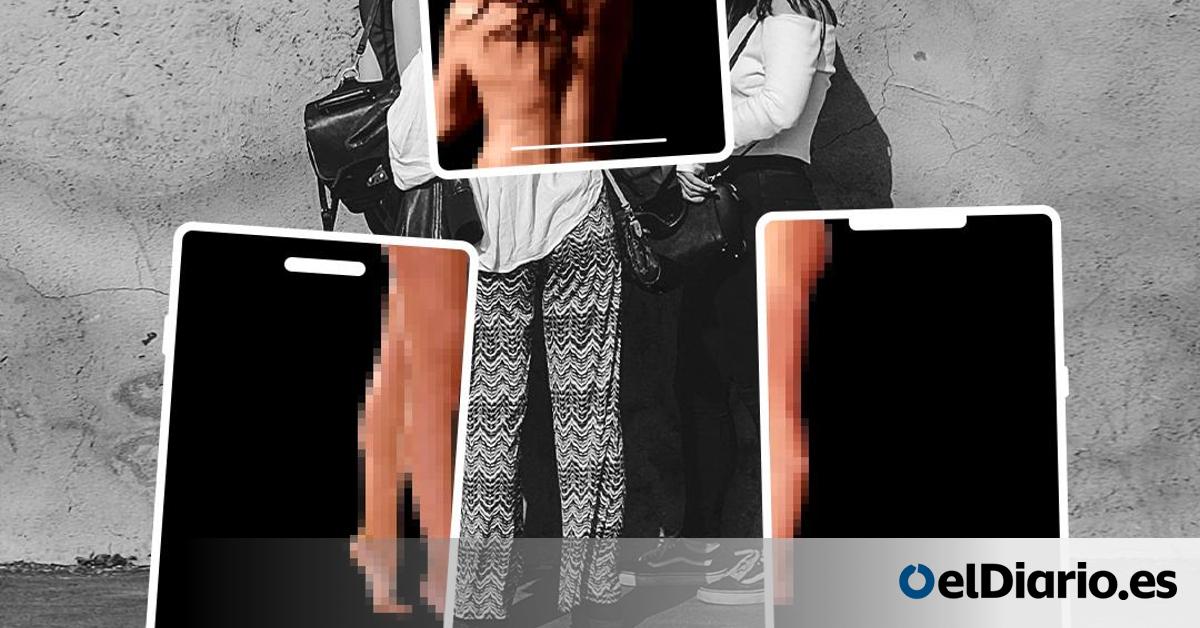

In Almendralejo, Spain, minors used AI deepfake technology to create and disseminate fake nude images of adolescent girls without consent. The Spanish Data Protection Agency fined those responsible, marking the country's first sanction for AI-generated sexual content involving minors and highlighting the legal and ethical risks of AI misuse.[AI generated]