The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

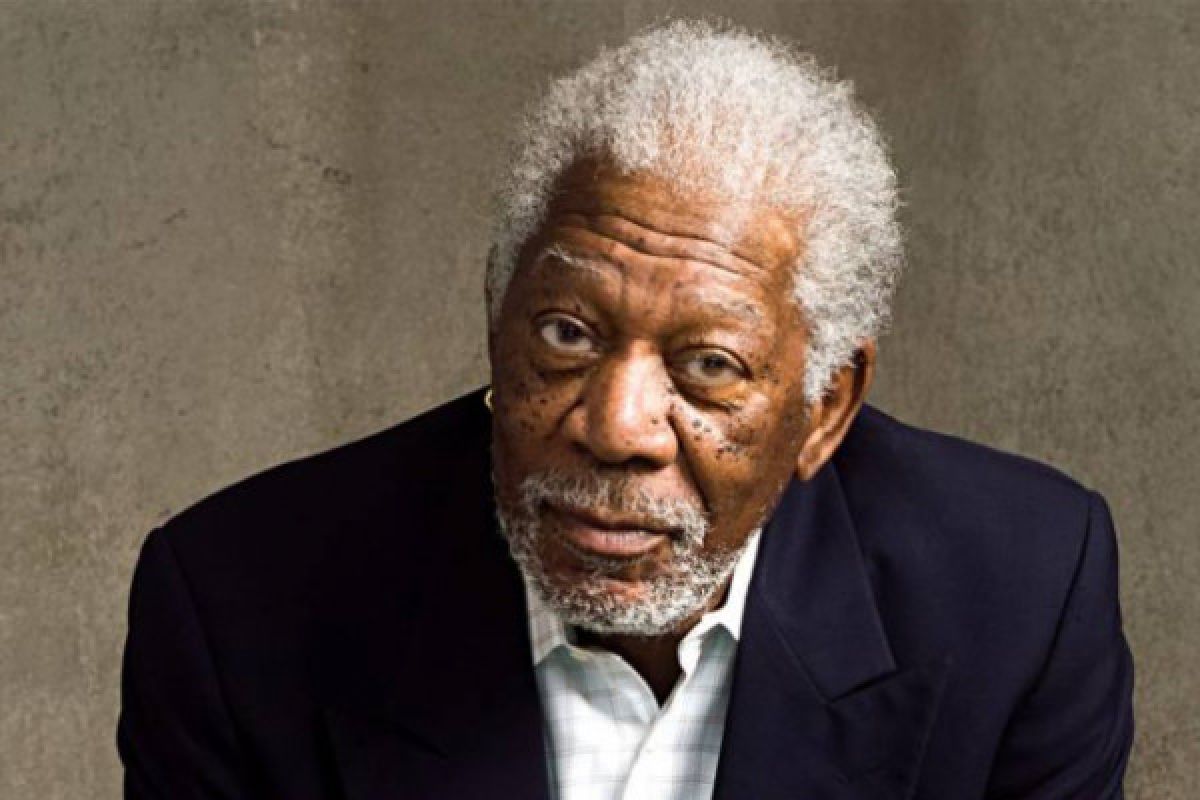

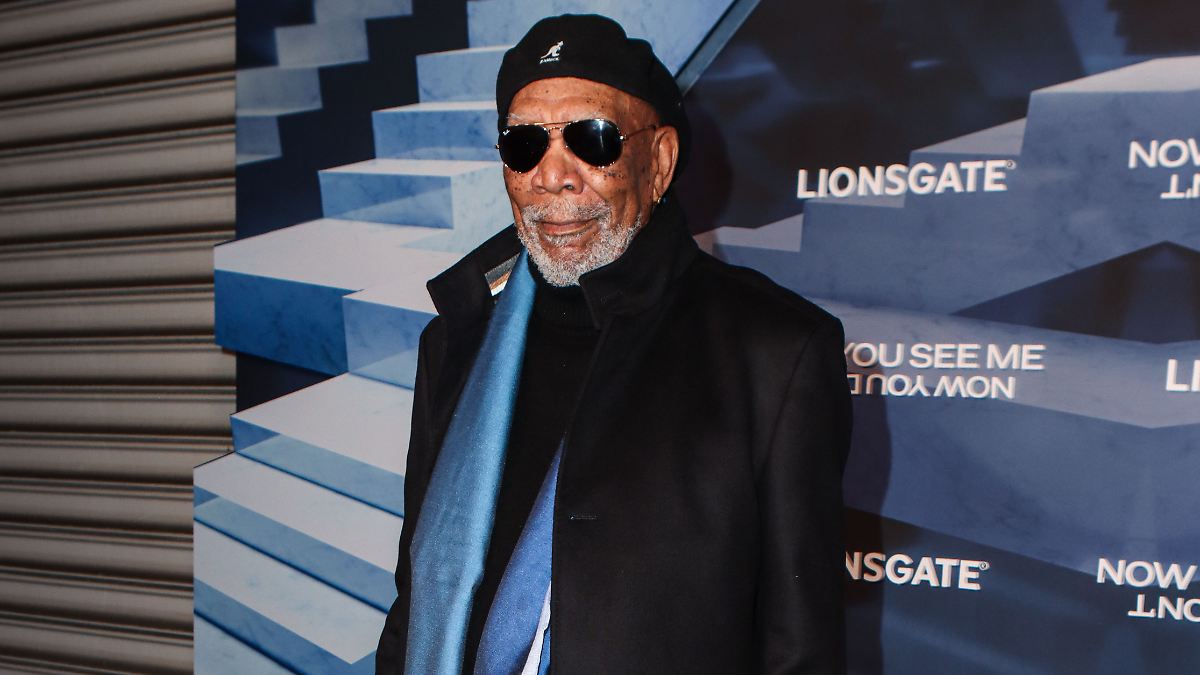

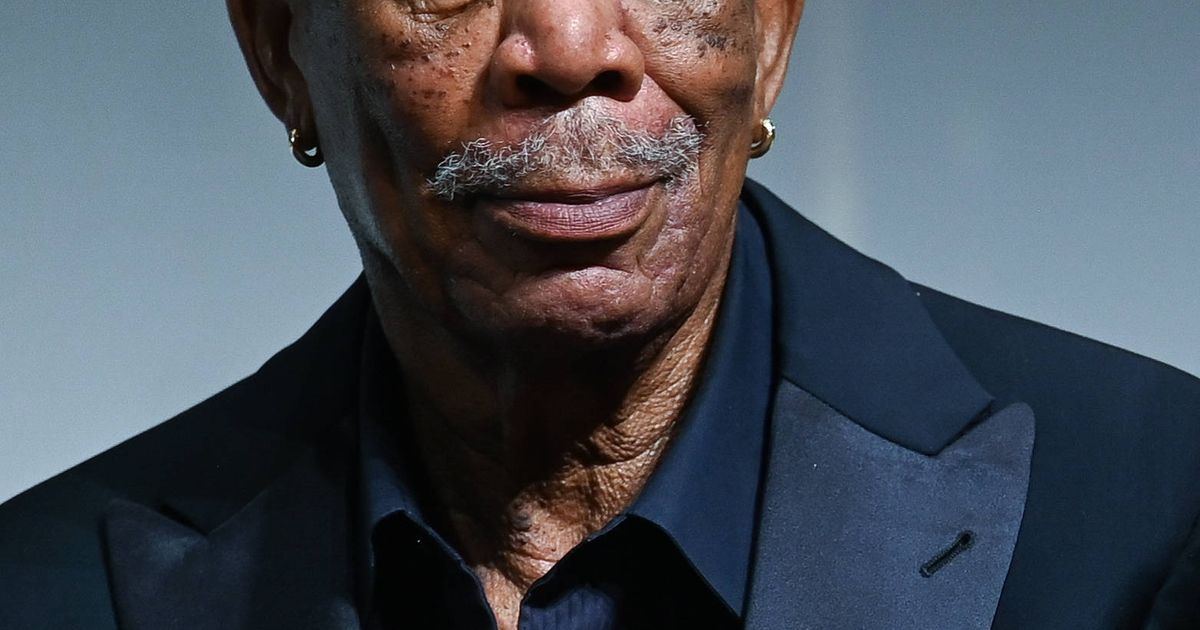

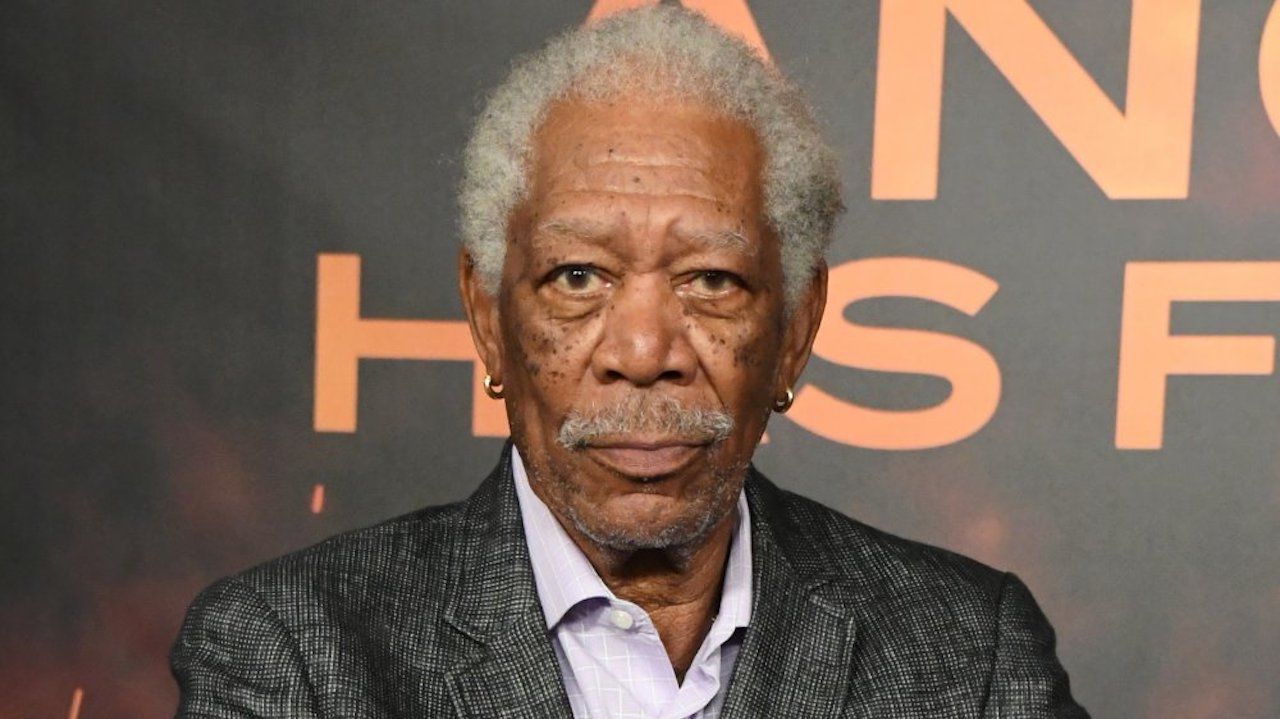

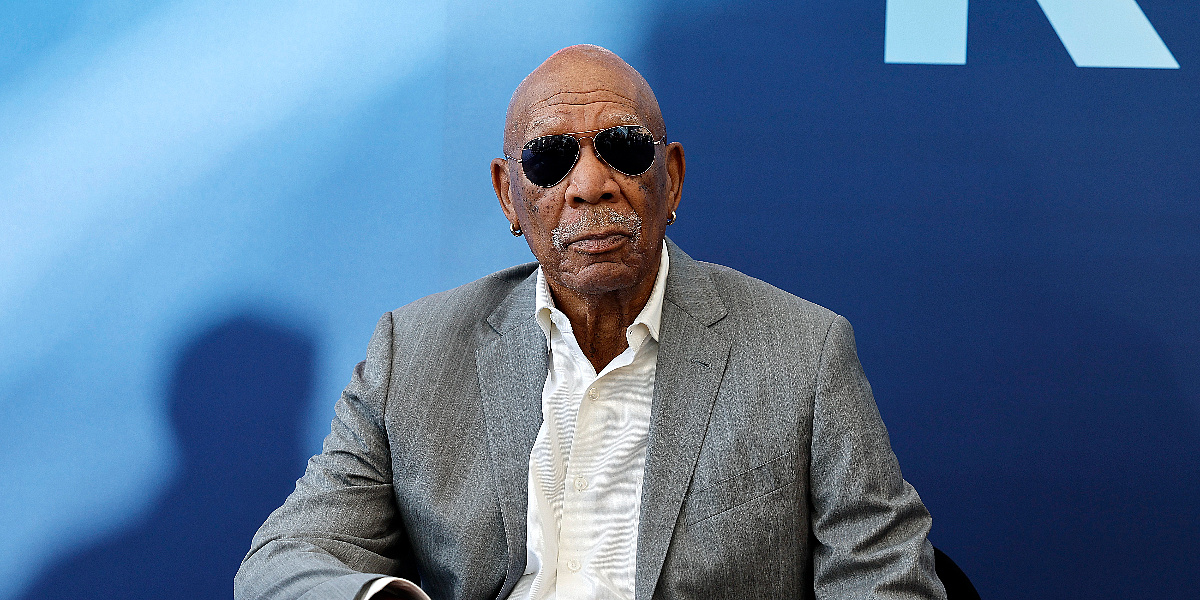

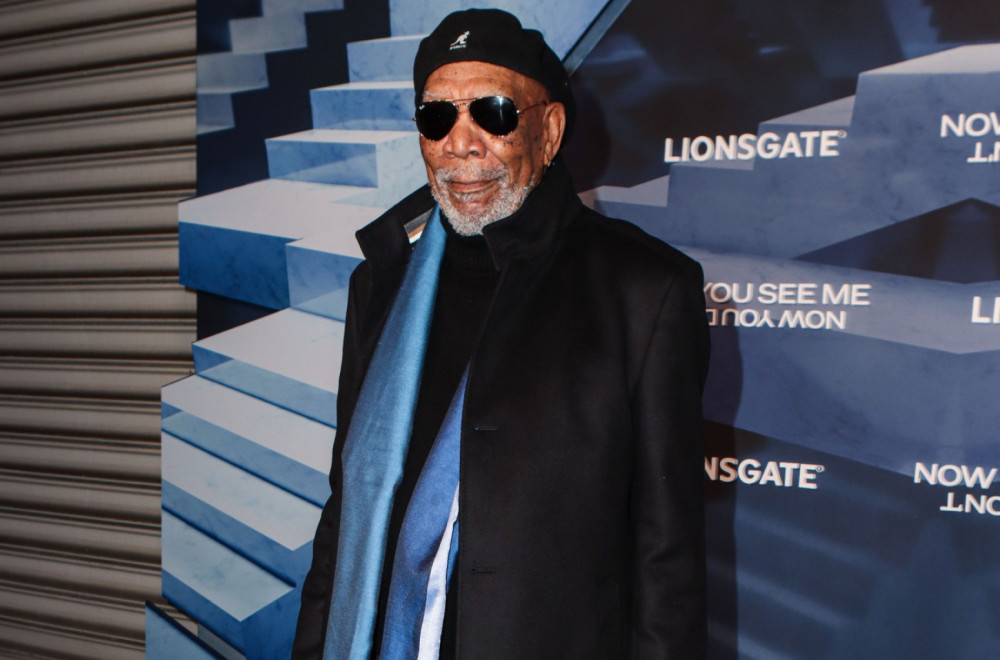

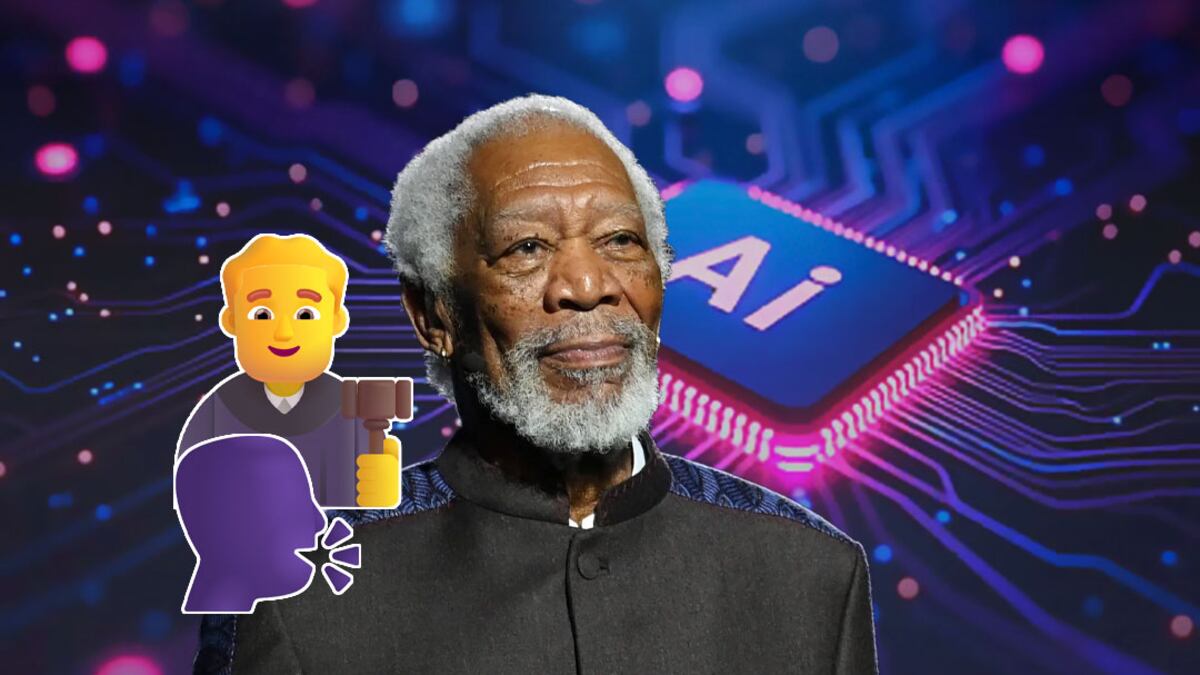

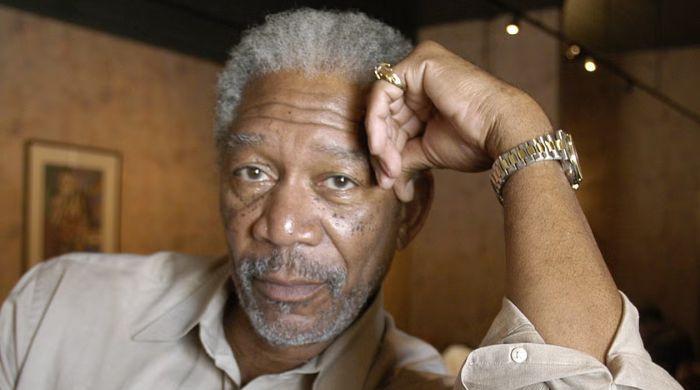

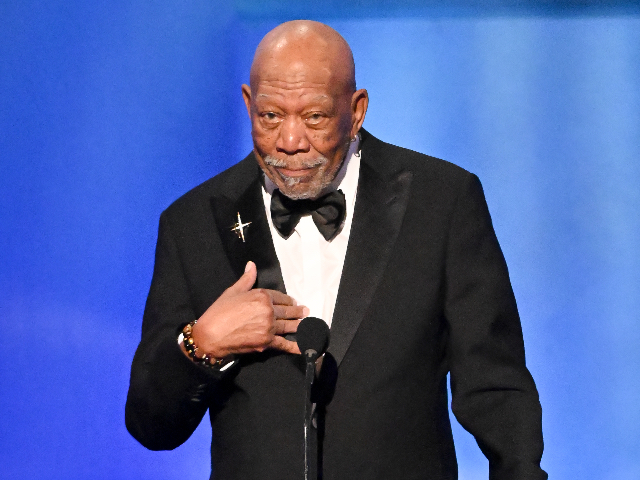

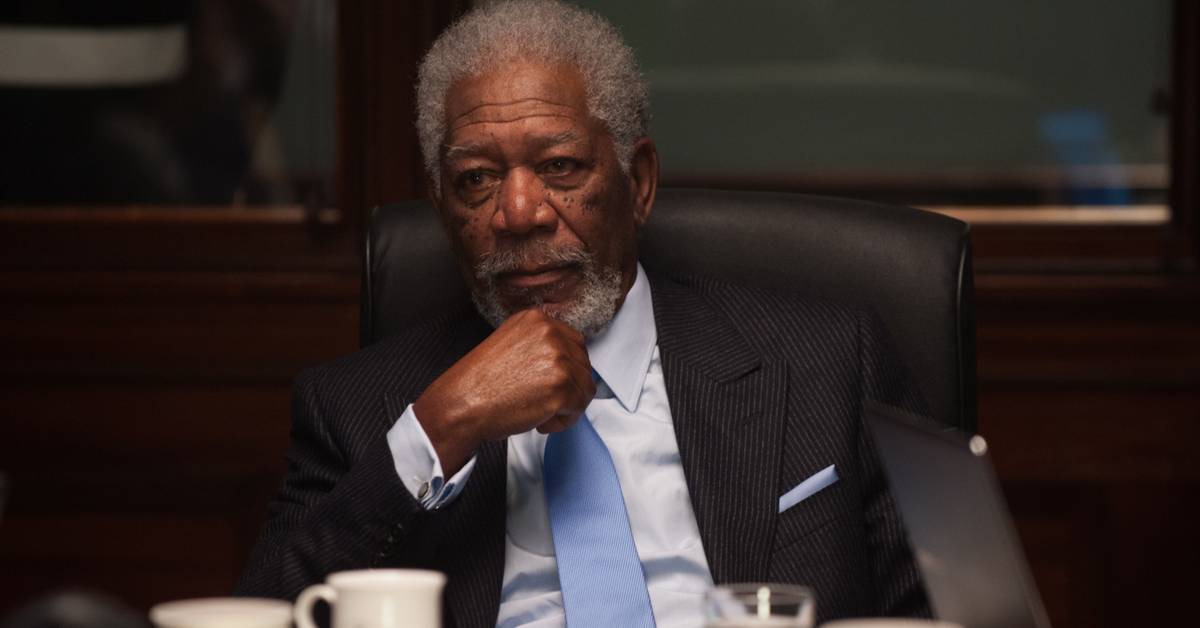

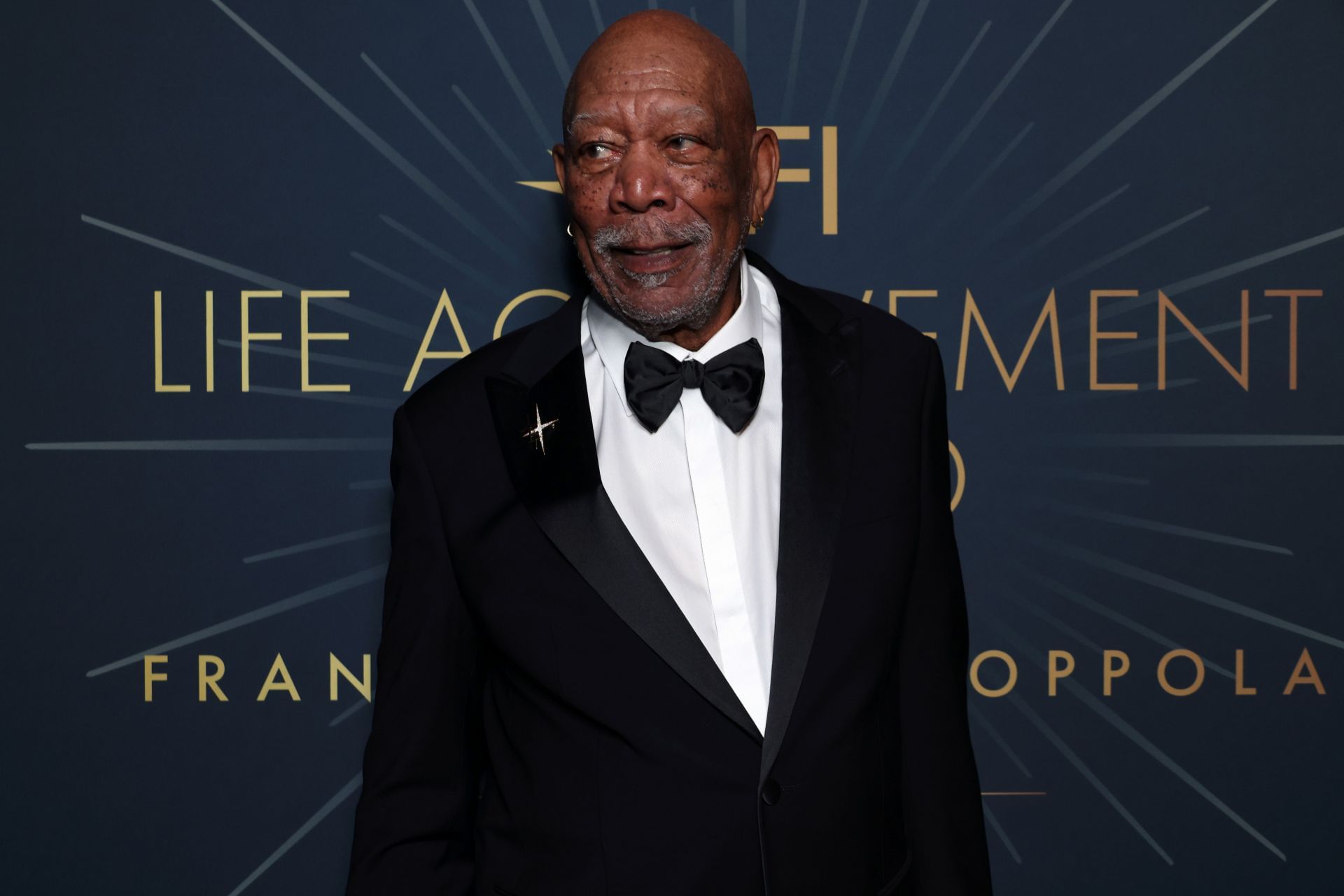

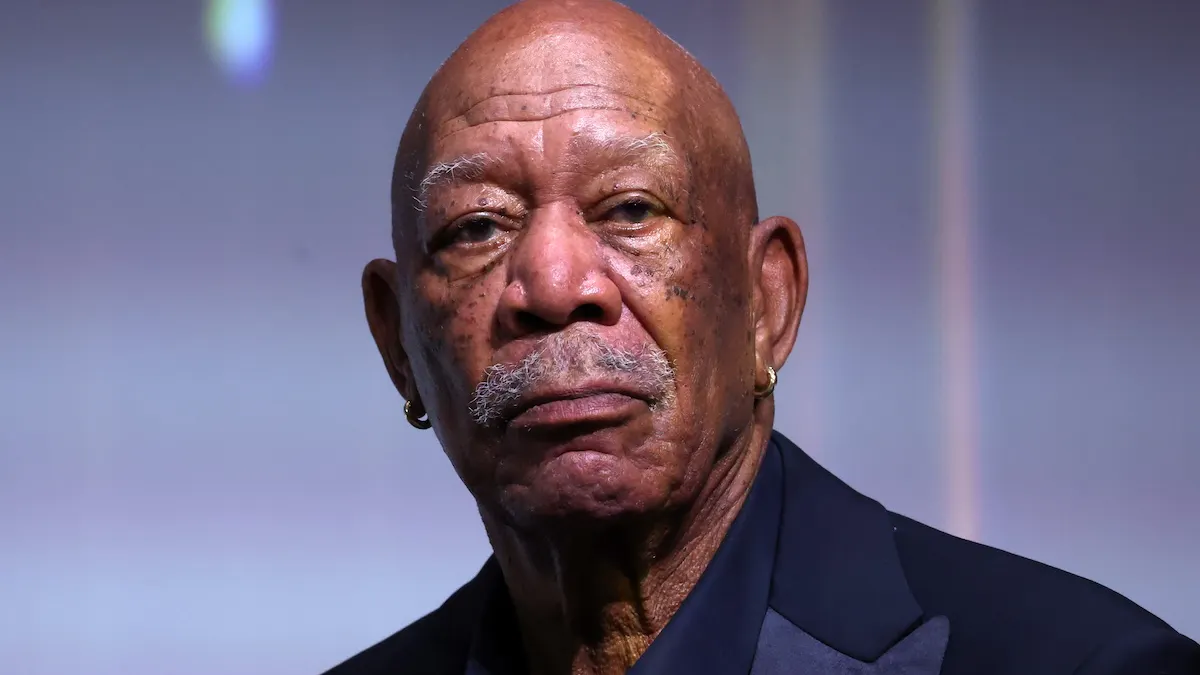

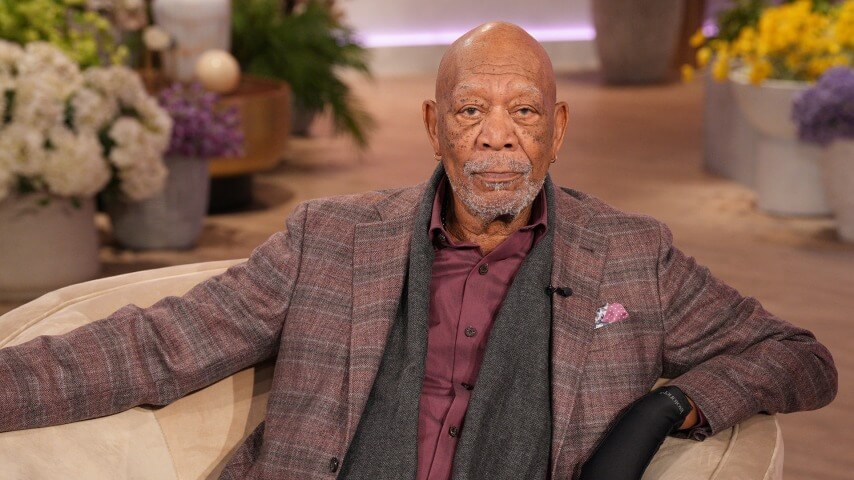

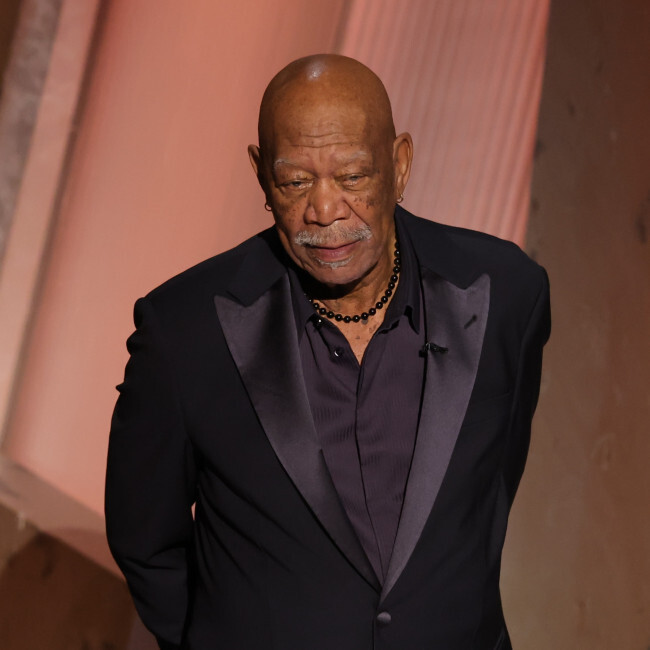

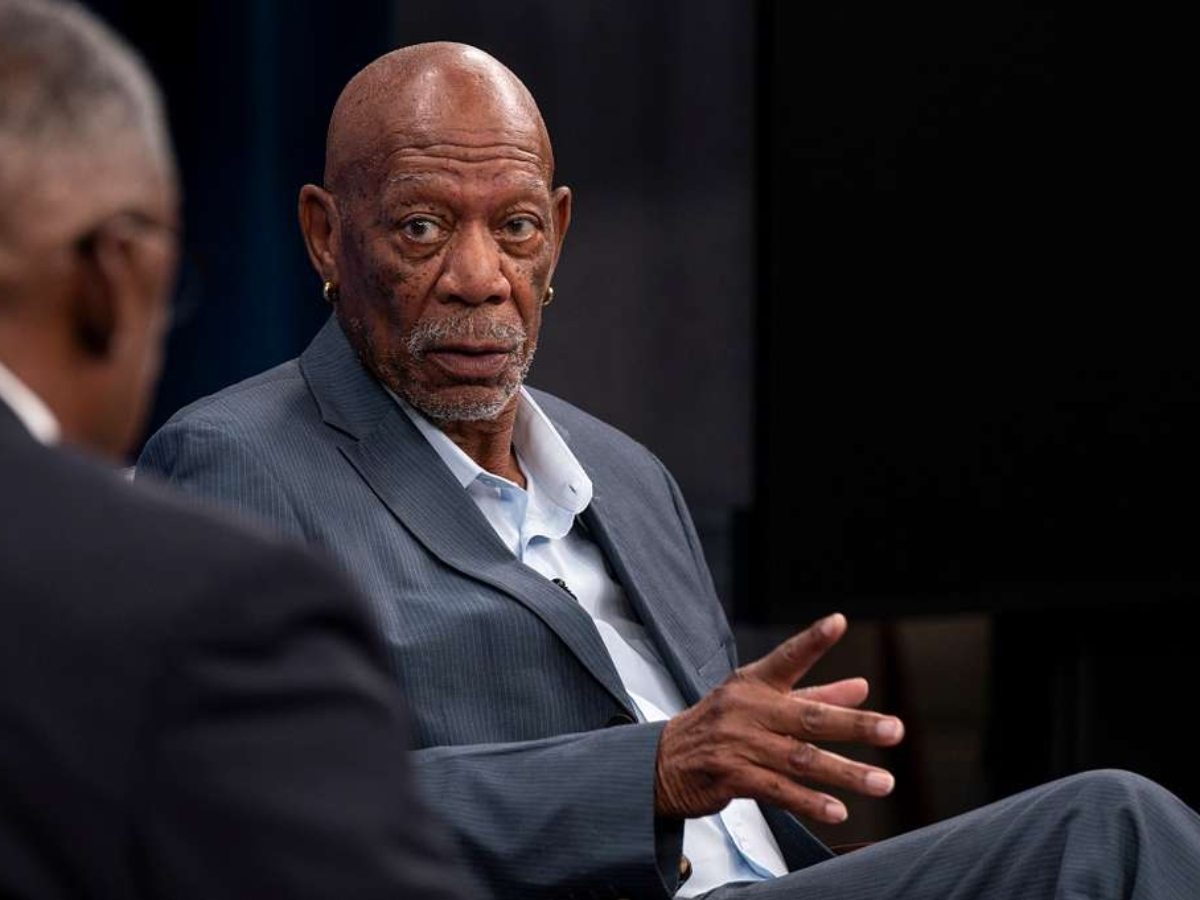

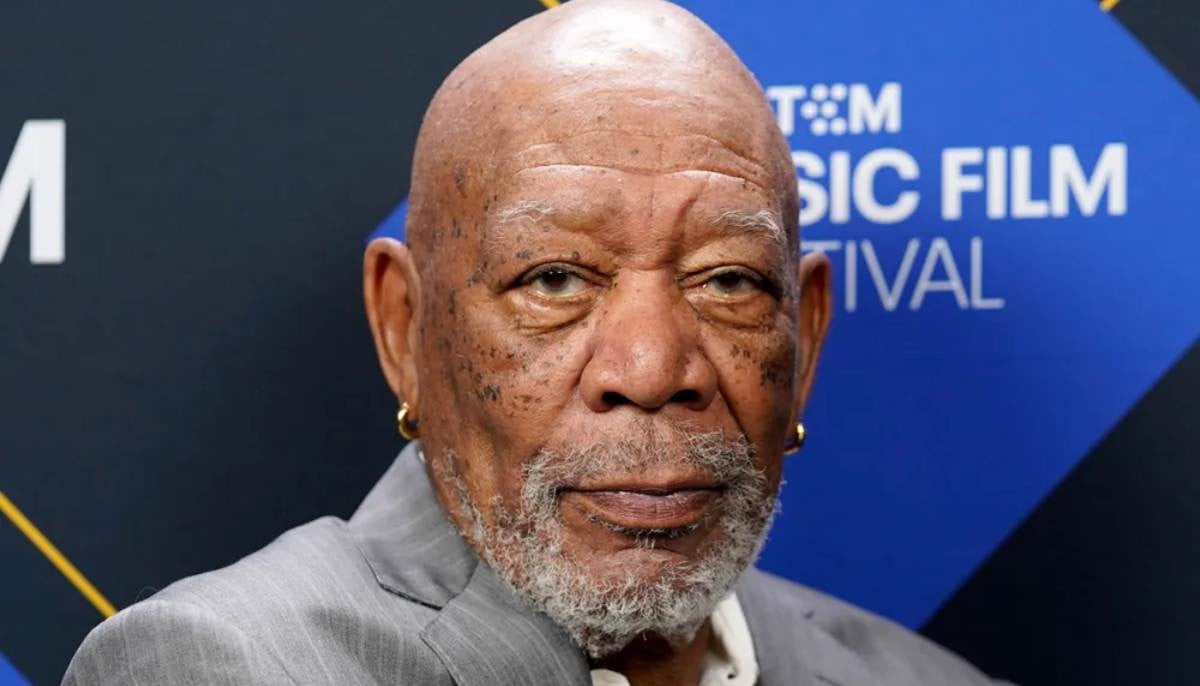

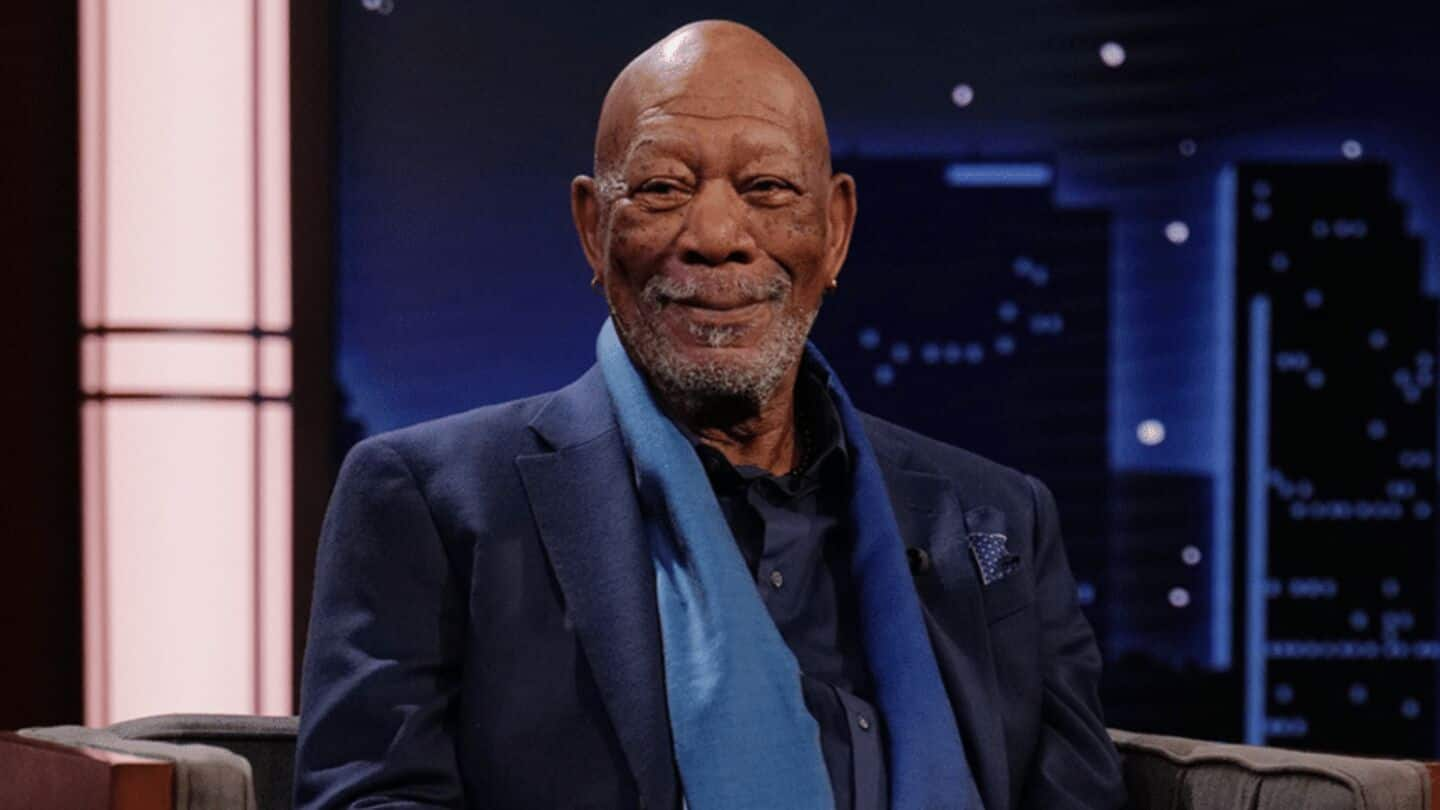

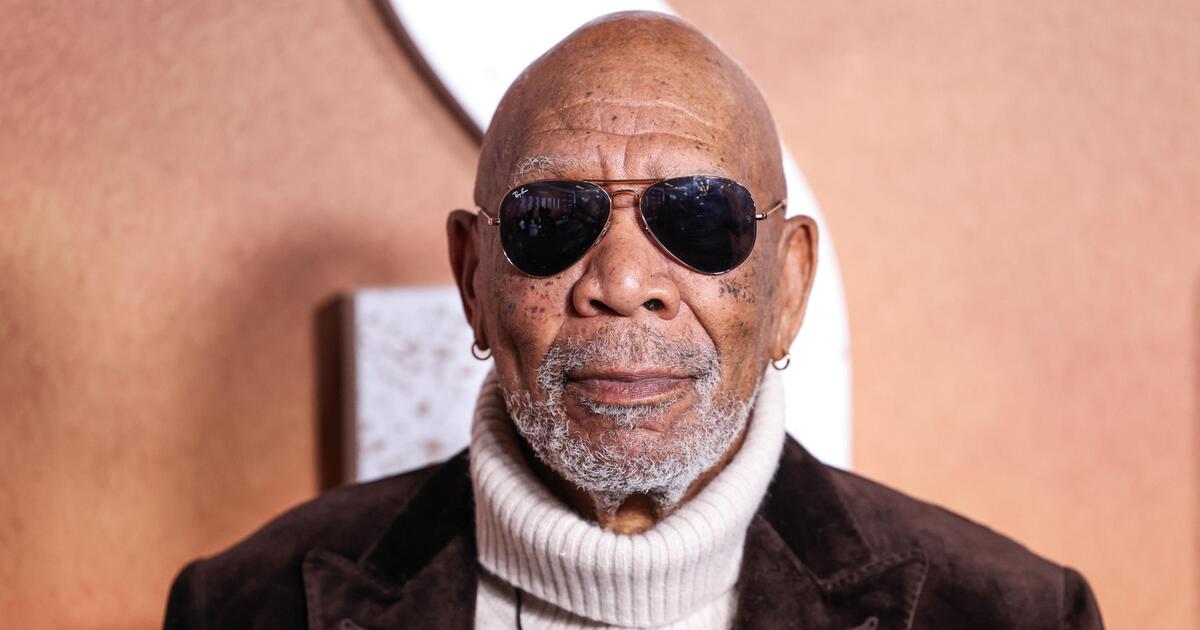

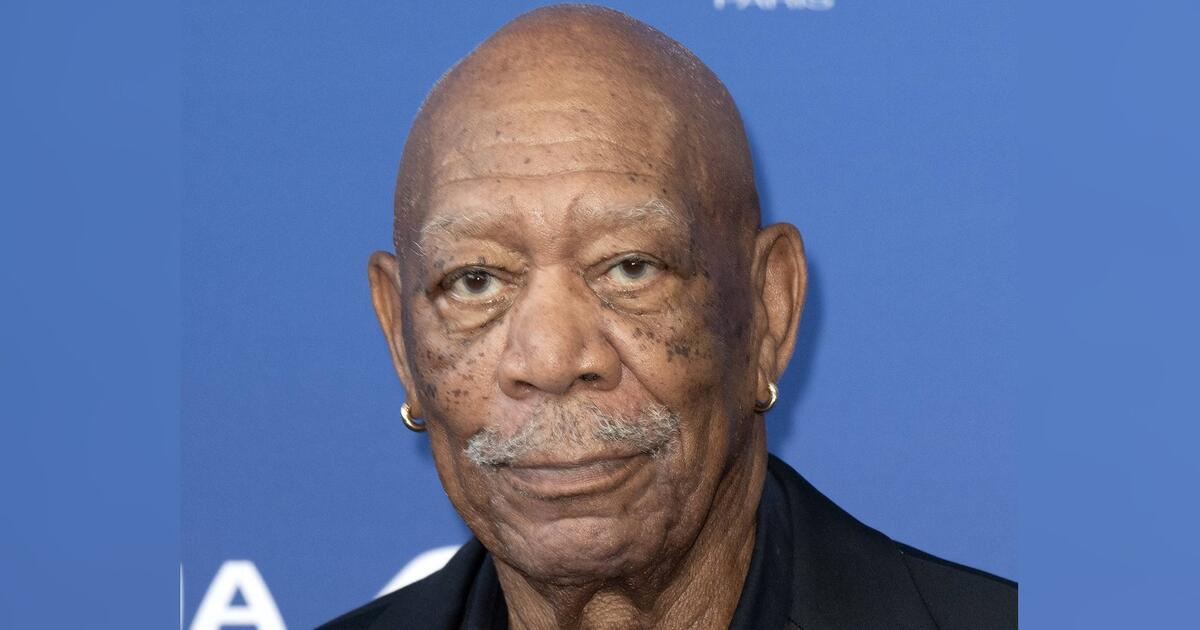

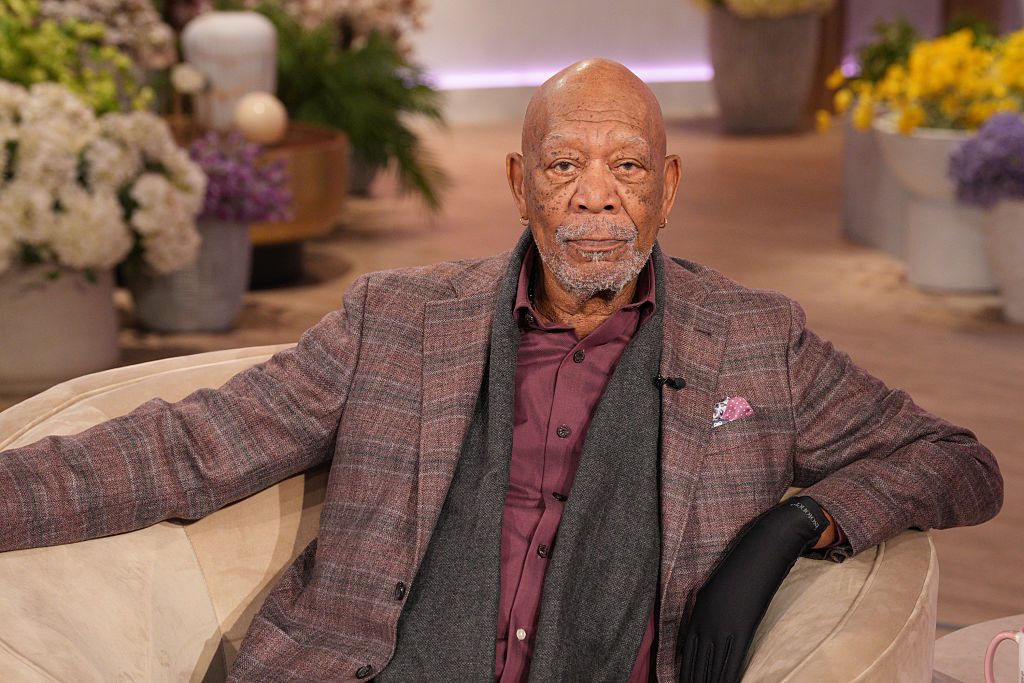

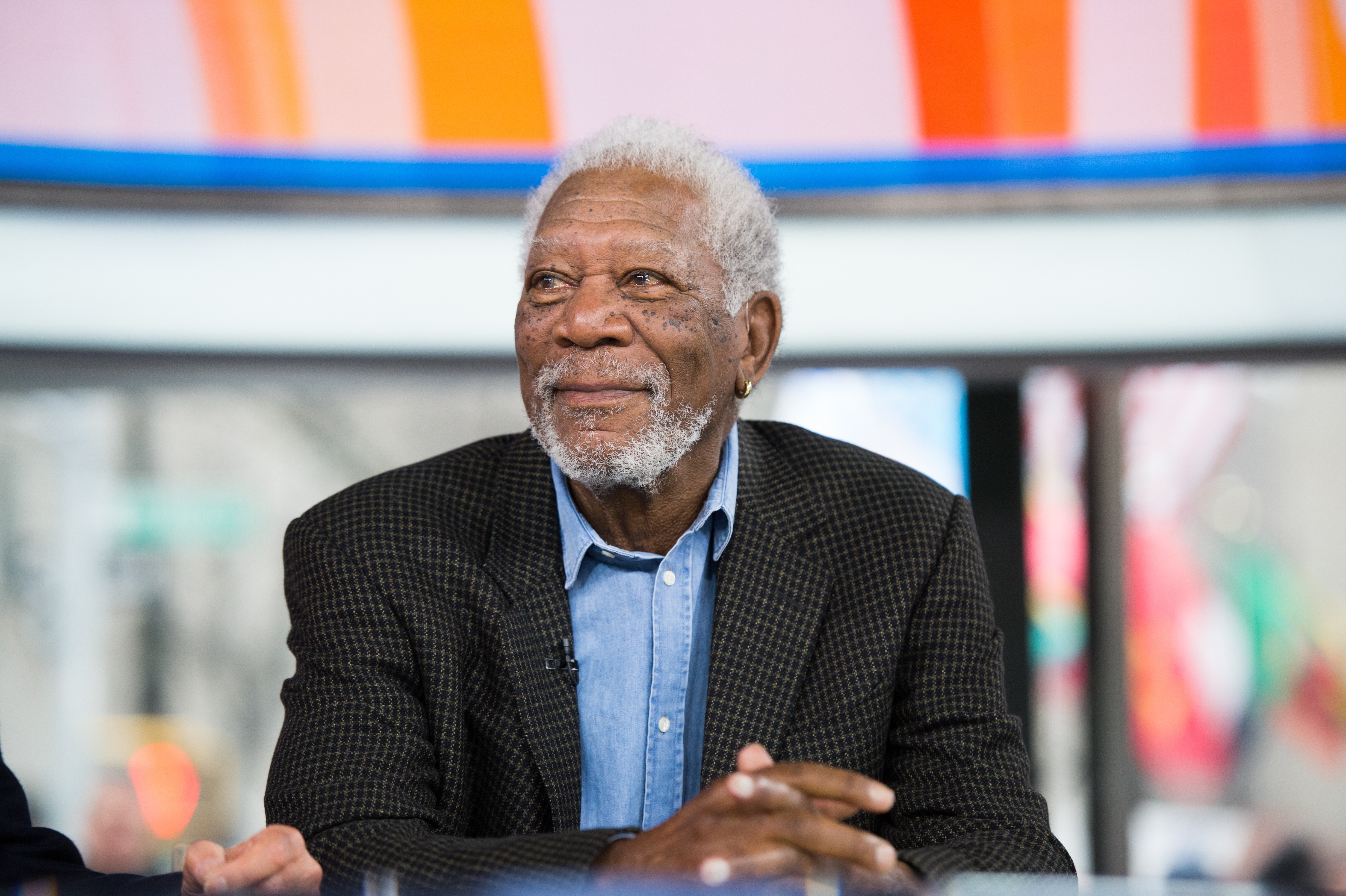

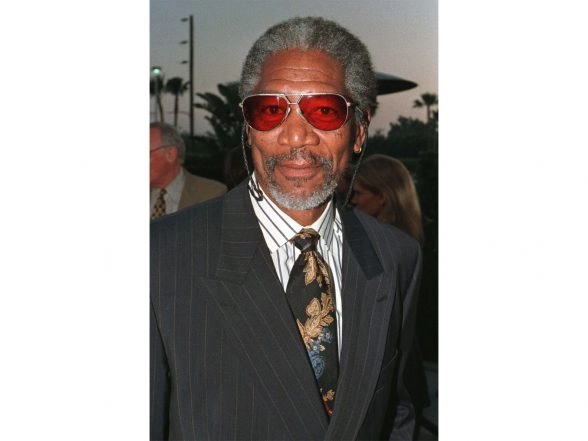

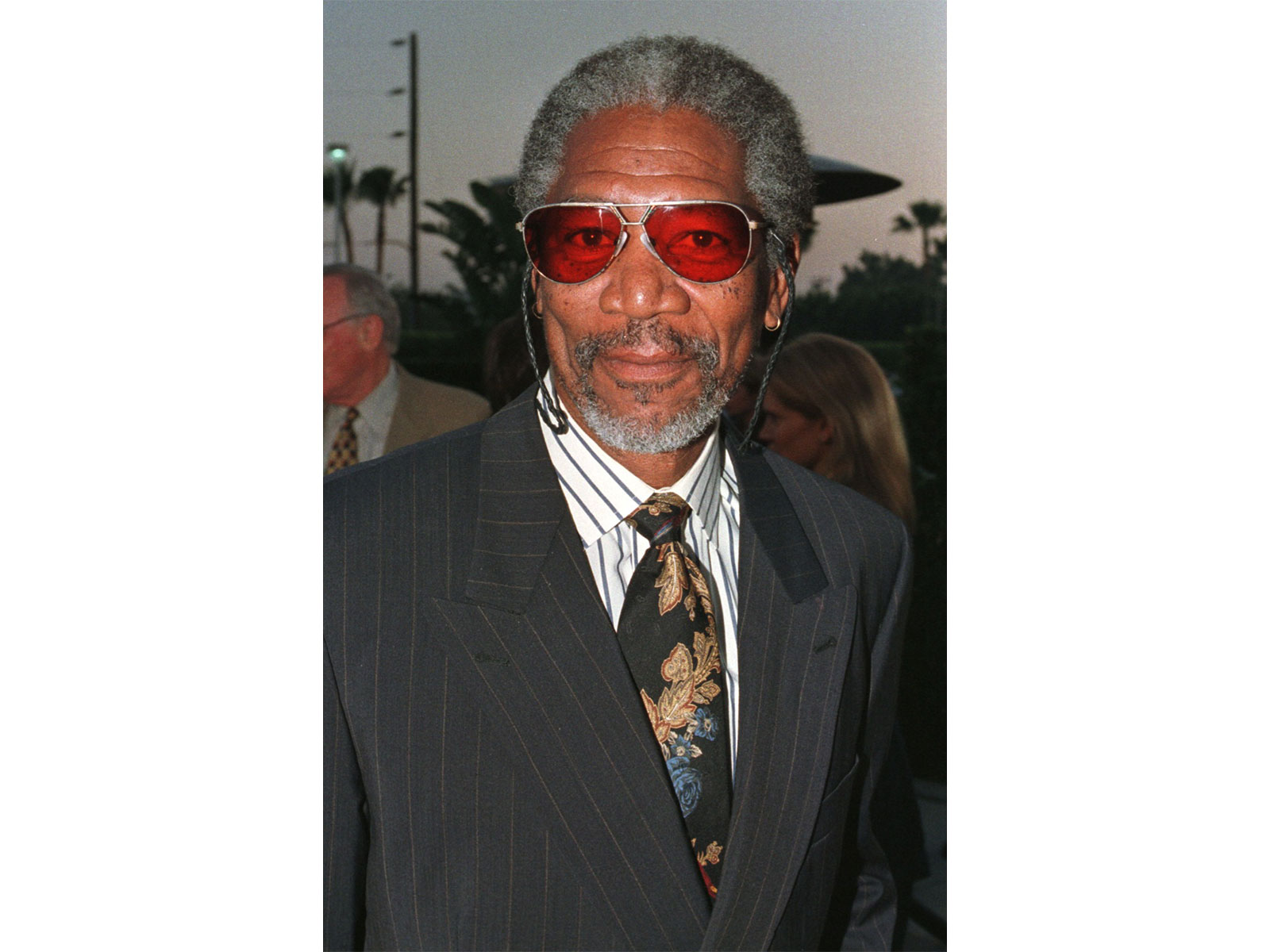

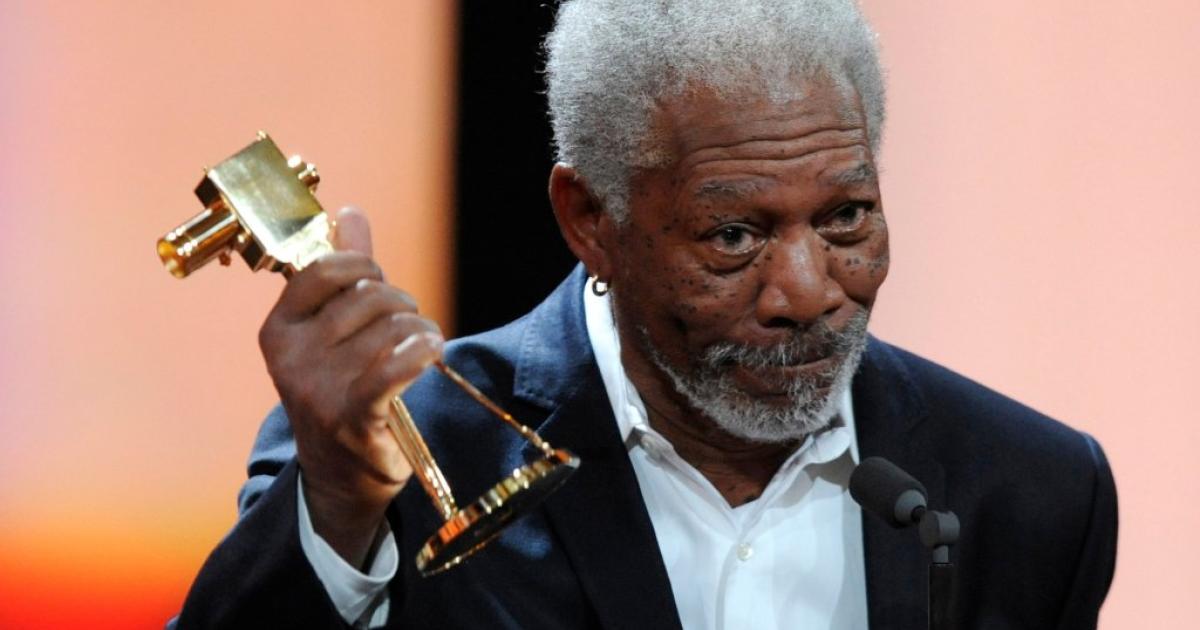

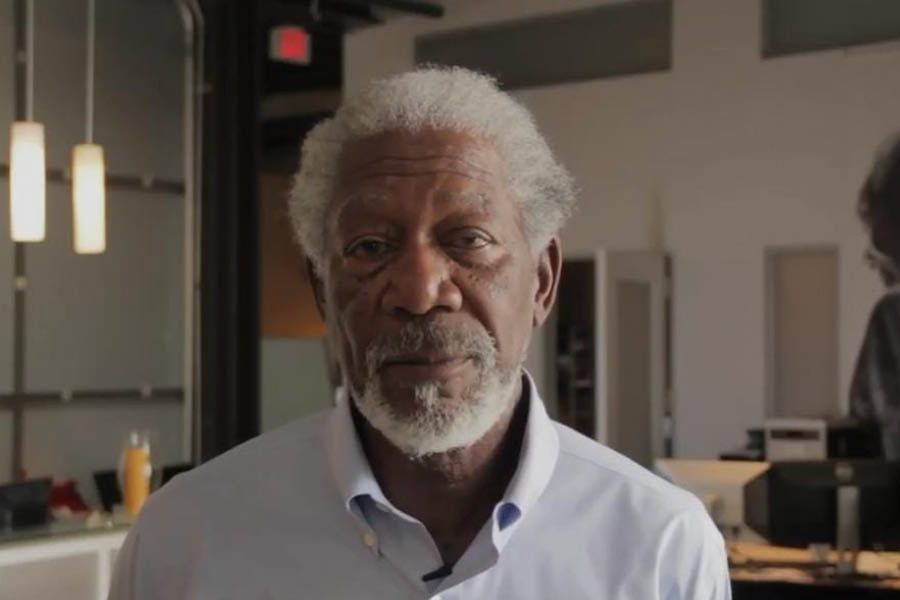

Morgan Freeman has condemned the unauthorized use of AI to clone his iconic voice, calling it theft and a violation of his intellectual property rights. The actor revealed his legal team is actively pursuing multiple cases where AI-generated voices have been used without his consent, resulting in lost work and exploitation.[AI generated]