The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

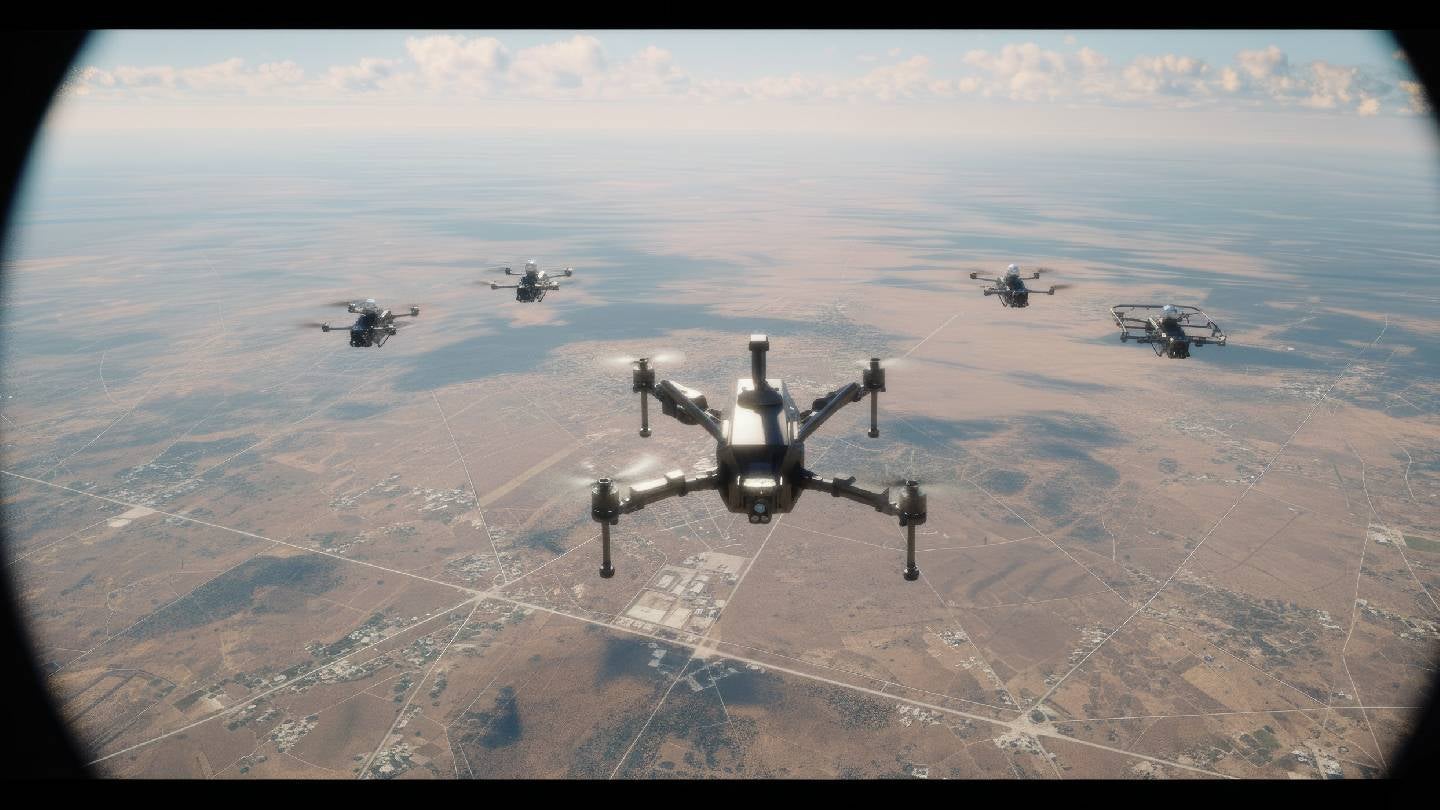

The US Department of Defense awarded Israeli company XTEND Reality Inc. a multi-million-dollar contract to supply AI-enabled attack drone kits, previously tested on civilians in Gaza. These drones, featuring autonomous swarm and precision strike capabilities, have been deployed in conflict zones, raising concerns over civilian harm and the use of AI in lethal operations. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event explicitly involves AI systems integrated into lethal drone kits used in combat operations, which directly relate to injury or harm to persons (harm category a). The AI systems' use in precision strikes and swarm control indicates autonomous or semi-autonomous decision-making capabilities that influence physical environments with lethal outcomes. The article states that these systems are battle-proven and deployed, indicating realized harm or, at a minimum, direct involvement in harm. Hence, this is not merely a potential hazard but an incident in which AI systems have contributed to harm. The presence of AI, the nature of the harm, and the operational deployment confirm classification as an AI Incident. [AI generated]