The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

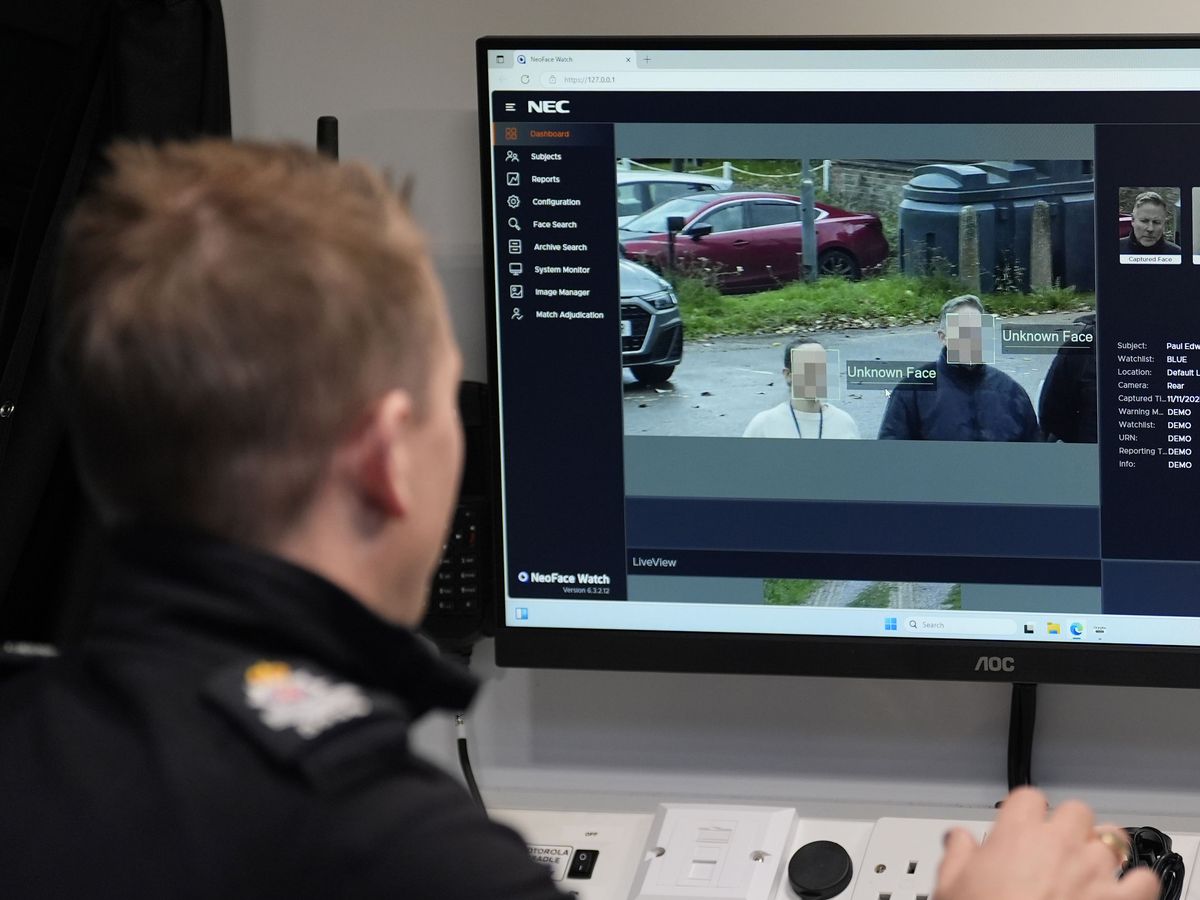

Seven UK police forces, including West Yorkshire, Greater Manchester, and Sussex, are deploying AI-powered facial recognition vans to identify suspects. Despite claims of low false alert rates, civil liberties and anti-racism groups warn of the technology's history of inaccuracies and racial bias, raising concerns about potential rights violations. [AI generated]