The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

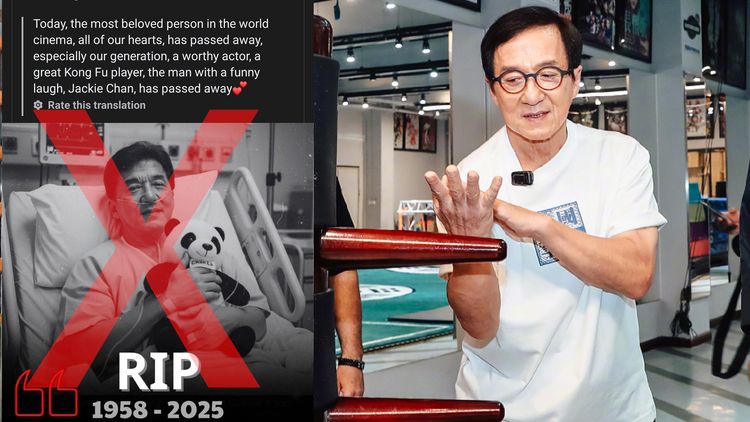

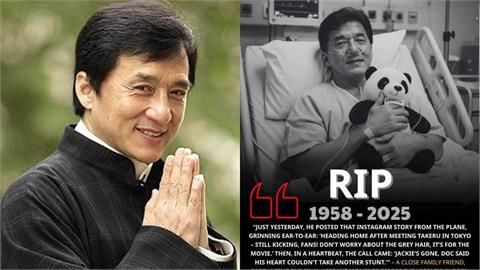

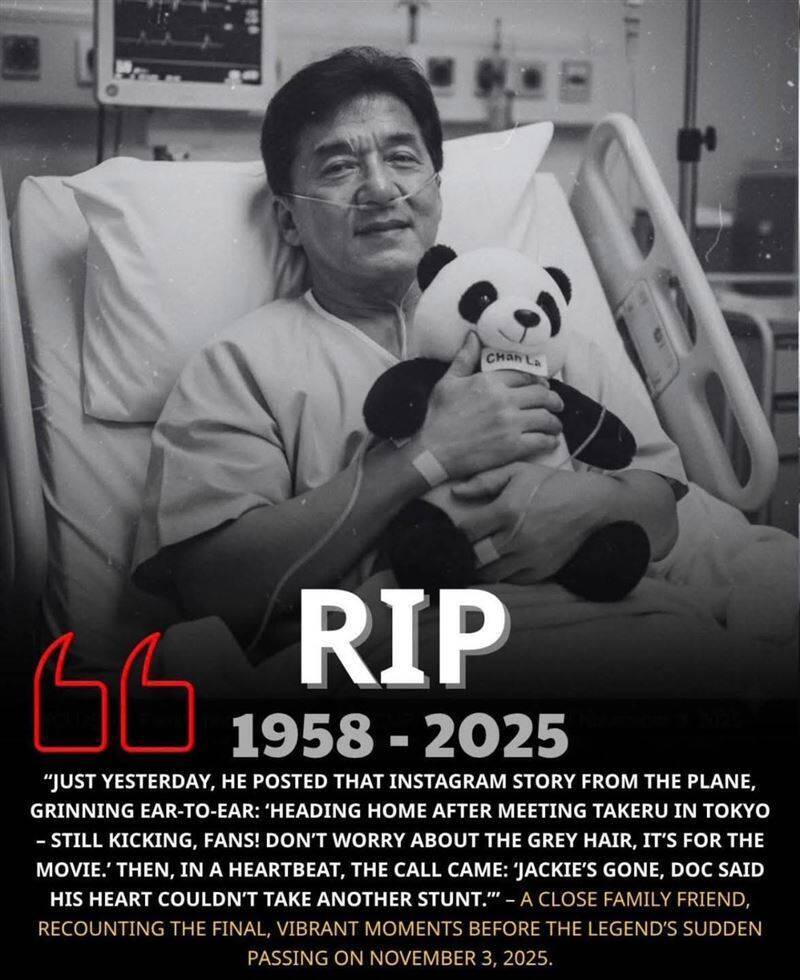

A Facebook page with 1.5 million followers used AI to generate a fake image of Jackie Chan on a hospital bed, falsely announcing his death. The AI-created photo and misinformation spread widely online, causing public confusion and distress before being debunked by media and Jackie Chan himself. The incident highlights AI's role in spreading harmful misinformation. [AI generated]