The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

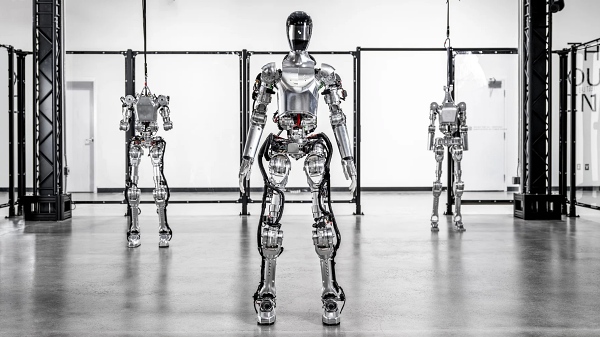

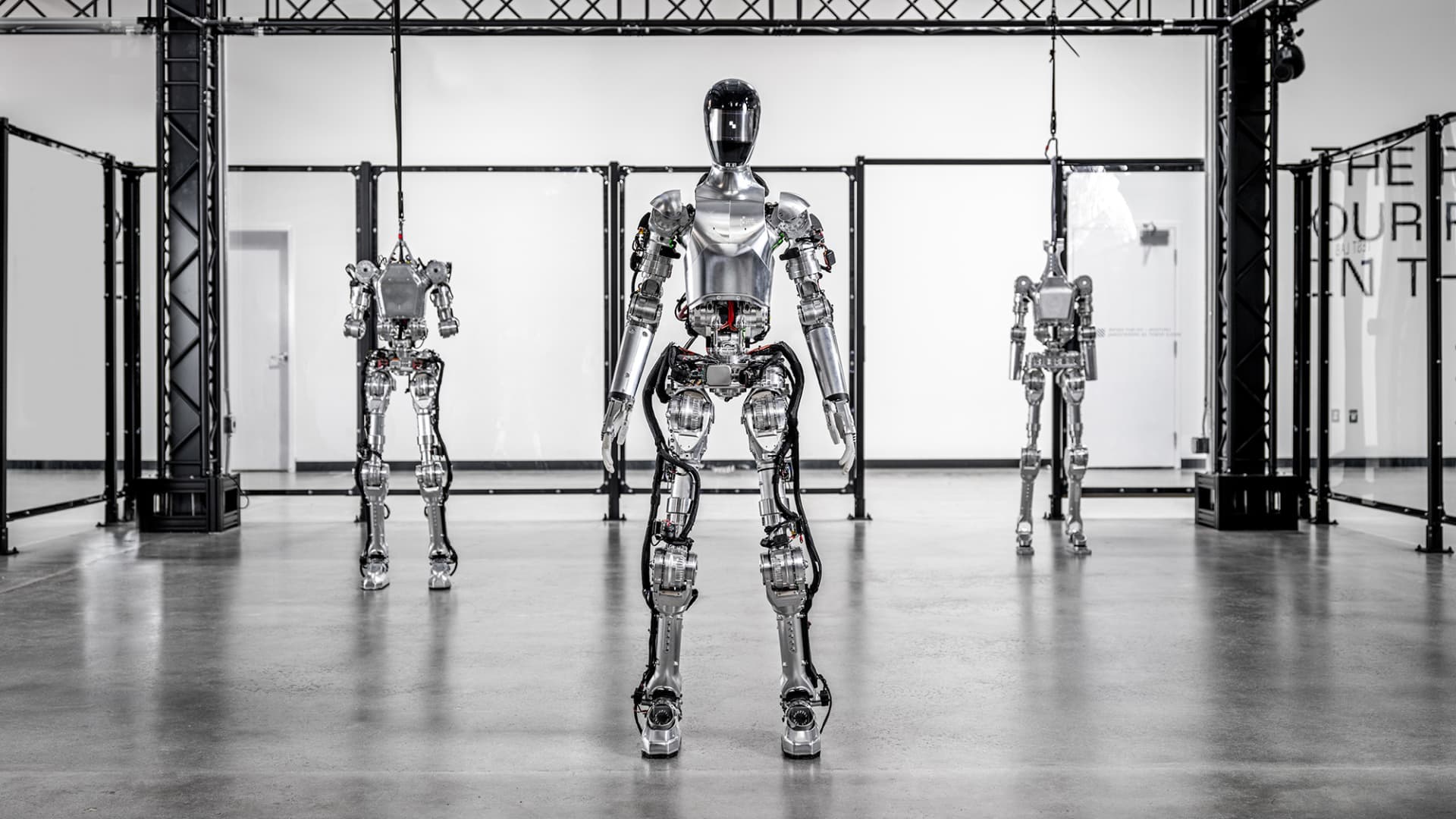

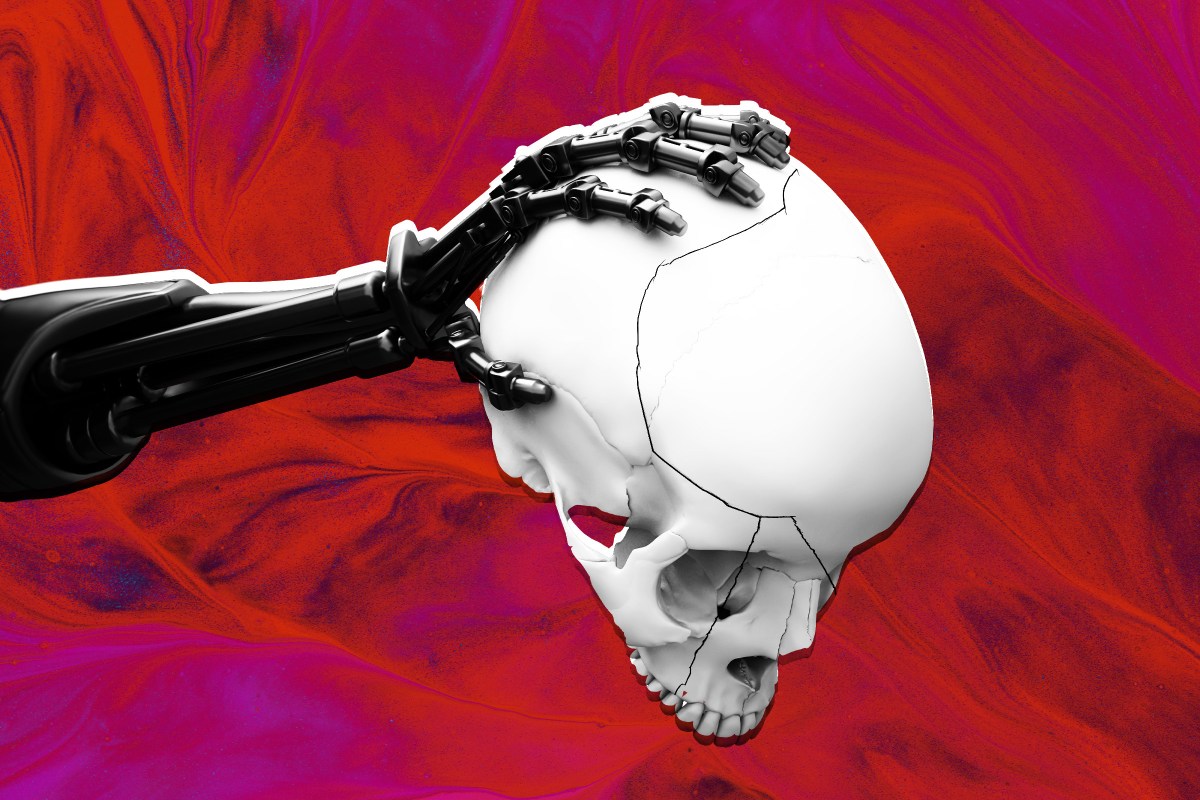

A former engineer has sued Figure AI, claiming he was fired after warning that the company's humanoid robots could exert enough force to fracture a human skull, posing serious safety risks. The lawsuit highlights concerns over inadequate safety measures in the AI-controlled robots' design and operation. [AI generated]