The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

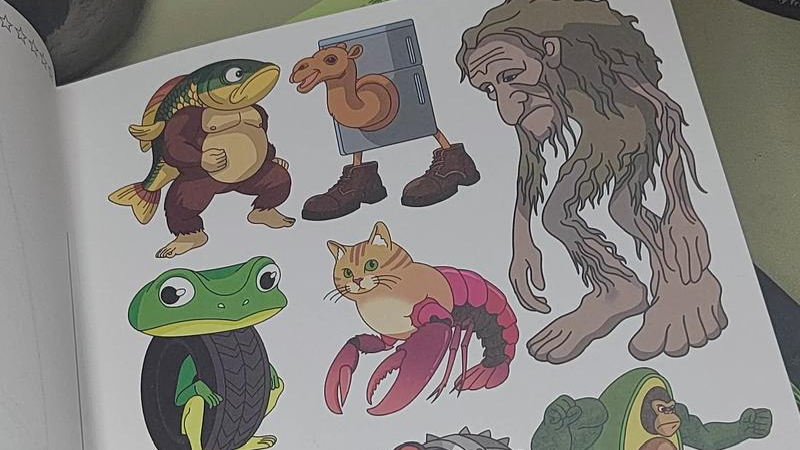

AI systems are being used to generate and spread disturbing, violent, and culturally distorting videos and images that are widely consumed by children in China. This "AI-modified" content, often algorithmically recommended, has led to psychological harm, confusion about traditional culture, and parental concern, highlighting failures in content moderation and platform responsibility. [AI generated]

Why's our monitor labelling this an incident or hazard?

The event involves AI-generated content (AI system involvement) that is widely disseminated to and consumed by children, raising concerns about mental and cultural harm. The harm is indirect but plausible and significant: psychological experts warn of the negative impact on children's mental health and cultural perception. This fits the definition of an AI Incident because the use of the AI system has directly or indirectly led to harm to a group of people (children) in terms of mental health and cultural understanding. The article describes an ongoing situation rather than a future risk, so the event is classified as an incident and not as a hazard or complementary information. [AI generated]