The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

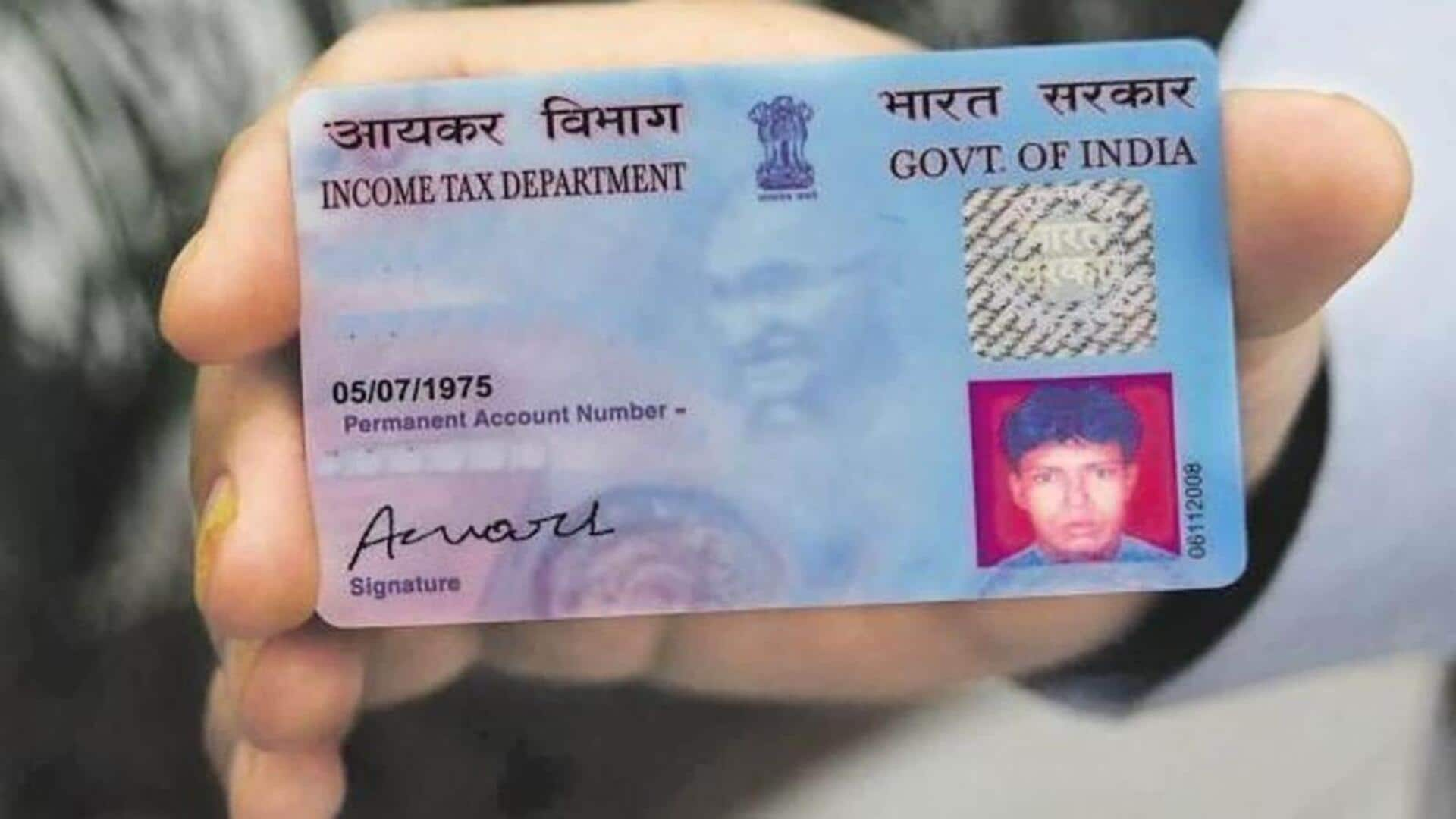

Google's Nano Banana Pro AI tool has been used to generate highly realistic fake Indian identity documents, such as Aadhaar and PAN cards. Experts and users warn that these AI-generated fakes can bypass legacy verification systems, raising serious concerns about identity theft and fraud in India. [AI generated]