The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

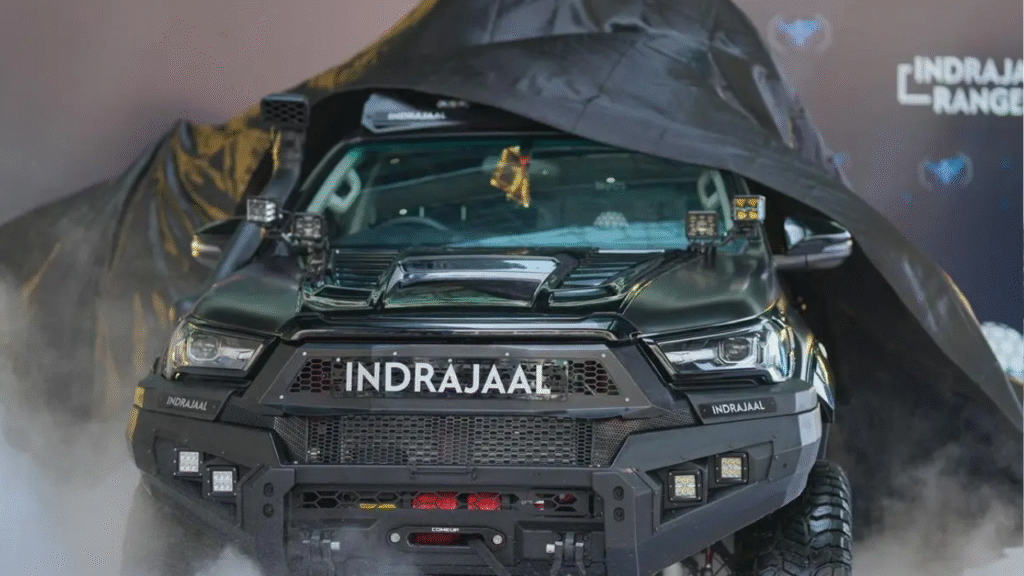

Indrajaal Drone Defence in Hyderabad has launched the Indrajaal Ranger, India's first AI-enabled, fully autonomous anti-drone patrol vehicle. Designed to detect, track, and neutralize hostile drones, the system aims to prevent drone-based smuggling and attacks along India's borders, enhancing national security through real-time autonomous threat response. [AI generated]
