The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

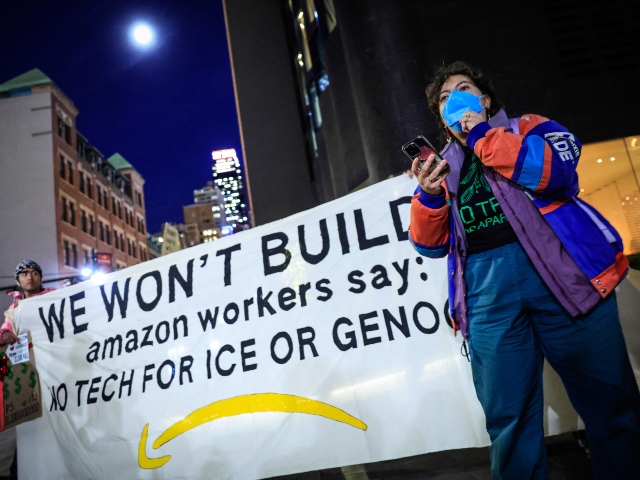

Over 1,000 Amazon employees, supported by thousands from other tech firms, signed an open letter warning that Amazon's rapid AI development could harm jobs, the environment, and democratic norms. The workers criticize the company's aggressive AI strategy, citing risks of layoffs, increased emissions, and societal impacts. [AI generated]