The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

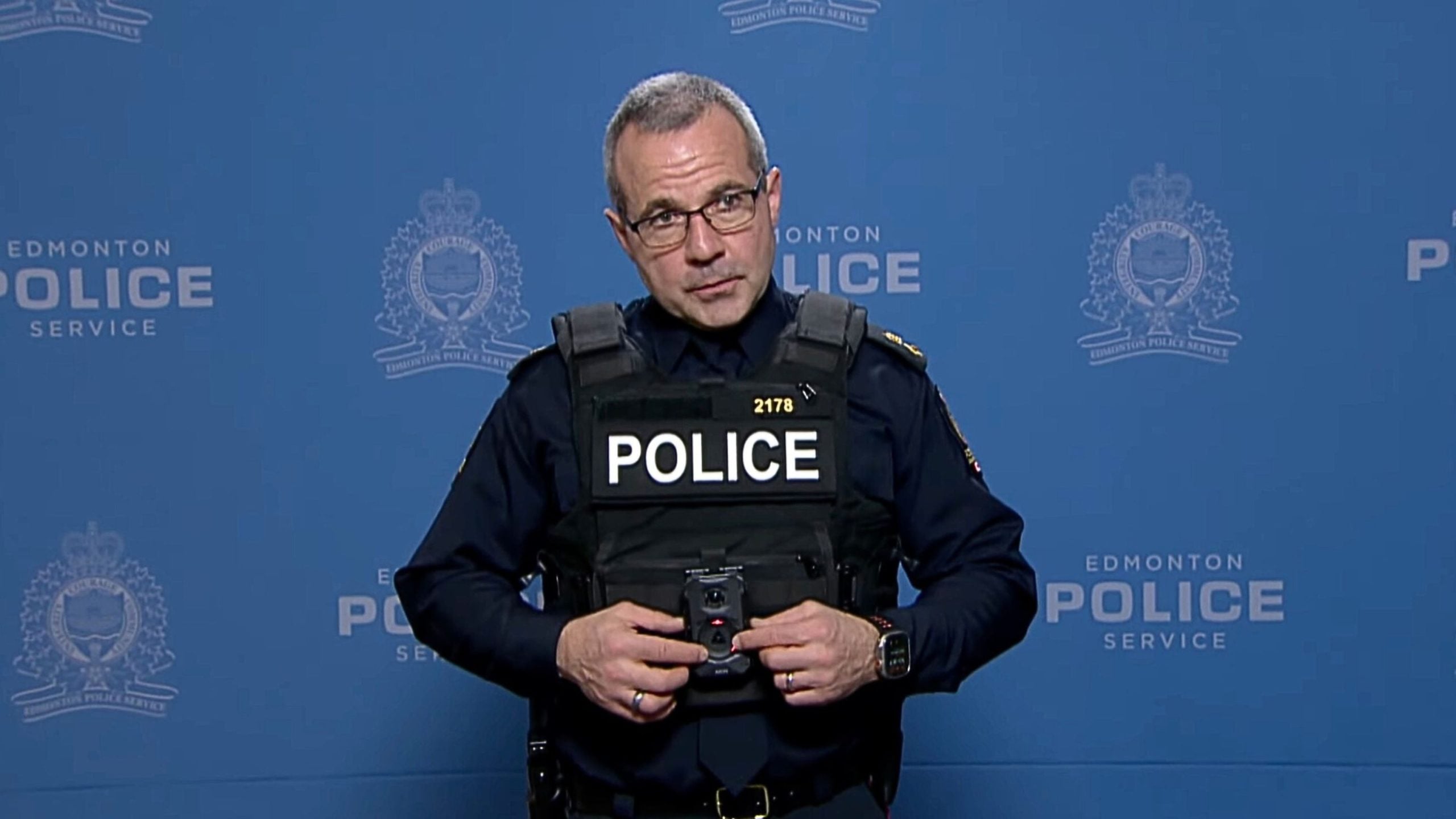

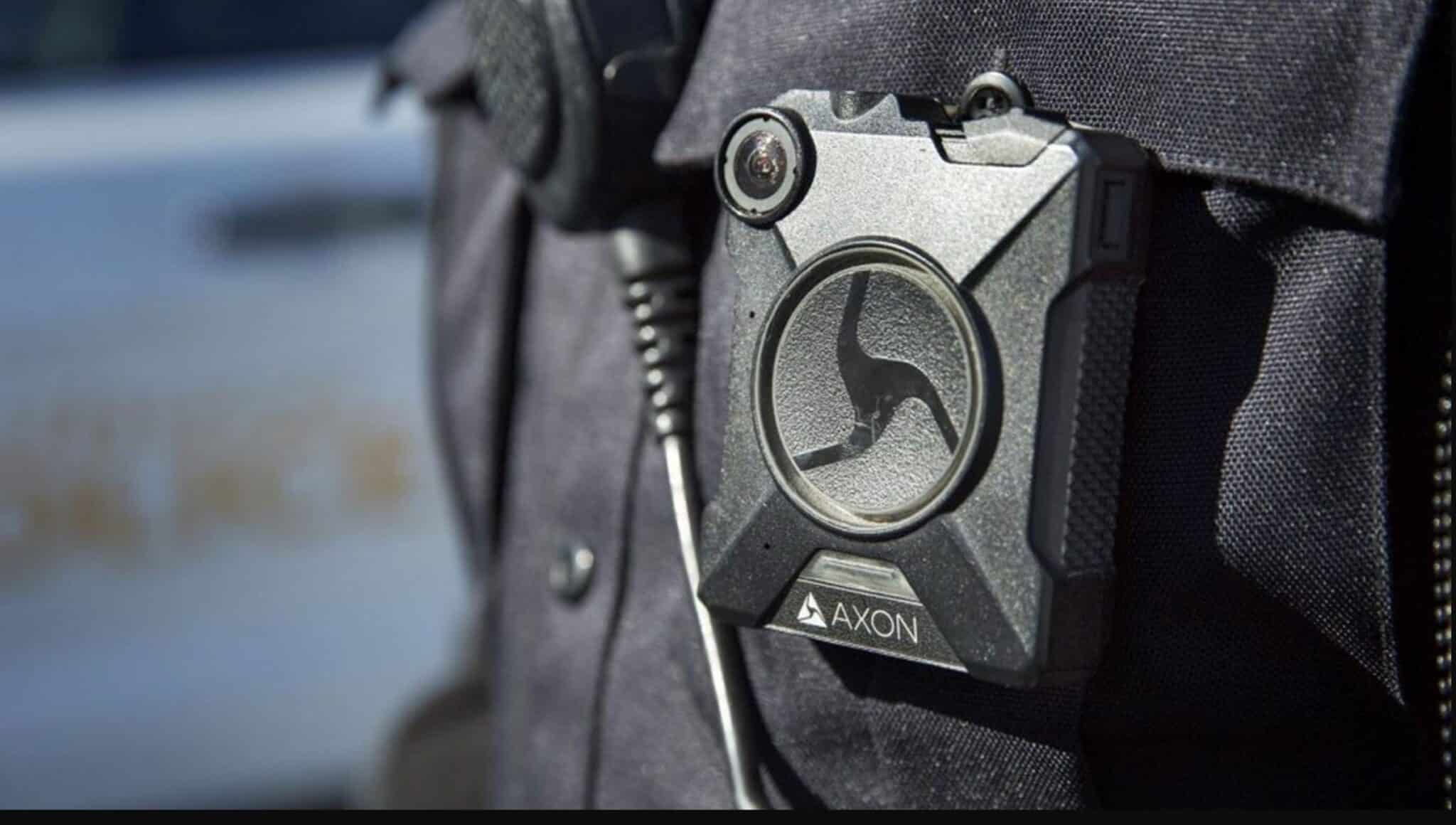

The Edmonton Police Service in Canada has become the first in the world to trial Axon's body cameras equipped with facial recognition technology. Up to 50 officers will use the cameras to identify individuals with outstanding warrants. The trial has raised concerns about privacy, potential misidentification, and human rights risks. [AI generated]