The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

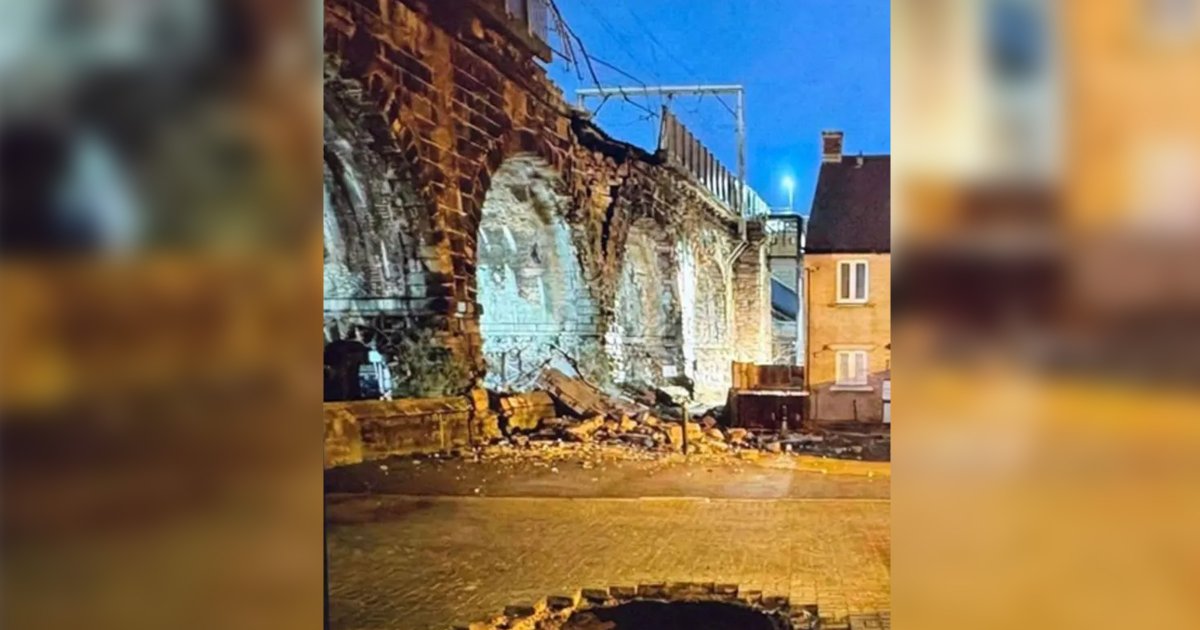

An AI-generated fake image showing severe damage to the Carlisle Bridge in Lancaster, UK, circulated on social media after a minor earthquake. Believing the image to be genuine, Network Rail suspended train services for safety checks, delaying dozens of trains and significantly disrupting rail operations.[AI generated]