The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

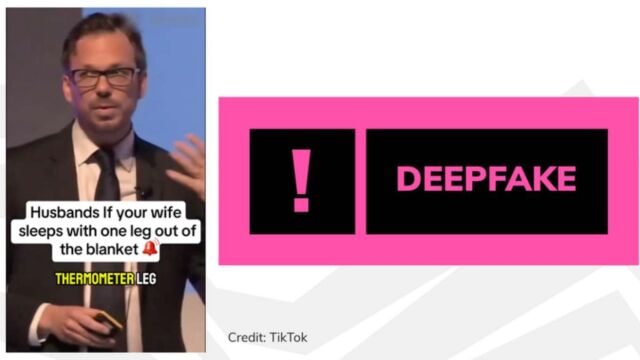

AI-generated deepfake videos featuring fake doctors have flooded TikTok and other social media platforms, promoting unproven supplements and spreading false health information. The videos, built from manipulated real footage, mislead users, especially menopausal women, into buying products from a U.S. supplement company, and have prompted calls for stricter regulation of AI-generated content. [AI generated]