The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

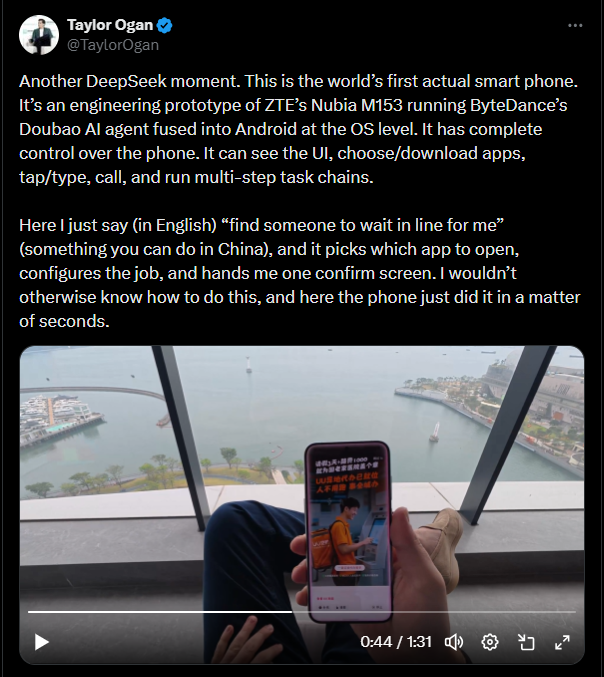

ByteDance's AI-powered smartphone, featuring the agentic Doubao assistant, faced widespread backlash in China after major apps restricted or blocked its functions. The AI's autonomous operations led to account suspensions, app crashes, and privacy concerns, prompting ByteDance to disable features in financial and gaming apps to mitigate harm. [AI generated]