The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

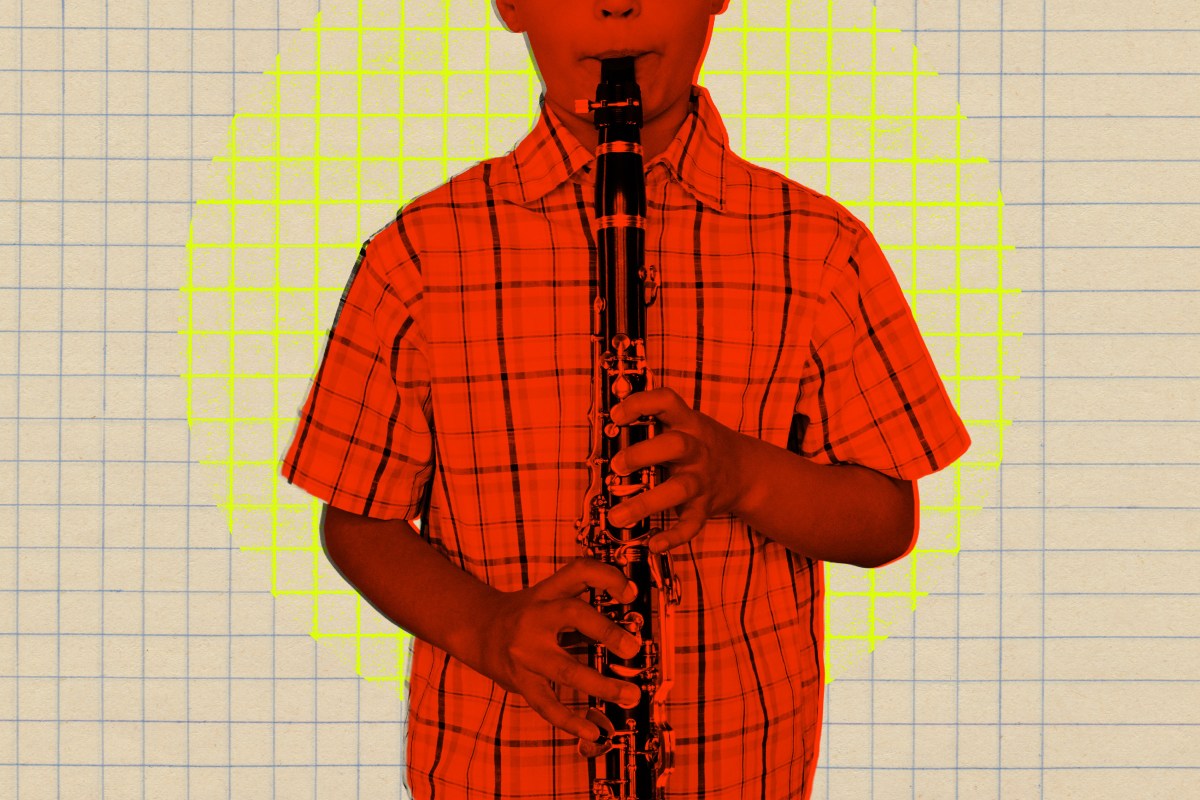

An AI-powered weapons detection system at Lawton Chiles Middle School in Oviedo, Florida, mistakenly identified a student’s clarinet as a gun, triggering a Code Red lockdown. The false alarm disrupted school operations and caused concern among students and staff, highlighting risks of AI misclassification in critical safety systems. [AI generated]
