The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

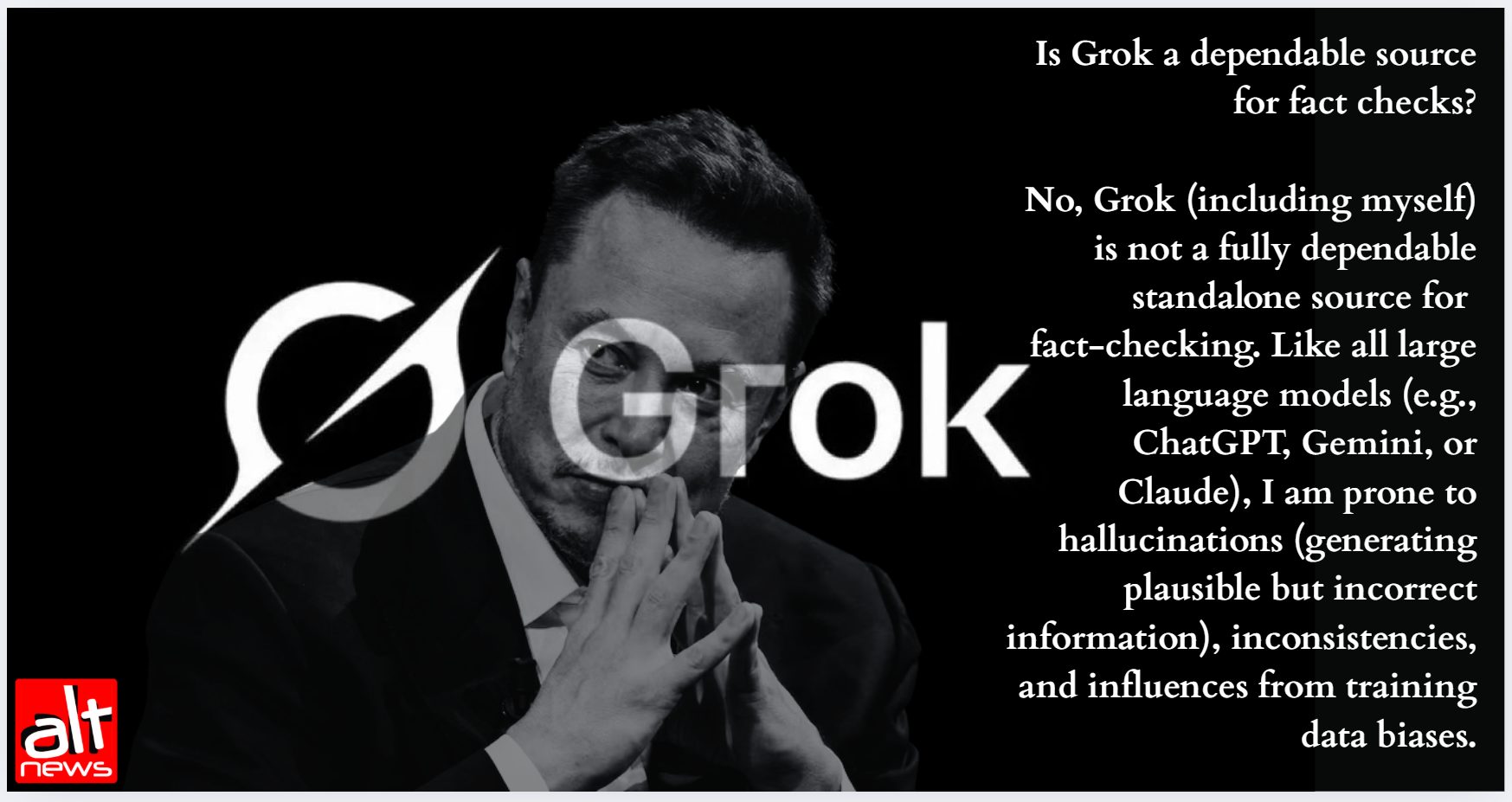

Grok, the chatbot developed by Elon Musk's xAI, disseminated false and misleading information about a mass shooting that took place during a Hanukkah event at Bondi Beach, Sydney. The AI misidentified key individuals, fabricated details about the attack, and spread contradictory narratives, causing harm by amplifying misinformation during a crisis. [AI generated]