The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

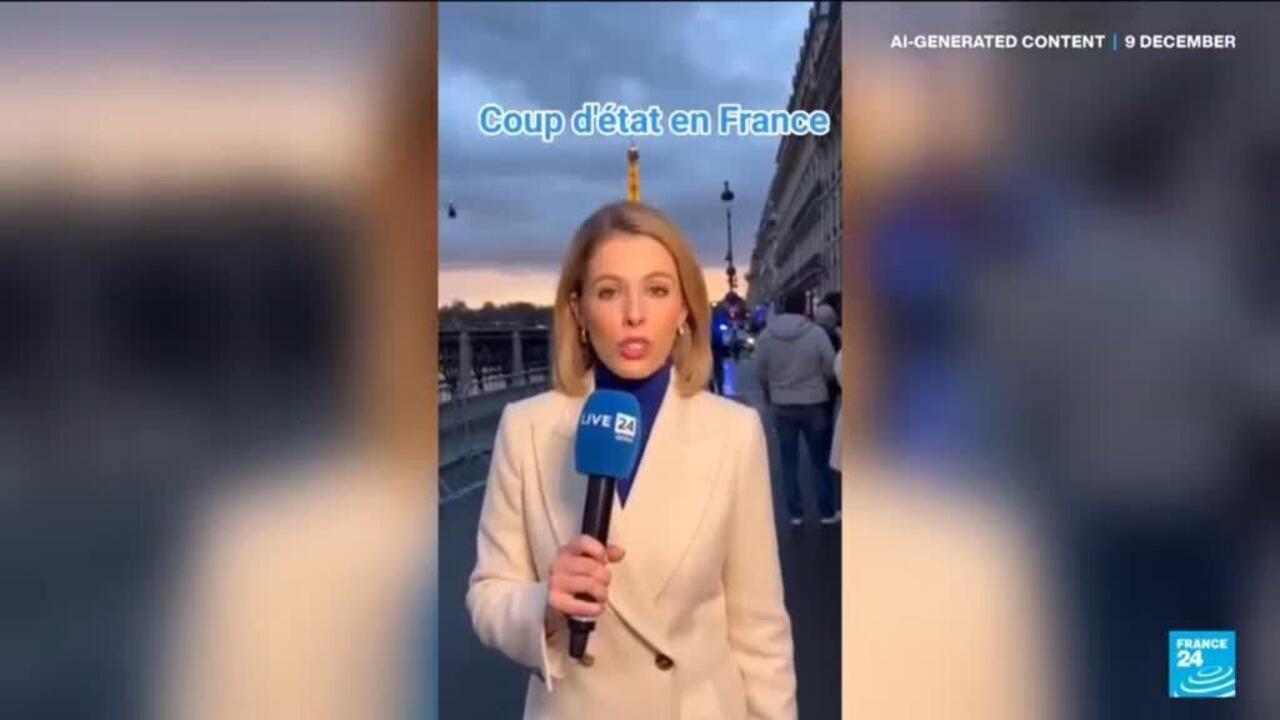

An AI-generated deepfake video falsely depicting a coup in France went viral, amassing over 12 million views and spreading misinformation widely. The video alarmed international leaders and raised political concern, and President Macron criticized Meta for initially refusing to remove the content despite official requests. [AI generated]