The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

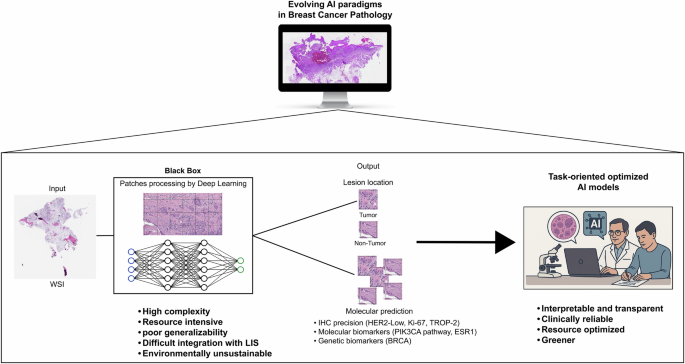

Multiple studies led by Harvard Medical School reveal that AI models used for cancer diagnosis from pathology slides perform unequally across demographic groups, resulting in less accurate diagnoses for certain populations. Researchers identified causes of this bias and developed a tool to mitigate it, highlighting the need for systematic bias checks in medical AI. [AI generated]