The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

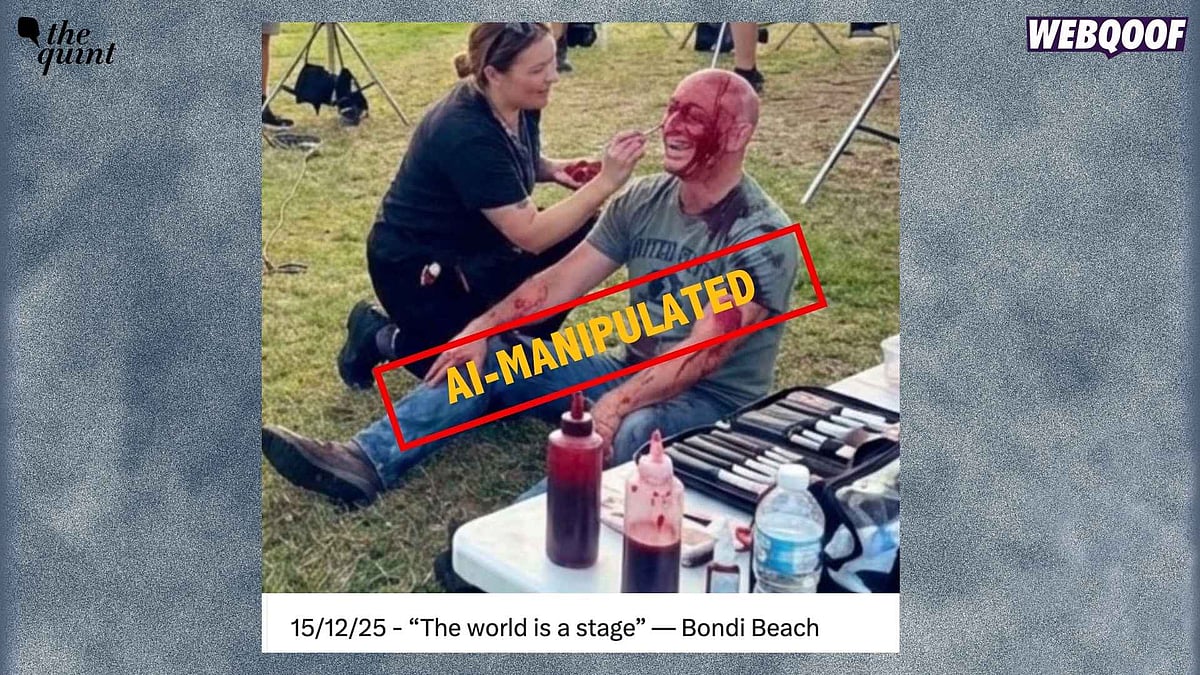

An AI-generated image falsely depicting a Bondi Beach shooting victim having fake blood applied was widely circulated online, fueling conspiracy theories that the attack was staged. The image, which was flagged as AI-generated by Google's SynthID watermark, misled the public and harmed the reputation of the victim, Arsen Ostrovsky, in Australia.[AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly states that an AI-generated image is being used to falsely claim the Bondi shooting was staged, spreading misinformation that damages the community's understanding and trust. The AI system played a pivotal role by generating the fake image that fuels these false narratives. The harm is realized and ongoing, as the false claims have spread widely on social media. This fits the definition of an AI Incident because the AI system's use has directly contributed to harm to communities through the dissemination of misinformation.[AI generated]