The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

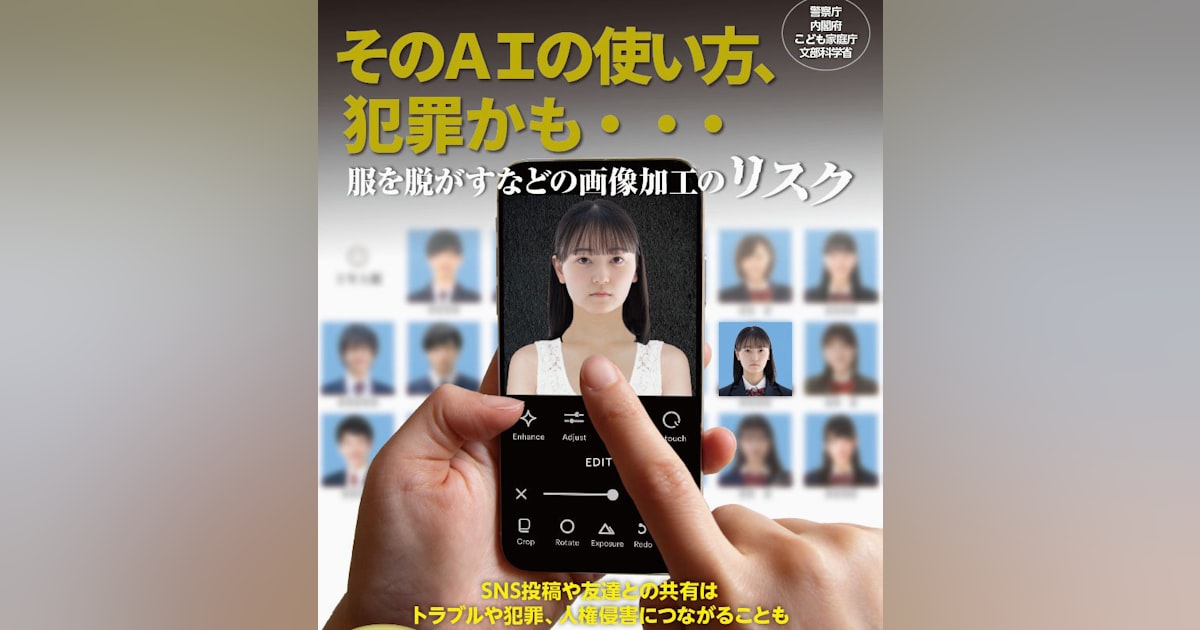

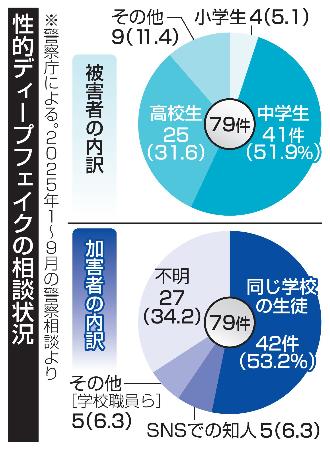

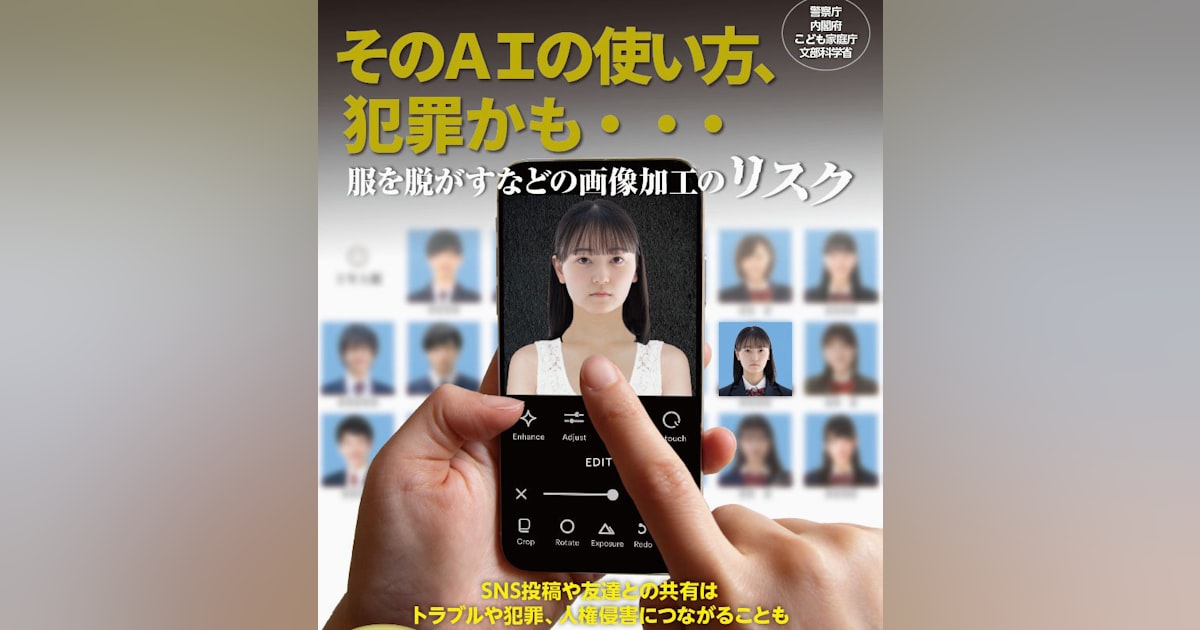

Japanese police reported 79 cases from January to September 2025 involving AI-generated sexual deepfake images of minors, most of them targeting middle and high school students. About half of the perpetrators were classmates. Authorities responded with legal action, public warnings, and inter-agency cooperation to address the growing harm caused by misuse of generative AI. [AI generated]

Why's our monitor labelling this an incident or hazard?

The article explicitly mentions the use of generative AI to create sexual deepfake images of minors, a clear example of an AI system's use leading to harm. The harms include violations of human rights and dignity, defamation, and sexual exploitation of children, all of which are serious legal and ethical violations. The involvement of AI in producing these images, together with the resulting legal actions, confirms that this is an AI Incident as per the definitions provided. [AI generated]