The information displayed in the AIM should not be reported as representing the official views of the OECD or of its member countries.

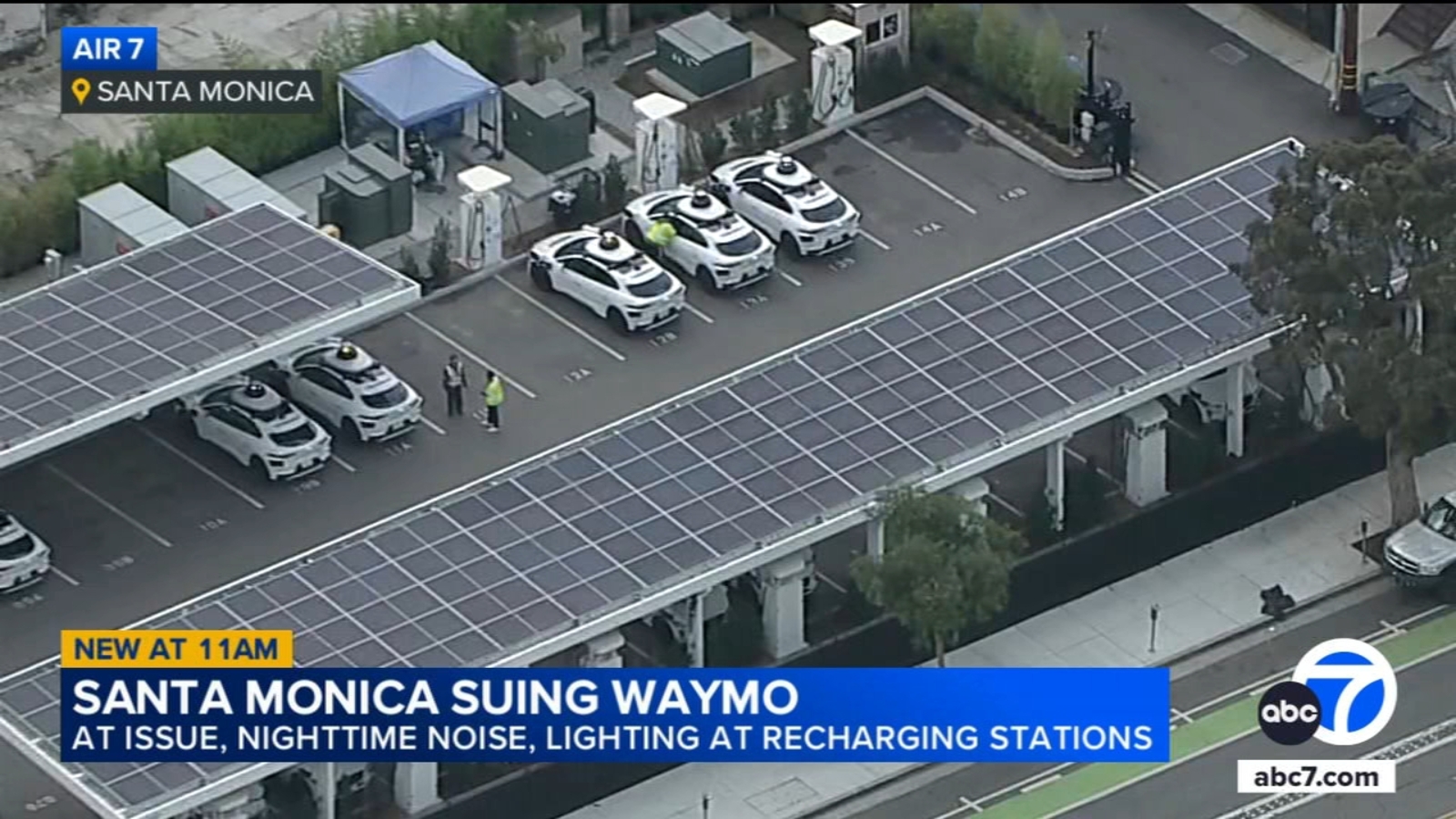

Waymo's autonomous vehicles disrupted the community in Santa Monica with noise and light from round-the-clock charging operations, prompting a city order to halt overnight activity and a subsequent lawsuit filed by Waymo. Separately, a power outage in San Francisco left Waymo's self-driving fleet stalled, causing traffic gridlock and raising concerns about the resilience of AI systems during emergencies.[AI generated]